SearXNG in 2026: Architecting Sovereign Retrieval for the AI Era

In 2026, the search landscape has shifted from simple web browsing to complex, agent-driven information discovery. As centralized search engines become increasingly cluttered with AI-generated noise and privacy-invasive tracking, SearXNG has emerged as a critical infrastructure component for developers and self-hosters. This guide explores how to leverage this open-source metasearch engine as a sovereign retrieval layer, providing a private and high-performance foundation for local AI agents and advanced RAG (Retrieval-Augmented Generation) pipelines.

1. Introduction: The Rise of Sovereign Search

The digital landscape of 2026 has fundamentally altered our relationship with information retrieval. As mainstream search engines become increasingly saturated with AI-generated filler and aggressive tracking telemetry, the demand for sovereign search infrastructure has transitioned from a niche privacy concern to a technical necessity. In this environment, SearXNG stands as a defiant abstraction layer.

Defining “Sovereign Search” in this era means more than just blocking ads; it is about controlling the retrieval layer—the very “eye” through which your AI models and personal agents see the web. By self-hosting your search infrastructure, you ensure that your data queries remain private and that the information grounding your local Large Language Models (LLMs) is not filtered by the commercial biases of search giants. SearXNG has emerged as the nexus of this movement, providing the open-source plumbing necessary to build a truly independent discovery stack.

For further insights on the core philosophy, see “SearXNG: what is it and how does this open source metasearch engine work?”

2. Architecture: What SearXNG Actually Is

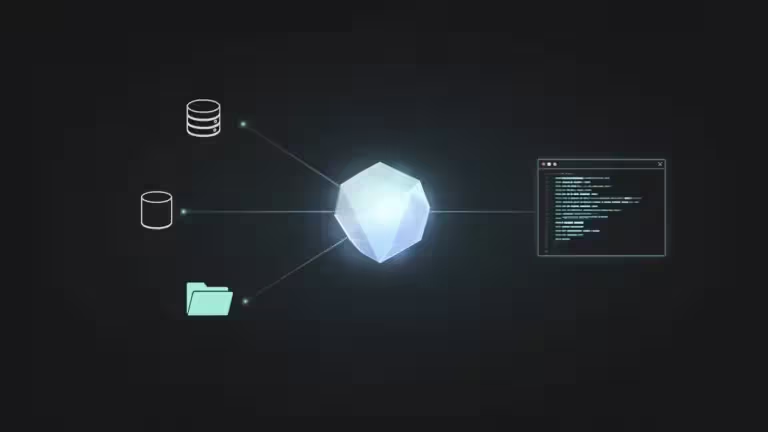

At its core, SearXNG is not a search engine in the traditional sense; it is a sophisticated metasearch abstraction layer. While Google or Bing maintain massive, proprietary databases of the crawled web, SearXNG remains entirely stateless. It serves as a real-time gateway that sits between the user and over 70 distinct information sources.

The Metasearch Model

The fundamental architecture is designed to decouple the query from the identity of the searcher.

- The “Index-less” Engine: SearXNG does not crawl the web or store a persistent index of websites.

- Real-time Dispatch: Every time a user submits a query, SearXNG simultaneously contacts multiple configured engines.

- Privacy Shielding: By acting as a proxy, it prevents the destination engines from seeing the user’s IP address, browser fingerprint, or search history.

Aggregation vs. Indexing

Unlike traditional engines that rank pages based on complex, opaque AI algorithms, SearXNG focuses on result aggregation and deduplication.

- Normalization: It retrieves raw data from various backends and converts it into a standardized internal format.

- Deduplication: If a specific URL is returned by multiple engines, SearXNG identifies the overlap and merges the results into a single entry to reduce noise.

- Basic Scoring: While it does not perform deep neural ranking, it applies basic scoring logic based on the frequency and position of a result across multiple sources.
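The normalization and deduplication steps above can be sketched in a few lines of Python. This is a minimal illustration, not SearXNG's own code: the `MergedResult` type, the `normalize_url` rules, and the input shape (a dict of engine name to raw result dicts) are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from urllib.parse import urlsplit


@dataclass
class MergedResult:
    """Hypothetical normalized record: one entry per unique URL."""
    url: str
    title: str
    snippet: str
    engines: set[str] = field(default_factory=set)
    positions: list[int] = field(default_factory=list)


def normalize_url(url: str) -> str:
    """Build a comparison key: drop the scheme, 'www.' and any trailing slash."""
    parts = urlsplit(url)
    host = parts.netloc.lower().removeprefix("www.")
    key = host + parts.path.rstrip("/")
    if parts.query:
        key += "?" + parts.query
    return key


def merge_results(per_engine: dict[str, list[dict]]) -> list[MergedResult]:
    """Merge raw per-engine results so each URL appears only once."""
    merged: dict[str, MergedResult] = {}
    for engine, results in per_engine.items():
        for position, raw in enumerate(results, start=1):
            key = normalize_url(raw["url"])
            entry = merged.get(key)
            if entry is None:
                entry = MergedResult(raw["url"], raw["title"], raw.get("snippet", ""))
                merged[key] = entry
            elif len(raw.get("snippet", "")) > len(entry.snippet):
                # Keep the longer snippet when two engines describe the same page.
                entry.snippet = raw["snippet"]
            entry.engines.add(engine)
            entry.positions.append(position)
    return list(merged.values())
```

Each merged entry remembers which engines returned it and at which positions, which is the information a simple frequency-and-position score needs.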

This architecture is what allows SearXNG to be highly resource-efficient, as it offloads the heavy lifting of web indexing to the major providers. For a deeper look at the implementation, refer to the guide “Architecting a Sovereign Search Engine: High-Performance SearXNG Deployment”.

3. Under the Hood: The Metasearch Pipeline

The transition from a user’s query to a structured list of results follows a rigorous, multi-stage pipeline designed for speed and anonymity. Unlike a traditional engine that fetches data from a local database, SearXNG orchestrates several external network calls simultaneously, making its internal logic more akin to a high-performance proxy.

Step-by-Step Data Flow

- Request Reception: The pipeline begins when the instance receives a query via the web interface or API.

- Engine Dispatch: Based on the selected category, SearXNG identifies all active engines in settings.yml.

- Concurrent Retrieval: The engine triggers dedicated Python modules for each source. These run in parallel to minimize latency, governed by a global timeout to prevent slow sources from hanging the request.

- Response Parsing & Normalization: External results are parsed into a standard internal format containing the title, URL, and snippet.

- Deduplication & Scoring: Duplicate URLs are merged, and a weighted score is applied based on individual engine “weights”.

- Delivery: Results are delivered in the requested format (HTML, JSON, CSV, or RSS).
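To make the concurrent retrieval step concrete, here is a minimal sketch of parallel dispatch with a global timeout. It uses `asyncio` purely for illustration, and the project's own dispatch code may be organized differently. The `query_engine` function is a hypothetical stand-in that sleeps instead of making a network call.

```python
import asyncio


async def query_engine(name: str, delay: float) -> list[dict]:
    """Stand-in for one engine module: sleeps instead of doing network I/O."""
    await asyncio.sleep(delay)
    return [{"engine": name, "url": f"https://example.org/{name}", "title": name}]


async def dispatch(engines: dict[str, float], timeout: float) -> tuple[list[dict], list[str]]:
    """Query all engines in parallel; engines that miss the deadline are dropped."""
    tasks = {
        name: asyncio.create_task(query_engine(name, delay))
        for name, delay in engines.items()
    }
    done, pending = await asyncio.wait(tasks.values(), timeout=timeout)
    for task in pending:
        task.cancel()
    # Let cancelled tasks finish unwinding before returning.
    await asyncio.gather(*pending, return_exceptions=True)

    results: list[dict] = []
    unresponsive: list[str] = []
    for name, task in tasks.items():
        if task in done and task.exception() is None:
            results.extend(task.result())
        else:
            unresponsive.append(name)
    return results, unresponsive
```

Calling `asyncio.run(dispatch({"fast": 0.01, "slow": 5.0}, timeout=0.5))` returns the results from `fast` and reports `slow` as unresponsive, so one slow source cannot hold up the whole request.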

Diverse Retrieval Methods

To interact with diverse data sources, SearXNG utilizes three primary strategies:

- HTML Scraping: Used for engines without public APIs. The engine fetches the HTML page and uses CSS selectors or XPath to extract results. While powerful, this method is sensitive to layout changes.

- JSON/REST Endpoints: Whenever possible, SearXNG targets structured endpoints for higher reliability and efficiency.

- Official APIs & Feeds: For sources like Wikipedia or Reddit, SearXNG uses official public APIs or RSS feeds to ensure stability. Learn how to configure SearXNG for better search results to optimize these connections.

The Ranking Myth

It is a common misconception that SearXNG performs complex AI-driven ranking. In reality, SearXNG prioritizes transparency over opaque algorithms. The order of results is primarily determined by the position in the source engine, the number of engines returning the result, and the manual weight assigned by the administrator.
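The three factors named above (source position, number of engines, administrator weight) can be combined in a very small function. This is a simplified sketch under the assumption that weights multiply and that each position contributes inversely to the score; the exact formula in the project may differ.

```python
def score_result(positions_by_engine: dict[str, int],
                 engine_weights: dict[str, float]) -> float:
    """Score one merged result from its per-engine positions (1 = top hit).

    Engines missing from engine_weights default to a weight of 1.0.
    """
    weight = 1.0
    for engine in positions_by_engine:
        weight *= engine_weights.get(engine, 1.0)
    # A result confirmed by several engines is boosted by the engine count.
    weight *= len(positions_by_engine)
    # Higher positions (smaller numbers) contribute more to the score.
    return sum(weight / position for position in positions_by_engine.values())
```

With default weights, a result ranked first by one engine and second by another scores `2/1 + 2/2 = 3.0`, while a result ranked first by a single engine scores `1.0`. The ordering can be traced by hand, which is the transparency the section describes.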

4. Deployment Models: From Personal to Production

In 2026, the SearXNG community has moved toward containerization as the standard for maintaining sovereign search infrastructure. While Docker is the most prominent recommendation for most users, the software remains highly flexible, allowing for deployment on a variety of architectures depending on the required scale and performance.

Resource Requirements and Scaling

SearXNG is remarkably efficient, but its resource footprint scales with query concurrency and the number of engines enabled, both of which rise quickly once AI workflows start issuing searches.

- Personal Instance: A single-user setup typically requires only 512MB to 1GB of RAM.

- Production/AI Instance: For high-concurrency environments or instances serving as a retrieval layer for local LLMs, 2GB of RAM is recommended to handle the overhead of parallel request processing and caching.

- CPU Overhead: Since the engine is primarily I/O-bound, even a modest dual-core CPU is sufficient for most self-hosting scenarios.

Deployment Methods

Choosing a deployment model depends on your familiarity with Linux systems and your specific infrastructure needs.

- The Container Standard: Deploying via Docker or Podman, as covered in the guide “Architecting a Sovereign Search Engine: High-Performance SearXNG Deployment”, is the preferred method for 2026. It isolates Python dependencies and allows for easy updates of engine selectors.

- Native Python + uWSGI: For bare-metal performance enthusiasts, SearXNG can be run directly as a Python application behind the uWSGI application server.

- Cloud & Kubernetes: For organizations requiring high availability, SearXNG is often deployed in Kubernetes clusters to distribute load across multiple nodes.

Infrastructure Synergy: The Role of Redis

While SearXNG is stateless, integrating Redis is critical for a production-grade experience.

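- Query Caching: Caching the results of recent queries lets the instance serve frequent searches without triggering new outbound requests to source engines. Stock SearXNG uses Redis (Valkey in recent releases) mainly as the limiter backend rather than as a result cache, so this cache is usually added as a separate layer in front of the instance.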

- Rate Limiting: It acts as the backend for the “Limiter” plugin, protecting your instance from being overwhelmed by bot traffic or recursive AI agent loops.

- Reliability: By reducing the total volume of outbound requests, Redis significantly lowers the risk of your server’s IP being flagged or blacklisted by providers like Google.
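The caching idea can be illustrated with a small time-to-live cache. This is a sketch only: an in-process dictionary stands in for Redis, and the `TTLCache` class and its `get_or_fetch` method are hypothetical names, not part of SearXNG.

```python
import time
from typing import Callable


class TTLCache:
    """Tiny time-to-live cache; a dict stands in for an external store."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store: dict[str, tuple[float, list]] = {}

    def get_or_fetch(self, query: str, fetch: Callable[[str], list]) -> list:
        # Normalize case and whitespace so trivially different queries share an entry.
        key = " ".join(query.lower().split())
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]
        results = fetch(query)  # cache miss: this is the outbound request
        self._store[key] = (now, results)
        return results
```

Every cache hit is one fewer outbound request, which is how caching lowers the request volume that upstream engines see from your IP.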

5. Privacy Engineering: Software vs. Infrastructure

A critical distinction for any administrator is the difference between the privacy of the software and the privacy of the infrastructure. While the application is designed to be a “zero-knowledge” gateway, its effectiveness depends entirely on the server environment.

Application-Level Statelessness

By design, the SearXNG software is engineered to be stateless and anti-tracking:

- No User Databases: The software does not include a database to store user profiles, search history, or persistent preferences.

- Client-Side Storage: User settings are typically stored in the user’s browser via cookies or encoded into the URL, ensuring the server “forgets” the user between sessions.

- Query Obfuscation: SearXNG issues its own outbound request to each engine rather than relaying the user’s, so the user’s IP address and original browser headers are never forwarded; the engine sees the server’s IP address and a generated User-Agent instead.

The Infrastructure Leak

Even with “clean” software, the surrounding stack often captures sensitive data by default:

- Reverse Proxy Logs: Standard configurations for Nginx or Traefik log the IP address and the full request URL for every hit.

- Container Verbosity: Docker’s standard output can capture request logs that are stored on the host’s disk.

- Upstream Visibility: Without a VPN or proxy, outbound requests to engines still originate from your server’s IP, which can lead to IP-based profiling by destination engines.

To achieve true sovereignty, you must explicitly disable access logs in your reverse proxy and set the Docker logging driver to none for the SearXNG container.

6. The Anti-Bot Arms Race

Operating an instance is an exercise in managing IP reputation. Because many engines—most notably Google—actively discourage scraping, self-hosters must navigate an evolving landscape of anti-bot measures.

Blocking and Throttling

Destination engines utilize several signals to identify metasearch traffic:

- IP Fingerprinting: Traffic from known datacenter ranges is often greeted with CAPTCHAs or immediate blocks.

- Request Patterns: A sudden burst of high-concurrency requests originating from a single IP is a primary trigger for rate-limiting.

The Evolution of the Gateway

While standalone tools like Filtron were once common for rate limiting, modern architectures have shifted:

- Integrated Limiters: SearXNG now includes robust internal plugins for rate limiting and bot detection.

- Reverse Proxy Dominance: Most administrators rely on advanced Nginx or Caddy configurations to handle security headers and filtering.

- Rotating Proxies: For high-concurrency AI workflows, spreading outbound requests across a pool of residential proxies is often the most reliable way to avoid upstream blocking.
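Proxy rotation itself is simple to reason about. The sketch below shows round-robin selection that skips proxies flagged as blocked. The `ProxyPool` class is hypothetical, and how a selected proxy is wired into outbound requests depends on your setup.

```python
import itertools


class ProxyPool:
    """Round-robin proxy selection that skips proxies marked as blocked."""

    def __init__(self, proxies: list[str]):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._proxies = list(proxies)
        self._blocked: set[str] = set()
        self._cycle = itertools.cycle(self._proxies)

    def mark_blocked(self, proxy: str) -> None:
        """Record that an upstream engine has started rejecting this proxy."""
        self._blocked.add(proxy)

    def next_proxy(self) -> str:
        # Try each proxy at most once per call before giving up.
        for _ in range(len(self._proxies)):
            candidate = next(self._cycle)
            if candidate not in self._blocked:
                return candidate
        raise RuntimeError("all proxies are marked as blocked")
```

A production pool would also expire blocks after a cool-down period; that is left out here to keep the rotation logic visible.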

7. SearXNG as the Retrieval Layer for AI and RAG

By 2026, SearXNG has evolved from a privacy tool for humans into a mission-critical infrastructure component for the local AI ecosystem. As developers move away from centralized search APIs due to escalating costs and data leakage concerns, SearXNG has emerged as the preferred retrieval mechanism for autonomous agents and Retrieval-Augmented Generation (RAG) pipelines.

Hallucination Mitigation and Grounding

The primary challenge of Large Language Models (LLMs) remains their tendency to hallucinate when their training data is outdated or insufficient. SearXNG helps mitigate this by providing “Real-time Grounding”: by performing a live search before generating a response, an AI agent can inject current web context into the prompt. SearXNG does not verify the pages it returns, so to assess the quality of the retrieved data, developers can use the benchmarks compared in “FRAMES vs Seal-0: Which Benchmark Should You Use to Evaluate Your RAG AI” to measure the impact of search quality on AI accuracy.

The RAG Pipeline Architecture

In a modern sovereign search stack, SearXNG acts as the primary “Retriever” in a multi-stage pipeline:

- Orchestration: A framework like LangChain or an agent receives a query and dispatches it to SearXNG.

- Context Ingestion: SearXNG returns a set of URLs and snippets.

- Vectorization: The content of these pages is scraped, chunked, and stored in a vector database that serves as semantic memory.

- Synthesis: The LLM queries the vector database to find the most relevant “truth” and synthesizes a cited answer.
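The four stages above can be sketched end to end without any external services. In this simplified example, keyword overlap stands in for embedding similarity and an in-memory list stands in for the vector database; both substitutions are assumptions made to keep the sketch runnable, and all function names are hypothetical.

```python
import re


def chunk_text(text: str, max_words: int = 50) -> list[str]:
    """Split page text into fixed-size word chunks."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(query: str, chunk: str) -> int:
    """Crude relevance: shared tokens (a stand-in for vector similarity)."""
    return len(tokenize(query) & tokenize(chunk))


def build_prompt(query: str, documents: dict[str, str], top_k: int = 2) -> str:
    """Rank chunks from retrieved pages and assemble a prompt with numbered sources."""
    scored = []
    for url, text in documents.items():
        for chunk in chunk_text(text):
            scored.append((overlap_score(query, chunk), url, chunk))
    scored.sort(key=lambda item: item[0], reverse=True)
    context = "\n".join(
        f"[{i}] {chunk} (source: {url})"
        for i, (_, url, chunk) in enumerate(scored[:top_k], start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{context}\nQuestion: {query}"
    )
```

Here `documents` maps each URL returned by the retriever to its scraped text. Keeping the URL attached to every chunk is what lets the model produce a cited answer.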

The JSON Mandate for Agentic Workflows

For machine-to-machine communication, the standard HTML interface of SearXNG is insufficient. Administrators must enable the JSON output in the settings.yml file to allow AI agents to parse search results programmatically.

    search:
      formats:
        - html
        - json  # Critical for RAG and AI integrations
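Once JSON output is enabled, an agent can query the instance over plain HTTP. The following is a minimal standard-library client sketch. The base URL is a placeholder for your own instance, and the `title`, `url`, and `content` fields read from each result are the ones assumed here, so check them against a real response from your deployment.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def build_search_url(base_url: str, query: str, categories: str = "general") -> str:
    """Build a /search URL that requests JSON output."""
    params = urlencode({"q": query, "format": "json", "categories": categories})
    return f"{base_url.rstrip('/')}/search?{params}"


def parse_results(payload: str, limit: int = 5) -> list[dict]:
    """Reduce the JSON response to the fields an agent typically needs."""
    data = json.loads(payload)
    return [
        {
            "title": item.get("title", ""),
            "url": item.get("url", ""),
            "snippet": item.get("content", ""),
        }
        for item in data.get("results", [])[:limit]
    ]


def search(base_url: str, query: str, timeout: float = 10.0) -> list[dict]:
    """Perform the HTTP request (requires a reachable instance with JSON enabled)."""
    with urlopen(build_search_url(base_url, query), timeout=timeout) as response:
        return parse_results(response.read().decode("utf-8"))
```

For example, `search("http://localhost:8080", "rag pipeline")` would return up to five title/URL/snippet dicts. If `json` is missing from `formats`, the request is rejected rather than answered with JSON.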

8. Ecosystem Integrations: Building the Local AI Stack

The power of SearXNG in 2026 lies in its interoperability with other self-hosted AI tools.

UI and Model Integration

Most advanced users now combine SearXNG with Open WebUI running in Docker, which provides a native toggle for web search. In these setups, users can configure multiple inference engines in Open WebUI, for example routing a general query to Llama 3 while using a specialized research agent for SearXNG-backed deep dives.

The MCP Shift

The adoption of the Model Context Protocol (MCP) has further streamlined these integrations. By pairing MCP with Ollama, vLLM, and Open WebUI, developers can create standardized “tools” that allow any MCP-compliant agent to perform web searches via SearXNG without custom coding for each model.
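To give a feel for what such a tool looks like, here is a rough sketch of a search tool descriptor and its handler as plain Python data. The descriptor fields (`name`, `description`, `inputSchema`) and the result shape (`content` items of type `text`, plus `isError`) follow the MCP tool structures as understood here. The tool name `web_search` and the injected `search_fn` are hypothetical, and a real server would use an MCP SDK to handle transport and registration.

```python
from typing import Callable

# Hypothetical tool descriptor, shaped like an MCP tool definition.
SEARCH_TOOL = {
    "name": "web_search",
    "description": "Search the web through a self-hosted SearXNG instance.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "query": {"type": "string", "description": "Search terms"},
        },
        "required": ["query"],
    },
}


def text_result(text: str, is_error: bool = False) -> dict:
    """Wrap plain text in a tool-result structure."""
    return {"isError": is_error, "content": [{"type": "text", "text": text}]}


def handle_tool_call(name: str, arguments: dict,
                     search_fn: Callable[[str], list[dict]]) -> dict:
    """Validate a tool call and format search hits as one text block."""
    if name != SEARCH_TOOL["name"]:
        return text_result(f"Unknown tool: {name}", is_error=True)
    query = str(arguments.get("query", "")).strip()
    if not query:
        return text_result("Missing required argument: query", is_error=True)
    lines = [f"{hit['title']} - {hit['url']}" for hit in search_fn(query)]
    return text_result("\n".join(lines))
```

Because the search backend is passed in as `search_fn`, the same handler works with any client that returns a list of dicts containing `title` and `url` keys.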

9. Comparison: SearXNG vs. Kagi and Managed Approaches

The search landscape of 2026 presents a fundamental choice between the “Sovereign DIY” model and “Premium Search-as-a-Service”. While both paths offer an escape from the ad-saturated index of traditional giants, they represent opposite ends of the trade-off between control and convenience.

The Sovereignty Trade-off

Choosing between these tools depends on whether your primary constraint is time or financial investment.

- Kagi (The Polished Product): Kagi provides a high-quality, ad-free experience out of the box for a monthly subscription fee. It relies on proprietary filtering and a collaborative “Leaderboard” to rank domains. However, it remains a centralized service; your queries still pass through their infrastructure.

- SearXNG (The Infrastructure): SearXNG is free and open-source, allowing for total data ownership. As detailed in “Comparison: can free SearXNG rival paid Kagi?”, a well-tuned SearXNG instance can replicate much of Kagi’s utility by using custom weights in settings.yml to boost trusted domains like GitHub or Wikipedia.

Reliability vs. Cost

For developers building AI products, the “API Tax” is a major consideration.

- Managed APIs: Official search APIs (Google, Bing, or Kagi’s API) provide high reliability and uptime but come with per-query costs that can scale aggressively for high-volume RAG applications.

- Sovereign Retrieval: SearXNG allows for unlimited queries at the cost of server maintenance and IP reputation management. While it requires more effort to bypass bot detection, it offers a “fixed-cost” infrastructure that is essential for experimental or high-volume research agents.
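The trade-off comes down to simple arithmetic: a per-query price grows linearly with volume, while a self-hosted instance costs roughly the same regardless of volume. The helper below computes the break-even point. Any numbers you plug in are your own, and the figures in the usage note are purely hypothetical placeholders, not real prices.

```python
def managed_monthly_cost(queries: int, price_per_1000: float) -> float:
    """Monthly bill for a metered API at a flat per-1000-queries price."""
    return queries / 1000 * price_per_1000


def break_even_queries(fixed_monthly_cost: float, price_per_1000: float) -> float:
    """Monthly query volume at which a metered API costs as much as a fixed-cost server."""
    if price_per_1000 <= 0:
        raise ValueError("price_per_1000 must be positive")
    return fixed_monthly_cost / price_per_1000 * 1000
```

With a hypothetical 20.00 per month for hosting and proxies against a hypothetical 5.00 per 1,000 queries, `break_even_queries(20.0, 5.0)` gives 4,000 queries per month. The fixed cost should include the maintenance time discussed in the next section, which this sketch does not price in.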

| Criteria | Kagi (Managed) | SearXNG (Sovereign) |
|---|---|---|
| Business Model | Subscription-based (SaaS) | Open Source & Self-hosted |
| AI & API Access | Paid, rate-limited API access | Native, unlimited JSON API |
| Privacy Model | Managed trust (Centralized) | Zero-trust (Total infrastructure control) |
| Operational Stability | High (Official API reliance) | Variable (Sensitive to IP reputation) |
| RAG & Agent Utility | Limited local pipeline integration | Optimized for local RAG and agentic workflows |
| Customization | Community-driven Leaderboard | Granular YAML and engine weighting |

10. Limitations and Maintenance Overhead

Despite its power, SearXNG is not a “set-and-forget” appliance. It requires an ongoing commitment to maintenance, often referred to as the “Sysadmin Tax”.

- The Fragility of Scraping: Because many engines are accessed via HTML scraping, updates to the source website’s layout can break specific SearXNG modules instantly.

- Upstream Maintenance: Administrators must track the SearXNG GitHub repository for frequent updates to engine selectors to maintain search quality.

- Result Consistency: Since results depend on the health of dozens of third-party engines, search quality can fluctuate if major providers like Google increase their blocking measures against your specific IP.
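Part of this maintenance can be automated by watching which engines fail. The sketch below assumes that the JSON response carries an `unresponsive_engines` list of `[engine, reason]` pairs. Verify that field against your own instance before relying on it. The function names are hypothetical.

```python
import json
from collections import Counter


def unresponsive_engines(payload: str) -> dict[str, str]:
    """Map engine name to failure reason for one JSON search response."""
    data = json.loads(payload)
    return {
        entry[0]: entry[1]
        for entry in data.get("unresponsive_engines", [])
        if len(entry) >= 2
    }


def failure_report(payloads: list[str], min_failures: int = 2) -> list[str]:
    """Return engines that failed in at least min_failures of the sampled responses."""
    counts: Counter[str] = Counter()
    for payload in payloads:
        counts.update(unresponsive_engines(payload).keys())
    return sorted(name for name, n in counts.items() if n >= min_failures)
```

Running a handful of canary queries on a schedule and feeding the responses to `failure_report` separates a one-off timeout from an engine that is persistently broken or blocked.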

11. Conclusion: The Strategic Future of Retrieval

In 2026, the ability to find information is no longer just a luxury—it is a core utility for both humans and AI. SearXNG has proven that we do not have to accept the “black box” approach of centralized search engines.

By mastering the retrieval layer, you transition from being a passive consumer to an architect of your own information environment. Whether you are building a private RAG pipeline or simply seeking a cleaner web, sovereign search infrastructure is the only way to ensure that your digital discoveries remain your own.
