The Millisecond War: Decoding LLM Inference Performance in 2026

In the 2026 AI ecosystem, raw model intelligence is no longer the sole metric of success; generation speed, measured in…

Machine Learning, Generative AI, AI in Business, AI Tools & Frameworks

The rapid integration of Large Language Models (LLMs) into the corporate workflow has birthed a significant security paradox: how can…

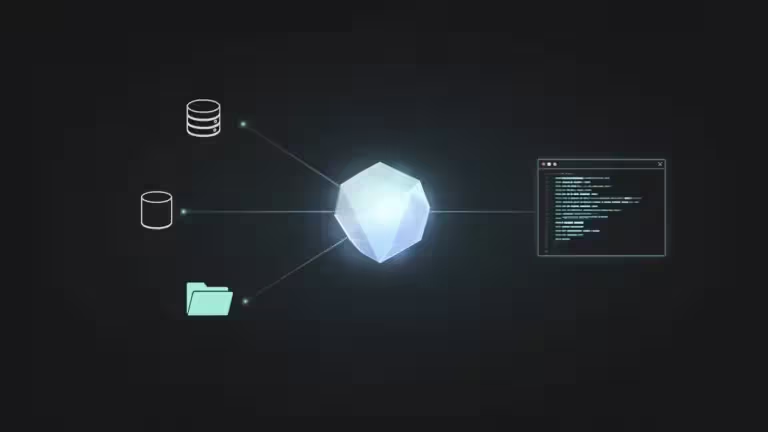

The architectural landscape of artificial intelligence is shifting from static chat interfaces to dynamic, action-oriented systems. With the maturation of…

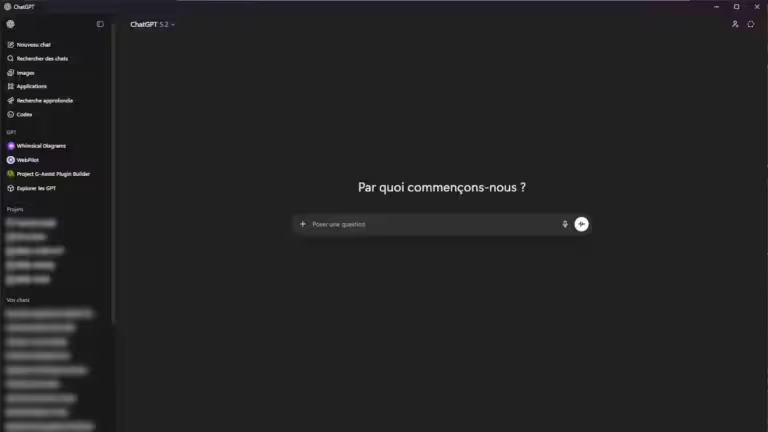

The ChatGPT Windows application has evolved far beyond a mere Electron wrapper of the web interface. In 2026, it positions…

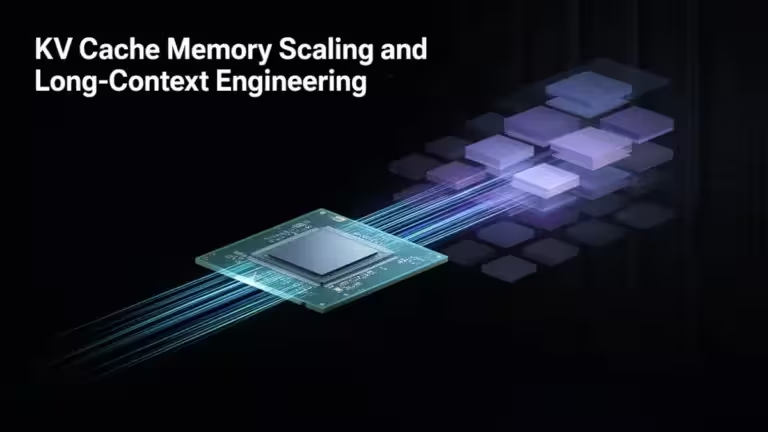

AI inference throughput defines how many tokens per second a system can process under sustained load, while latency measures how…
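
The teaser above is cut off before it finishes defining latency, so this note does not try to complete that definition. As a minimal sketch, assuming per-request timestamps are available from a shared clock, throughput under load can be estimated as generated tokens divided by the wall-clock window that covers all requests. The sketch pairs that with two common latency conventions, time to first token (TTFT) and end-to-end time. The `RequestTrace` record and the helper names are hypothetical. Counting only output tokens, and not prompt tokens, is also an assumption.

```python
from dataclasses import dataclass


@dataclass
class RequestTrace:
    # Hypothetical per-request record; all times are seconds on a shared clock.
    start: float        # request submitted
    first_token: float  # first output token emitted
    end: float          # last output token emitted
    output_tokens: int  # number of generated tokens (prompt tokens not counted)


def throughput_tokens_per_s(traces: list[RequestTrace]) -> float:
    """Total generated tokens divided by the wall-clock window spanning all requests."""
    window = max(t.end for t in traces) - min(t.start for t in traces)
    total_tokens = sum(t.output_tokens for t in traces)
    return total_tokens / window


def ttft_s(trace: RequestTrace) -> float:
    """Time to first token: delay between submission and the first generated token."""
    return trace.first_token - trace.start


def e2e_s(trace: RequestTrace) -> float:
    """End-to-end latency: delay between submission and the last generated token."""
    return trace.end - trace.start


if __name__ == "__main__":
    # Two overlapping requests with made-up timings, for illustration only.
    traces = [RequestTrace(0.0, 0.2, 2.0, 100), RequestTrace(0.5, 0.8, 2.5, 150)]
    print(throughput_tokens_per_s(traces))  # 250 tokens over a 2.5 s window -> 100.0
    print([round(ttft_s(t), 3) for t in traces], [round(e2e_s(t), 3) for t in traces])
```

Because the requests overlap, aggregate throughput (100 tokens/s here) can rise with concurrency even while each request's own latency stays the same or grows. That is why the two numbers are usually reported separately.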

KV cache memory scaling has become a central engineering constraint in 2026 as long-context models move from 128K to 1M…
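
To show why the move from 128K to 1M tokens strains memory, here is a back-of-the-envelope sketch that uses the standard KV cache size estimate for a decoder-only transformer. Every layer stores one key vector and one value vector per KV head for each token. The model shape (80 layers, 8 KV heads, head dimension 128) is hypothetical. The 2-byte element size assumes an fp16/bf16 cache. Reading "128K" and "1M" as 128 × 1024 and 1024 × 1024 tokens is also an assumption.

```python
GIB = 1024 ** 3


def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    seq_len: int,
    batch_size: int = 1,
    bytes_per_elem: int = 2,  # assumes fp16/bf16 cache entries
) -> int:
    """Estimate KV cache size in bytes.

    The leading factor of 2 accounts for storing both a key and a value
    vector per KV head, per layer, per token.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


if __name__ == "__main__":
    # Hypothetical model shape, chosen for illustration only.
    cfg = dict(num_layers=80, num_kv_heads=8, head_dim=128)
    for label, tokens in [("128K", 128 * 1024), ("1M", 1024 * 1024)]:
        print(label, kv_cache_bytes(seq_len=tokens, **cfg) / GIB, "GiB")
    # Expected output:
    # 128K 40.0 GiB
    # 1M 320.0 GiB
```

Under these assumptions, each token costs a fixed 320 KiB, so cache memory grows linearly with context length. An 8x longer context means an 8x larger cache for every concurrent sequence, before counting model weights.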