Highlights

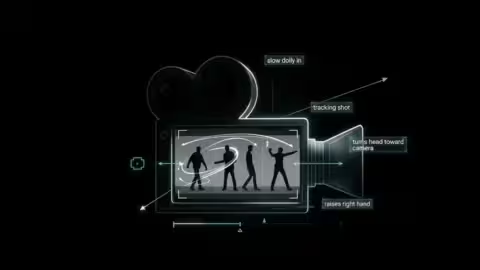

Prompting LTX 2.3: The Techniques That Actually Work

LTX 2.3 is capable of producing impressive videos locally, but it is also far more demanding than most image generation models. Many users quickly discover that prompts that work perfectly…

OmniNFT for LTX-2.3: Alignment via reinforcement learning

The LTX-2.3-OmniNFT-RL-Lora_bf16.safetensors file, hosted on Kijai’s ComfyUI repository, is drawing significant curiosity from the open-source AI video community. Far from being a standard artistic style filter, this adapter introduces an advanced optimization framework…

Why Your Video Player is Sabotaging Your Production (And What to Choose in 2026)

I. The Video Playback Paradox: Fidelity vs. Complacency In the world of broadcasting, a good player is one that manages to play a corrupted or poorly encoded file without stopping….

The Sora Sunset: Decoding OpenAI’s Strategic Retreat from Generative Video

The sudden discontinuation of Sora in early 2026 marks a watershed moment for the generative AI industry. Once hailed as the “Hollywood-killer” during its viral unveiling, the model has been…

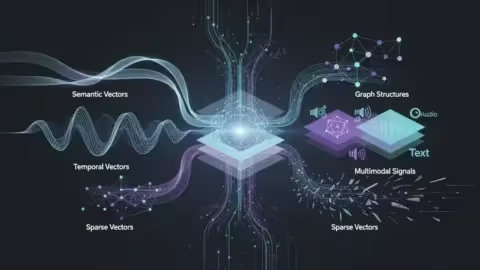

Beyond semantic search: Architecting the multi-vector RAG stack in 2026

In the early days of the generative AI boom, semantic search was hailed as the definitive solution for knowledge retrieval. By 2026, however, the industry has reached a “vector plateau”….

Local AI Video on RTX: Mastering the NVIDIA 3D-to-Diffusion Workflow

Local AI video generation in 2026 is undergoing a radical structural shift. For too long, creators have been held hostage by the “slot machine” nature of Text-to-Video (T2V) and Image-to-Video…

RTX Video Super Resolution in ComfyUI: Neural Upscaling at the Service of Creative Workflow

The challenge of scaling in artificial intelligence workflows has long relied on a frustrating compromise between execution speed and visual fidelity. Traditionally, ComfyUI users had to choose between resource-hungry diffusion…

The LLM-Ready Web: A Battle of Semantic Extraction (Firecrawl vs. Crawl4AI)

In the race to build production-grade RAG systems, architects often overlook the most critical failure point: the data ingestion layer. While LLMs are scaling in reasoning capabilities, they are still…

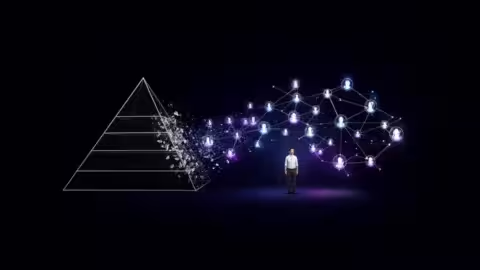

The AI-First Organization: From Hierarchical Control to Agent-Driven Symbiosis

The year 2026 marks a definitive inflection point in corporate evolution. We are witnessing the transition from “AI-augmented” legacy firms to the AI-first organization. This shift is not merely a digital…

The Architectural Ceiling: Why Gemini 3.1 Pro and Claude 4.6 Opus Diverge on Output Length

In 2026’s high-stakes Large Language Model landscape, a structural divergence has become a primary friction point for power users: the “Output Length Gap.” While Google’s Gemini 3.1 Pro (released Feb 19, 2026)…

Best GPU for Local AI in 2026: Deep Dive on VRAM, Energy, and Real Benchmarks

Running large language models on your own hardware has reached a critical inflection point in 2025-2026. This isn’t just a question of technical curiosity or cloud cost-cutting—it’s become a compliance,…

The Millisecond War: Decoding LLM Inference Performance in 2026

In the 2026 AI ecosystem, raw model intelligence is no longer the sole metric of success; generation speed, measured in tokens per second (token/s), has become the ultimate competitive frontier….

The illusion of the trust layer: securing LLM prompts through interception

The rapid integration of Large Language Models (LLMs) into the corporate workflow has birthed a significant security paradox: how can an organization leverage generative intelligence without its industrial secrets, proprietary…

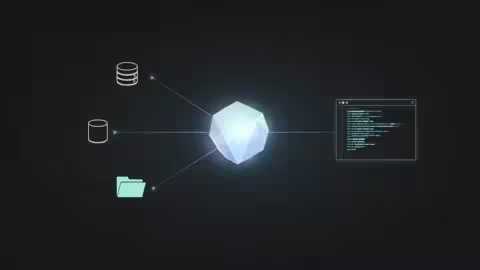

MCP servers in Claude Desktop and Cowork guide: local, remote, and secure architectures

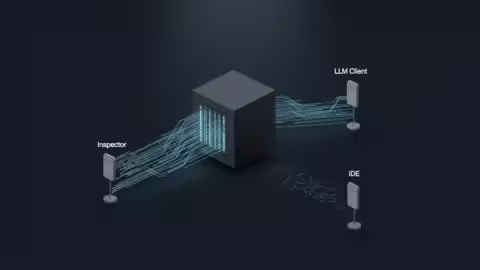

The architectural landscape of artificial intelligence is shifting from static chat interfaces to dynamic, action-oriented systems. With the maturation of the Model Context Protocol (MCP), Anthropic has transformed Claude Desktop into a central…

Testing MCP servers: protocol validation, transport correctness, and interoperability

The Model Context Protocol (MCP) has rapidly shifted from a nascent proposal to a critical standard for connecting Large Language Models (LLMs) to local and remote data sources. However, as…

Infrastructure guide: Cloud-native local orchestration

The strength of DDEV lies in its ability to transform your local machine into a micro-cloud. For a senior developer or DevOps engineer, understanding the handshake between the DNS request…

DDEV WordPress 403 Forbidden Fix: Traefik, Nginx, and Docroot Explained (2026)

The promise of modern local development is a “zero-config” abstraction that mirrors production. DDEV is often cited as the gold standard for this, providing a Docker-based orchestration that feels like…

How to distinguish multiple inference engines in Open WebUI?

Open WebUI serves as a sophisticated orchestration layer, capable of aggregating multiple backends into a unified interface. For AI infrastructure professionals managing hybrid environments—combining Ollama in Docker, vLLM on GPU clusters, and…

Discord Delays Age Verification Rollout to 2026 Amid Privacy Concerns

Discord has officially announced a delay in the global rollout of its mandatory age verification system, pushing the launch to the second half of 2026. This decision follows significant backlash…

Securing WSL mirrored networking: a technical deep dive into architecture and exposure

The release of networkingMode=mirrored for the Windows Subsystem for Linux (WSL), introduced with WSL 2.0.0 in the September 2023 update, represents a significant architectural shift from the traditional NAT-based virtualization. While NAT…