ChatGPT Timeline Explained: Key Releases from 2022 to 2025

ChatGPT’s evolution from 2022 to 2025 is not just a sequence of model releases, but the story of how modern AI systems adapt to growing global demand, hardware limitations, and new forms of reasoning. In just three years, ChatGPT has expanded from a GPT-3.5 conversational prototype to a multimodal, dynamically routed assistant embedded across software platforms, enterprise workflows, and consumer devices.

Understanding this timeline is essential today. The rise of GPT-4, GPT-4o, the o-series, GPT-5, and most recently GPT-5.1 reflects deeper architectural transitions, shifting from dense transformers to fused multimodal models and selective reasoning pathways. These changes unfolded alongside increasing compute pressure, as the global GPU shortage pushed providers toward more efficient inference strategies and hybrid cloud plus local execution models.

This article provides a clear, technically grounded view of every major ChatGPT version, how each model reshaped capability, and why inference efficiency now matters as much as raw performance. It is designed for developers, researchers, and professionals who need an authoritative timeline spanning 2022 to 2025 — and a forward-looking sense of where ChatGPT is heading next.

| Model Version | Release Date | Status | Short Description |

|---|---|---|---|

| GPT-3.5 | Nov 2022 | Discontinued | First model used in the initial public release of ChatGPT. |

| GPT-4 | Mar 2023 | Discontinued | Major improvement in reasoning, reliability, and safety; introduced with ChatGPT Plus. |

| GPT-4o | May 2024 | Legacy Support | Unified multimodal model handling text, images, audio, and video with low latency. |

| GPT-4o mini | Jul 2024 | Discontinued | Lighter, cheaper version of GPT-4o; replaced GPT-3.5 in ChatGPT. |

| o1-preview | Sep 2024 | Discontinued | Early preview of the first OpenAI model designed for explicit reasoning steps. |

| o1-mini | Sep 2024 | Discontinued | Smaller, faster variant of o1 optimized for cost and latency. |

| o1 | Dec 2024 | Discontinued | Full release of the explicit reasoning model; more stable and structured outputs. |

| o1-pro | Dec 2024 | Discontinued | High-compute version for deeper reasoning; offered to ChatGPT Pro users. |

| o3-mini | Jan 2025 | Discontinued | Successor to o1-mini focused on improved reasoning efficiency. |

| o3-mini-high | Jan 2025 | Discontinued | Higher-effort variant of o3-mini for more complex reasoning. |

| GPT-4.5 | Feb 2025 | Discontinued | Very large model, bridging GPT-4o to GPT-5; last non-chain-of-thought model. |

| GPT-4.1 | Apr 2025 | Legacy Support | More efficient successor to GPT-4, first released via API then added to ChatGPT. |

| GPT-4.1 mini | Apr 2025 | Discontinued | Lightweight variant of GPT-4.1; replaced GPT-4o mini in May 2025. |

| o3 | Apr 2025 | Legacy Support | Full version of o3, improving structured reasoning and response stability. |

| o4-mini | Apr 2025 | Legacy Support | Compact, efficient precursor to the o4 family; optimized for inference cost. |

| o4-mini-high | Apr 2025 | Discontinued | High-compute variant of o4-mini. |

| o3-pro | Jun 2025 | Discontinued | Pro-tier version of o3 with extended reasoning capabilities. |

| GPT-5 | Aug 7, 2025 | Legacy Support | Flagship model introducing adaptive routing (Instant/Thinking) and improved reasoning. |

| GPT-5.1 | Nov 12, 2025 | Active | More consistent reasoning, better instruction following, and user-aligned personalities. |

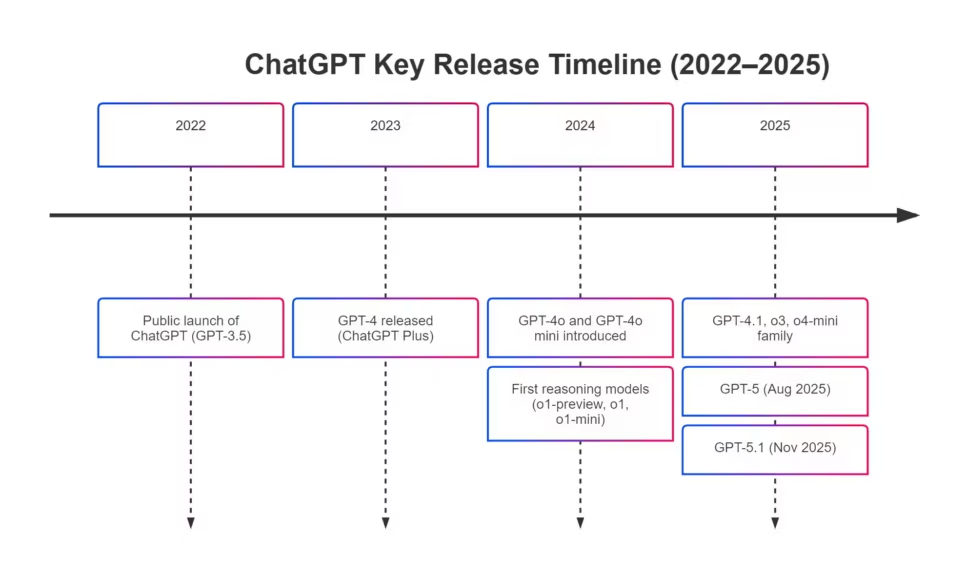

Major Milestones at a Glance (2022–2025)

Understanding when each version arrived, how it differed, and what architecture or reasoning upgrade powered it is essential for anyone working with LLMs, APIs, or multimodal systems. This section provides a concise chronological view that supports fast technical onboarding and accurate historical context for comparative evaluation.

1. The Launch of ChatGPT in 2022

1.1 Release date and initial context

ChatGPT was officially launched on November 30, 2022, using the GPT-3.5 family as its underlying model. The release followed several years of foundational transformer research, including GPT-1 (2018), GPT-2 (2019), and GPT-3 (2020). These earlier models provided the architectural basis for ChatGPT, with GPT-2 notably being downloadable and widely used for open-source experimentation. The public beta of ChatGPT marked a milestone: it brought large-scale generative transformers to mainstream use with an interface accessible to non-technical audiences.

GPT-3.5 itself was built on refinements of GPT-3’s dense transformer architecture, combined with supervised fine-tuning and instruction training. While limited by modern standards, it introduced practical instruction following, context-aware dialogue, and acceptable latency for broad deployment. ChatGPT rapidly gained one million users within five days, signaling unprecedented public interest in conversational AI.

1.2 Why GPT-3.5 mattered for adoption

GPT-3.5 delivered a turning point in usability due to three main factors: stable instruction following, low inference cost relative to larger models, and readiness for mainstream consumer workloads. These advantages explain why GPT-3.5 served as ChatGPT’s backbone for months and remained available until its deprecation in 2024, when GPT-4o mini became the new lightweight default.

2. The 2023 Breakthrough: GPT-4 and the First Major Upgrade

2.1 GPT-4 release and capabilities

OpenAI introduced GPT-4 on March 14, 2023, offering major gains in reasoning, safety alignment, and reliability. Released initially to ChatGPT Plus subscribers, GPT-4 represented a leap in complexity, with deeper attention layers, expanded hidden dimensions, and improved training data diversity. It excelled at structured reasoning tasks, exam-style prompts, and context-sensitive instructions, outperforming GPT-3.5 significantly on standardized evaluations.

While not explicitly multimodal in its first consumer release, GPT-4 set the foundation for subsequent vision-enabled systems integrated later that year. It also marked the arrival of higher-tier subscription plans and API segments tailored to professional users, establishing a pattern where more advanced models were gated behind premium offerings.

2.2 Early limitations and architectural constraints

Despite its impact, GPT-4 had notable constraints: high inference cost, significant VRAM requirements, and latency that limited real-time or interactive multimodal workloads. Its dense architecture offered predictable performance but scaled poorly for consumer-facing use during peak hours, hinting early at one of the core systemic challenges AI providers would face in 2024–2025—the growing mismatch between model size and global compute availability.

2.3 Relevance for developers

For engineers, GPT-4 established baseline expectations for precision, reliability, and safety. It became the model of choice for legal, medical, and analytical applications requiring predictable reasoning. Its architectural lineage continues to influence models like GPT-4.1 and GPT-5, particularly in alignment, safety scoring, and structured evaluation protocols.

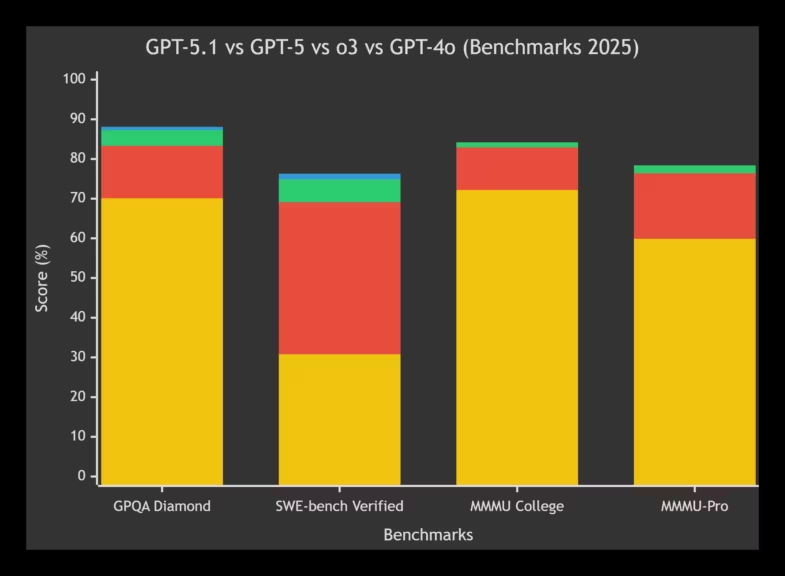

*GPT-4o in yellow, o3 in red, GPT-5 in green, GPT-5.1 in blue with partial data

3. Expansion in 2024: Multimodality, Mini Models, and the o-Series Preview

3.1 GPT-4o and the rise of integrated multimodality

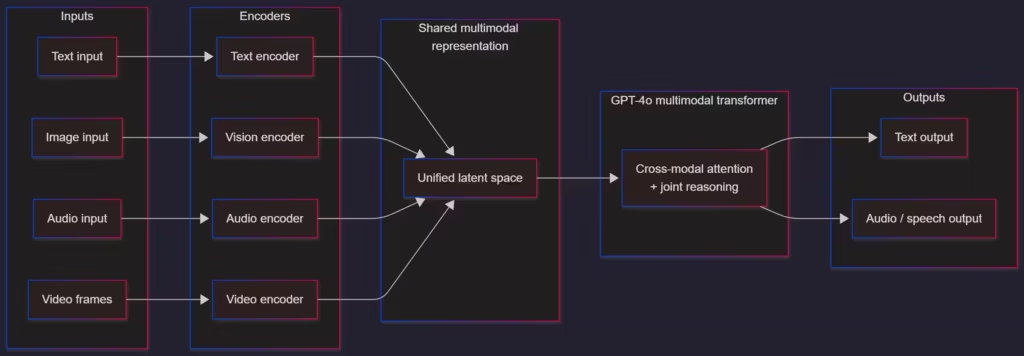

May 2024 introduced GPT-4o, a major architecture update that unified text, vision, audio, and video in a single multimodal model. GPT-4o was not simply GPT-4 with additional encoders but represented a more integrated architecture with fused attention layers and cross-modal representations. It offered significantly lower latency and improved throughput, enabling near real-time interaction—an essential capability for consumer applications, accessibility tools, and creative workflows.

GPT-4o also became available for free within usage limits, raising the baseline quality of ChatGPT’s free tier. Developers saw improved consistency in multimodal tasks like visual question answering, document parsing, and audio-based interactions.

3.2 GPT-4o mini and the end of GPT-3.5

Released in July 2024, GPT-4o mini replaced GPT-3.5 as the default lightweight model. It delivered lower inference cost, smaller context windows, and simpler reasoning capabilities compared to GPT-4o, but with substantial improvements over the retiring GPT-3.5. For API users and hobbyist developers, GPT-4o mini became a practical choice for inexpensive, high-volume workloads.

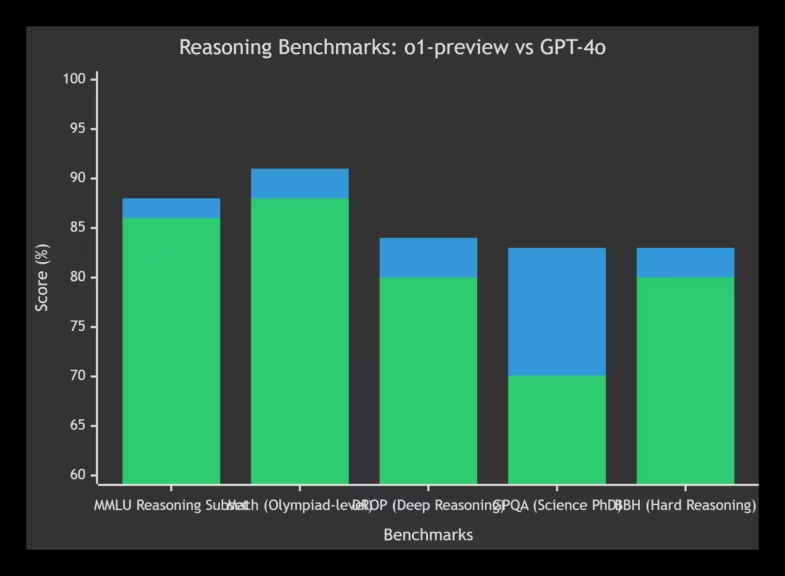

3.3 The o-series previews: o1-preview and o1-mini

In September 2024, OpenAI introduced o1-preview and o1-mini, two prototype models demonstrating explicit chain-of-thought reasoning controlled through reinforcement learning. Unlike GPT-4o, these models prioritized depth of reasoning over multimodality or speed. They delivered strong performance on tasks like mathematics, logic, and coding competitions, signalling a new branch of architectures optimized specifically for reasoning rather than general-purpose interaction.

However, both models were short-lived, serving as stepping stones toward the full o1 and later o3/o4-series models released in 2025.

This period marks the moment when large-scale multimodality and explicit reasoning began diverging as independent optimization goals. GPT-4o pushed latency and sensory integration forward, while the o-series explored deliberate reasoning depth under tight compute constraints, a split that would later be reconciled through GPT-5’s adaptive routing.

4. Consolidation in 2024–2025: From o1 to GPT-4.1 and Efficient Reasoning

4.1 The December 2024 releases: o1 and o1-pro

December 2024 marked a turning point with the release of o1 and o1-pro, the first stable reasoning-focused models. They emphasized structured problem solving, explicit reasoning traces, and system reliability under long-form logical tasks. The “pro” variant increased compute allocation for higher reasoning depth, targeting advanced users and professional subscribers.

These models introduced clearer compute scaling options and illustrated how future architectures could separate “fast inference” and “deep reasoning” pathways without requiring monolithic transformer stacks.

4.2 GPT-4.1 and GPT-4.1 mini in April–May 2025

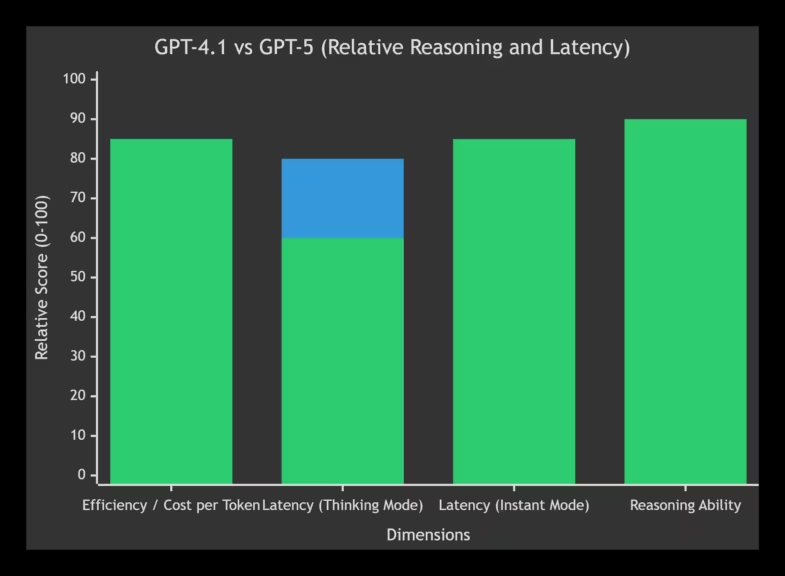

April 2025 introduced GPT-4.1 and GPT-4.1 mini, models that refined GPT-4o techniques while reducing cost and latency. GPT-4.1 became an intermediate step before GPT-5, offering improved consistency, updated safety layers, and better long-context manipulation. The mini version replaced GPT-4o mini in May 2025, highlighting a trend toward smaller, more cost-efficient models with competitive performance.

| Model | Year | Multimodality | Reasoning Ability | Speed / Latency | Cost / Efficiency | Availability | Key Notes |

|---|---|---|---|---|---|---|---|

| GPT-4 | 2023 | Partial (image via API only) | Strong but slower, high-depth reasoning | High latency, heavy compute load | Expensive to run | Legacy API model | Major quality breakthrough, strong reasoning, but computationally costly and not optimized for large-scale usage. |

| GPT-4.1 | 2025 | Improved multimodality but not fully unified | More stable and efficient than GPT-4 | Faster, optimized inference | Much more efficient than GPT-4 | API and later ChatGPT | A compact, cost-optimized evolution of GPT-4 designed for lower inference cost during GPU shortages. |

| GPT-4o | 2024 | Fully unified multimodal model (text, image, audio, video) | Very strong, though not as specialized as o-series | Very low latency, near real-time | Highly cost-effective | Available to all users (limits vary by plan) | First unified multimodal model, enabling natural, real-time interactions across modes with high efficiency. |

| *Differences Between GPT-4, GPT-4.1, and GPT-4o |

These models arrived during the first signs of industry-wide compute pressure. As demand outpaced GPU availability, OpenAI and other providers shifted focus from monolithic architectures to selective attention, optimized inference pathways, and controlled reasoning depth. This transition set the stage for GPT-5, where efficiency became a primary design constraint rather than a secondary optimization.

5. The 2025 Shift: GPT-5, GPT-5 Instant, and GPT-5.1 Thinking

5.1 GPT-5 release in August 2025

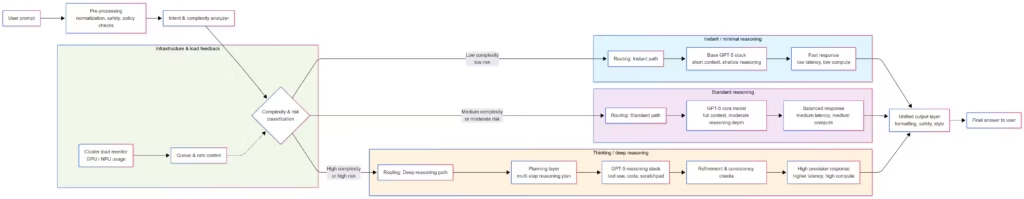

OpenAI released GPT-5 on August 7, 2025, consolidating all previous model families into a unified architecture available across both free and paid tiers. GPT-5 introduced three operating modes designed to optimize reasoning time according to user intent: GPT-5 Instant, GPT-5 Thinking, and GPT-5 Pro. The default variant, GPT-5 Auto, used an inference router capable of estimating prompt complexity and allocating appropriate reasoning depth.

Architecturally, GPT-5 built on the combined lineage of GPT-4o, GPT-4.1, and the o-series, incorporating selective-attention mechanisms, safety-alignment layers, and more dynamic reasoning pathways. Developers noted improvements in long-context stability, reduced hallucination rates, and more consistent cross-task performance.

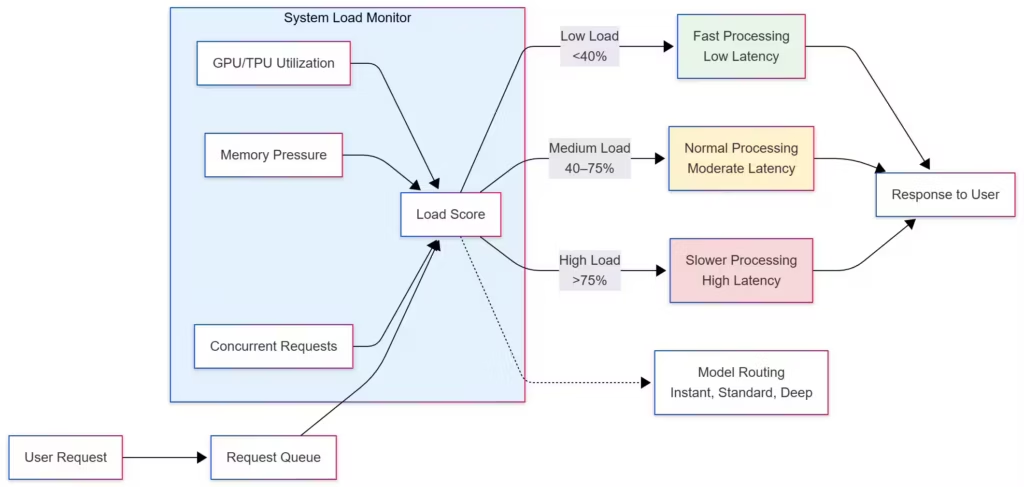

GPT-5 also arrived during a period of global GPU scarcity, meaning its rollout had to balance model scale with inference efficiency. Through routing-based reasoning, GPT-5 could reduce unnecessary compute expenditure during low-complexity prompts, indirectly helping providers manage peak demand.

- GPT-5 surpasses GPT-4.1 in virtually all reasoning benchmarks (GPQA, SWE-bench, math, multimodal).

- The actual difference is sometimes slight in simple tasks, but very pronounced in complex tasks.

- GPT-5 in Instant mode is faster than GPT-4.1 thanks to adaptive routing and pipeline optimization improvements.

- In Thinking mode, GPT-5 activates a deep reasoning pipeline → therefore slower than GPT-4.1.

- This is an accepted cost to obtain more structured responses.

- GPT-5 manages its inference cost better than GPT-4.1, despite its complexity.

- Essential in a context of GPU shortage.

GPT-5’s introduction of automatic reasoning selection was as much an engineering necessity as a usability improvement. By dynamically choosing between Instant, Thinking, and deeper reasoning paths, OpenAI reduced unnecessary compute overhead during simple interactions while reserving capacity for complex workloads, a crucial adaptation in a period of global GPU scarcity.

This adaptive system also explains why modern interactions may feel inconsistent in speed, a behavior examined in detail in independent analyses of 2025 compute constraints.

5.2 GPT-5 instant and resource-efficient workloads

Released alongside GPT-5, GPT-5 instant provided a cost-efficient fallback for users reaching usage limits or needing high-volume, moderate-precision workloads. Designed for fast responses and lower inference cost, GPT-5 instant extended the trend set by GPT-4o mini and GPT-4.1 mini, offering a highly optimized model suited to background tasks, automation pipelines, and embedded applications where full-scale reasoning was unnecessary.

5.3 GPT-5.1 in November 2025

On November 12, 2025, OpenAI launched GPT-5.1, emphasizing better instruction following, warmer conversational tone, and expanded personalisation options. While public details remain limited, GPT-5.1 introduced improvements in reasoning control, adaptive response generation, and refinement of the Auto selector, enhancing how the system chooses between fast inference and deep reasoning.

Developers observed incremental but meaningful gains in response stability, reduced jitter in reasoning modes, and improved performance on tasks requiring nuanced interpretation. GPT-5.1 also arrived with new configuration options enabling alternative conversational “personalities”, a feature aimed at consumer adoption and enterprise differentiation.

6. Architectural Evolution: How ChatGPT Changed Internally from 2022 to 2025

6.1 From dense transformers to multimodal fusion

The evolution from GPT-3.5 to GPT-5.1 reflects a series of deep architectural transitions. Early models like GPT-3.5 relied on dense, uniform transformer stacks optimized for general-purpose language tasks. By contrast, GPT-4o introduced a fully fused multimodal architecture capable of handling text, image, audio, and video in a unified representation space, reducing latency and enabling seamless cross-modal reasoning.

6.2 The emergence of explicit reasoning models

The o-series marked a deliberate shift toward explicit reasoning. Models like o1, o1-mini, and later o3-mini-high experimented with reinforcement learning to optimize chain-of-thought depth, enabling more controlled reasoning for mathematics, logic, and code synthesis. These models demonstrated that deeper reasoning does not always require larger transformers. Instead, controlled test-time compute allocation and optimized reasoning traces can significantly improve results on complex tasks.

This architectural philosophy influenced GPT-5, which blends implicit and explicit reasoning modes depending on prompt difficulty.

6.3 Selective attention and inference routing

GPT-4.1 introduced selective attention mechanisms that improved long-context reliability without proportionally increasing compute. GPT-5 expanded this with a router that dynamically adjusts reasoning depth. This not only improves user experience but helps providers manage infrastructure load during global traffic peaks.

These architectural shifts illustrate a broader industry trend: efficiency and controllable reasoning now matter more than raw model size.

(Click to enlarge)

7. Performance Constraints: GPU Shortage, Inference Cost, and Latency

7.1 The impact of global GPU scarcity

By 2024–2025, the rapid rise of multimodal and reasoning-focused models collided with global supply constraints on advanced GPUs, driven by limited CoWoS packaging capacity and HBM availability. Even large providers experienced performance fluctuations, especially during peak usage.

These constraints directly shaped ChatGPT’s evolution. Models needed to balance increased reasoning capability with efficient inference, and releases like GPT-4.1, GPT-5, and GPT-5.1 incorporated cost-saving techniques to accommodate infrastructure limits.

7.2 OpenAI’s multi-cloud strategy

OpenAI adopted a multi-cloud strategy, spreading workloads across Azure, AWS, and other providers to reduce dependence on any single infrastructure and mitigate regional slowdowns. While this improved reliability, it did not fully eliminate latency dips associated with global traffic surges, a problem noted across the industry.

7.3 Why ChatGPT feels slower despite model improvements

Recent ChatGPT versions have not become faster for end users. Instead, dynamic reasoning selection and compute routing can create the impression of speed improvements during simple tasks. However, during heavy load or complex queries, deeper reasoning pathways can introduce noticeable latency. Providers also adjust compute allocation depending on traffic, meaning quality and speed can vary throughout the day.

For a full explanation, see related analyses on compute scarcity, model slowdowns, and multi-cloud resource management.

Model evolution between 2024 and 2025 cannot be understood without acknowledging the hardware bottleneck shaping it. Each new release, from GPT-4.1 to GPT-5.1, embedded increasingly aggressive efficiency strategies, from speculative decoding to optimized KV cache layouts, reflecting the reality that model capability was now deeply intertwined with available infrastructure.

For a broader view of the infrastructure side, analyses of data center constraints in 2025 provide essential context for understanding model behavior under load.

8. Future Outlook: Beyond 2025 and Toward GPT-6

8.1 Early signals about GPT-6

GPT-6 does not yet have an official release date, but early indications point to major improvements in memory persistence, personalization, and adaptive reasoning. The next generation is expected to deepen integration with user preferences and long-term context, potentially expanding how AI can serve as a personalized assistant in both consumer and enterprise contexts.

8.2 Hybrid AI: local + cloud inference

The broader industry is converging toward hybrid execution: combining cloud-based LLMs with local inference on consumer hardware, NPUs, or edge accelerators. This shift is driven by cost pressures, privacy requirements, and the practicality of running lighter models locally while reserving deep reasoning tasks for cloud systems.

Enterprise strategies increasingly avoid dependence on a single provider, deploying internal models for sensitive workloads while using cloud reasoning on demand. This hybrid approach is already visible in 2025 and is likely to expand further as compute constraints persist.

8.3 Efficiency as the next frontier

The pace of raw performance improvements is slowing, and the leading differentiator is now inference efficiency. GPT-5.1 illustrates this shift, similar to Anthropic’s focus with Haiku 4.5. As efficiency rises, use cases will diversify: autonomous agents, deeper software integration, real-time multimodal assistants, and new forms of productivity tooling.

As NPUs and lightweight edge accelerators become more capable, hybrid execution models will allow a growing share of inference to shift onto local devices. Cloud-scale reasoning will remain essential, but everyday AI use will increasingly blend on-device efficiency with server-side depth.

9. Final Perspective

Over just three years, ChatGPT has evolved from a conversational prototype into a deeply integrated AI system shaping productivity, research workflows, and everyday digital tools. The trajectory from GPT-3.5 to GPT-5.1 highlights a shift toward assistants that are easier to use, more adaptive to individual preferences, and increasingly embedded across devices, software platforms, and enterprise environments.

This evolution reflects a broader industry transition. AI systems will no longer rely exclusively on large cloud-scale inference. The coming years point toward hybrid execution models where lightweight, efficient models run locally on consumer hardware, while cloud systems handle complex reasoning. For enterprises, this hybrid cloud plus local approach is already underway, driven by the strategic need to avoid dependence on a single provider and to secure performance, privacy, and resilience. OpenAI’s multi-cloud deployments illustrate this shift, though compute constraints remain a defining challenge across the industry.

Despite architectural progress, end-user speed has not improved in recent months. As detailed in analyses such as Why AI Models Are Slower in 2025: Inside the Compute Bottleneck and GPU Shortage: Why Data Centers Are Slowing Down in 2025, the global GPU shortage continues to limit capacity. Models have grown heavier, traffic has increased, and providers adjust compute allocation dynamically to maintain stability. This can result in variable performance depending on load and region.

GPT-5 and GPT-5.1 introduced automatic model selection, routing between “Instant”, “Thinking”, and deeper reasoning modes. This mechanism can give the impression of increased responsiveness on simple queries, while preserving capacity for complex tasks. However, it also means answer quality can fluctuate: a practical compromise for maintaining operational continuity at massive scale.

Looking ahead, the critical challenge is no longer raw model size but inference efficiency. GPT-5.1 follows this direction, as do competing systems such as Anthropic’s Haiku 4.5. Sustained reductions in inference cost will unlock new use cases, enable more autonomous AI agents, and support deeper integration into mainstream software and edge devices.

As for GPT-6, no release date has been confirmed, but expectations converge on long-term memory, richer personalization, and improved adaptability across user contexts. The next phases of AI development will favor systems that are more distributed, more efficient, and more aligned with user intent — a trend visible across the latest industry updates and ongoing research, covered in AI News This Week.

Sources & References

Official Sources

- OpenAI Blog – Announcements, model updates, and reasoning improvements https://openai.com/blog

- OpenAI Platform Documentation – API reference, model versioning, release notes https://platform.openai.com/docs

- OpenAI Research – Technical papers on model architecture and training https://openai.com/research

Technical Documentation & Model Releases

- OpenAI Model Index – Current and legacy model listings (GPT-4, GPT-4o, GPT-5, o-series) https://platform.openai.com/docs/models

- OpenAI Release Notes – Historical changes, performance upgrades, feature rollouts https://platform.openai.com/docs/release-notes

Press & Industry Coverage

- The Verge – Coverage on GPT-4o, multimodal capabilities, and model evolution https://www.theverge.com

- Ars Technica – Analysis of OpenAI updates and system behavior under load https://arstechnica.com

- Wired – Industry context on AI scaling, compute budgets, and emerging constraints https://www.wired.com

Performance, Reasoning & Architecture Analysis

- OpenAI Reasoning Models Overview – o-series reasoning methodology and depth selection https://platform.openai.com/docs/guides/reasoning

- OpenAI Multimodal Documentation – Text, vision, audio, video input specs https://platform.openai.com/docs/guides/vision

Your comments enrich our articles, so don’t hesitate to share your thoughts! Sharing on social media helps us a lot. Thank you for your support!