The Millisecond War: Decoding LLM Inference Performance in 2026

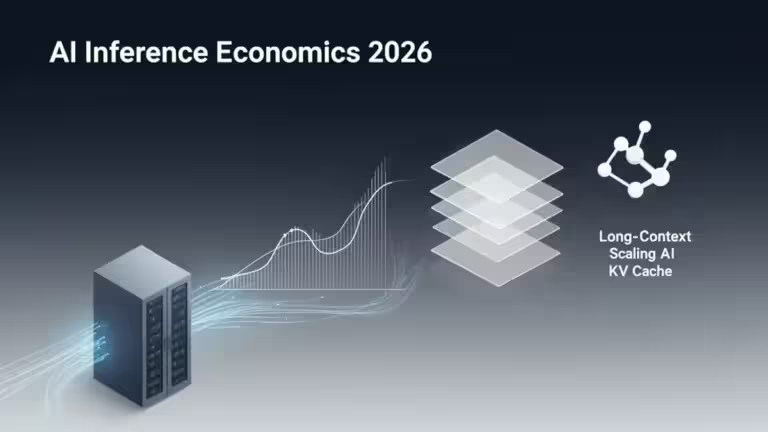

In the 2026 AI ecosystem, raw model intelligence is no longer the sole metric of success; generation speed, measured in tokens per second (token/s), has become the ultimate competitive frontier. As users demand near-instantaneous interactivity, recent benchmarks reveal stark disparities between industry leaders and highlight the critical role of optimized inference engines.

Speed Benchmarks: The Race for Throughput in 2026

Output speed measurements show that not all models are created equal when it comes to latency. A significant gap has emerged between “Flash” architectures optimized for fluidity and “Pro” models dedicated to complex reasoning.

LLM Output Speed Comparison (Feb 2026)

The following table synthesizes average performance observed across major API providers and reference infrastructures.

| Model | Typical Speed (token/s) | Technical Profile |
|---|---|---|
| Gemini 3 Flash | ~200–220 | Optimized for responsiveness (Direct API) |
| GPT-5 Standard | ~170–180 | Versatility and high throughput |
| Gemini 3 Pro | ~100+ | Long-context & multimodal specialist |
| GPT-5 Pro | ~60 | Advanced reasoning (Chain-of-Thought) |
| DeepSeek V3 | ~50–65 | MoE architecture (varies by provider) |
| Claude 4 Sonnet | ~45–50 | Balanced nuance and speed |
| Claude 4 Opus | ~39–40 | High-fidelity reasoning & creative writing |

Methodological Note: These values represent typical ranges observed via Artificial Analysis, Vellum, and public 2026 benchmarks. Real-world performance varies based on provider, region, server load, and prompt context length.

Market Leaders Analysis

According to available public benchmarks, Gemini 3 Flash currently positions itself as one of the fastest models in raw generation, offering a near-instant experience for summarization and translation tasks. This is largely driven by Google’s TPU v6e (Trillium) infrastructure, which is specifically tuned for high-speed inference. Meanwhile, GPT-5 Standard remains a dominant force in the general-purpose assistant market by maintaining an exceptionally high throughput baseline.

Theoretical Use Case: The impact of latency goes beyond user comfort. A fast model can evaluate content while it is still streaming, which makes finer-grained real-time behavioral monitoring practical. A slow model may only finish its check after the request has completed, which in principle leaves a window for small prompt injections (jailbreak attempts) hidden in massive data streams. Discord’s predictive moderation, which analyzes user signals in the background, follows a similar logic.

TTFT vs. Throughput: The Perception of “Real-Time”

For the end-user, perceived speed is a combination of two distinct metrics:

- TTFT (Time To First Token): The delay between sending the request and receiving the first token of the response.

- Throughput: The rate, in tokens per second, at which the rest of the response is generated once streaming has started.

A model can exhibit high throughput but suffer from a long TTFT. This is common in “Reasoning” models: the AI may “think” for 5 to 30 seconds (high TTFT) before streaming the final answer at a rapid pace. For interactive chatbots, a low TTFT is essential to prevent user churn. For long-document analysis, overall throughput matters more, because it determines total processing time and therefore the cost of inference. Understanding the trade-offs between throughput and latency is now a core requirement for AI architects.
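The two metrics can be separated with nothing more than timestamps. The sketch below is illustrative only: `stream_metrics` and `estimated_total_seconds` are hypothetical helper names, and the timestamps are assumed to come from whatever streaming client you use. It treats TTFT as the gap before the first token and throughput as the rate of the tokens that follow.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class StreamMetrics:
    ttft_s: float               # time to first token, in seconds
    decode_tokens_per_s: float  # rate of the tokens that follow the first one
    total_s: float              # request sent -> last token received


def stream_metrics(request_sent_at: float,
                   token_arrival_times: Sequence[float]) -> StreamMetrics:
    """Derive TTFT and throughput from per-token arrival timestamps (seconds)."""
    if not token_arrival_times:
        raise ValueError("need at least one token timestamp")
    first = token_arrival_times[0]
    last = token_arrival_times[-1]
    decode_window = last - first
    tokens_after_first = len(token_arrival_times) - 1
    # With a single token there is no decode window to measure.
    rate = tokens_after_first / decode_window if decode_window > 0 else float("nan")
    return StreamMetrics(ttft_s=first - request_sent_at,
                         decode_tokens_per_s=rate,
                         total_s=last - request_sent_at)


def estimated_total_seconds(ttft_s: float, tokens_per_s: float,
                            output_tokens: int) -> float:
    """Rough end-to-end estimate: wait for the first token, then stream the rest."""
    return ttft_s + output_tokens / tokens_per_s
```

As a rough illustration, an assumed 0.5 s TTFT at 200 token/s delivers a 500-token answer in about 3 s (`estimated_total_seconds(0.5, 200, 500)`), while an assumed 10 s of "thinking" at 60 token/s takes about 20 s for 600 tokens. The TTFT values here are made up for the example; only the token/s figures echo the ranges in the table above.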

Inference Engines: The Orchestrators of Performance

Speed depends as much on the inference runtime as it does on the model weights. Frameworks like vLLM, TensorRT-LLM, and llama.cpp use advanced engineering to shave off milliseconds:

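- Continuous Batching: Scheduling at the level of individual generation steps, so that new requests join the running batch as soon as a slot frees up instead of waiting for every request in the current batch to finish.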

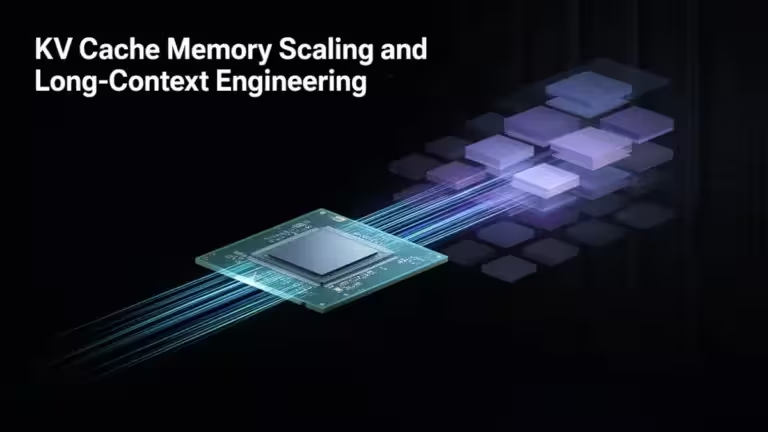

- FlashAttention & PagedAttention: Optimizing GPU memory access and KV Cache management, which is essential for scaling long-context windows.

- Speculative Decoding: Using a smaller “draft” model to predict the next few tokens, which are then validated by the larger model in a single pass.

- Disaggregated Serving (Prefill vs. Decode): A 2026 architecture standard that physically separates the prompt processing phase from the token generation phase to prevent long prompts from slowing down short responses.
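Of the techniques listed above, speculative decoding is the easiest to show in a few lines. The following is a toy sketch of the greedy variant, not how vLLM, TensorRT-LLM, or llama.cpp implement it: the "models" are hypothetical functions over integer tokens, and the verification loop stands in for what a real engine does in one batched forward pass of the large model.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]  # context -> greedy next token


def target_next(ctx: List[int]) -> int:
    """Toy 'large' model (hypothetical): counts upward modulo 10."""
    return (ctx[-1] + 1) % 10


def draft_next(ctx: List[int]) -> int:
    """Toy 'draft' model (hypothetical): agrees with the target except after a 4."""
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10


def speculative_decode(target: NextToken, draft: NextToken, prompt: List[int],
                       max_new_tokens: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding; returns (tokens, number of verification passes)."""
    tokens = list(prompt)
    target_passes = 0
    while len(tokens) - len(prompt) < max_new_tokens:
        # 1. The draft model proposes k tokens one by one (cheap).
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(tokens + proposal))

        # 2. The target model checks every proposed position. A real engine
        #    scores all k positions in a single forward pass; this loop only
        #    simulates that pass.
        target_passes += 1
        accepted: List[int] = []
        for i in range(k):
            expected = target(tokens + proposal[:i])
            if proposal[i] == expected:
                accepted.append(proposal[i])
            else:
                accepted.append(expected)  # replace the first wrong guess
                break
        else:
            # Every guess matched, so the same pass also yields one extra token.
            accepted.append(target(tokens + proposal))

        tokens.extend(accepted)
    return tokens[:len(prompt) + max_new_tokens], target_passes
```

Calling `speculative_decode(target_next, draft_next, [2], max_new_tokens=8)` produces the same tokens as decoding with the target model alone, but with two verification passes instead of eight sequential ones. The gain in practice depends on how often the draft model guesses correctly.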

Selecting the right provider is critical; the integration of Public AI on Hugging Face highlights the industry’s move toward democratizing these specialized inference runtimes.

Technical Architecture: From Cloud to Edge AI

As cloud infrastructures face periodic congestion, a paradigm shift toward Edge AI is underway. In specific scenarios—such as saturated servers or short-context tasks—optimized local inference via high-performance NPUs or memory-efficient formats like DFloat11 can be more responsive than cloud APIs.

This trend is championed by technologies like Google Personal Intelligence, which processes sensitive data directly on-device. Privacy has thus become a performance driver: keeping data on the device removes network round-trips to the cloud, which tends to lower latency when the local hardware can keep up.

AI Performance FAQ

Why is speed lower on “Pro” or “Reasoning” models?

Reasoning models generate intermediate Chain-of-Thought and verification steps before producing the visible answer, which increases the computation time per request.

Is the token/s rate constant during a conversation?

No. Throughput generally decreases as the context window fills up, because generating each new token requires attending over the entire preceding conversation, so the work per token grows with context length.
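A back-of-the-envelope calculation shows why. With standard key/value caching, each layer stores one key vector and one value vector per token, and every decode step has to read that cache. The sketch below uses the usual sizing formula; the function name and the model dimensions in the note are assumptions chosen for illustration and do not describe any model in the table.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Approximate KV cache size for one sequence.

    The leading factor 2 covers one key and one value vector per layer,
    per KV head, per token. bytes_per_value=2 assumes 16-bit storage.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value
```

For a hypothetical model with 32 layers, 8 KV heads, and a head dimension of 128, a 4,096-token context needs `kv_cache_bytes(32, 8, 128, 4096)` = 536,870,912 bytes (512 MiB), and doubling the context doubles that figure. Since the cache is read for every generated token, longer conversations mean more memory traffic per token.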
