The Millisecond War: Decoding LLM Inference Performance in 2026

In the 2026 AI ecosystem, raw model intelligence is no longer the sole metric of success; generation speed, measured in…



The market for AI coding agents in 2026 is defined by fierce competition for control of the terminal. This…

Benchmarks such as Terminal Bench 2.0 or SWE-Bench Pro measure an agent’s ability to produce a correct patch in a…

Automatic speech-to-text systems are now ubiquitous. Yet their evaluation is most often superficial: a few impressions of readability, sometimes a…

AI inference cost, not training expense, now defines the real scalability, latency, and budget limits of modern AI systems. In…

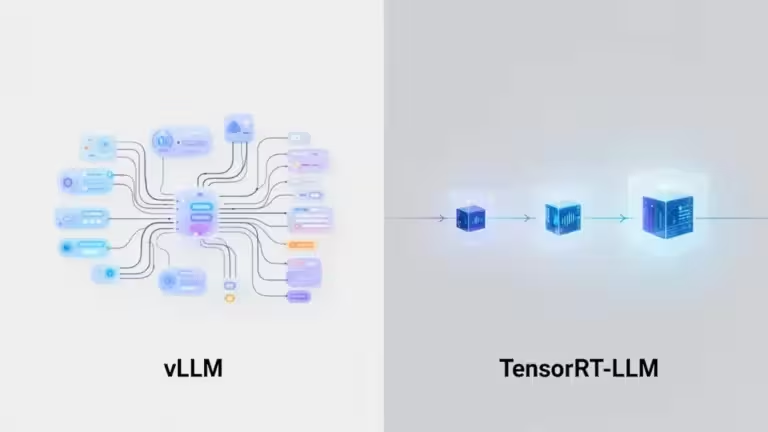

Developers comparing vLLM and TensorRT-LLM are usually evaluating how each runtime handles scheduling, KV cache efficiency, quantization, GPU utilization, and production deployment. This guide…
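KV cache efficiency matters because the cache grows linearly with both context length and batch size, and it often limits how many requests fit on a GPU. The sketch below is a back-of-the-envelope estimate, not either runtime's own accounting. It assumes a standard decoder-only transformer (optionally with grouped-query attention) and a dense, unpaged cache. The model shape used in the example is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    """Hypothetical attention shape of a decoder-only transformer."""
    num_layers: int
    num_kv_heads: int  # fewer than query heads when grouped-query attention is used
    head_dim: int


def kv_cache_bytes(shape: ModelShape, seq_len: int, batch_size: int,
                   bytes_per_elem: int = 2) -> int:
    """Estimate KV cache size in bytes for a dense (unpaged) cache.

    The leading factor of 2 accounts for storing one key and one value
    vector per layer, per KV head, per token. bytes_per_elem is 2 for
    FP16/BF16 and 1 for an 8-bit quantized cache.
    """
    per_token = 2 * shape.num_layers * shape.num_kv_heads * shape.head_dim * bytes_per_elem
    return per_token * seq_len * batch_size


def to_gib(n_bytes: int) -> float:
    """Convert bytes to GiB (2**30 bytes)."""
    return n_bytes / 2**30


if __name__ == "__main__":
    # Hypothetical shape: 32 layers, 8 KV heads, head_dim 128.
    shape = ModelShape(num_layers=32, num_kv_heads=8, head_dim=128)
    fp16 = kv_cache_bytes(shape, seq_len=8192, batch_size=1)
    fp8 = kv_cache_bytes(shape, seq_len=8192, batch_size=1, bytes_per_elem=1)
    print(f"FP16 cache: {to_gib(fp16):.2f} GiB, 8-bit cache: {to_gib(fp8):.2f} GiB")
```

For this hypothetical shape, one 8,192-token sequence needs 1 GiB of cache in FP16 and half that with an 8-bit cache. This is why cache quantization and how a runtime allocates and reuses cache memory directly affect how many concurrent requests a GPU can serve.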