Discord and Edge AI: ultimate privacy or technical black box?

In March 2026, Discord was due to enter a new era of digital regulation. Under pressure from the UK Online Safety Act and Australian authorities, the platform is introducing mandatory age verification. Yet behind the “blackout” facing unverified accounts lies a major technical hurdle: deploying on-device facial age estimation (Edge AI) across millions of heterogeneous devices.

25 February 2026 Update

Discord has postponed the global launch of its age assurance features to late 2026 due to privacy-related criticism. While previous technical analyses of the system remain relevant, both the timeline and Discord’s communication strategy have significantly evolved.

At Cosmo-edge, we have dissected the core of this technology. Is this the future of privacy or a new bottleneck for users? For more details, check our special report on Discord age verification.

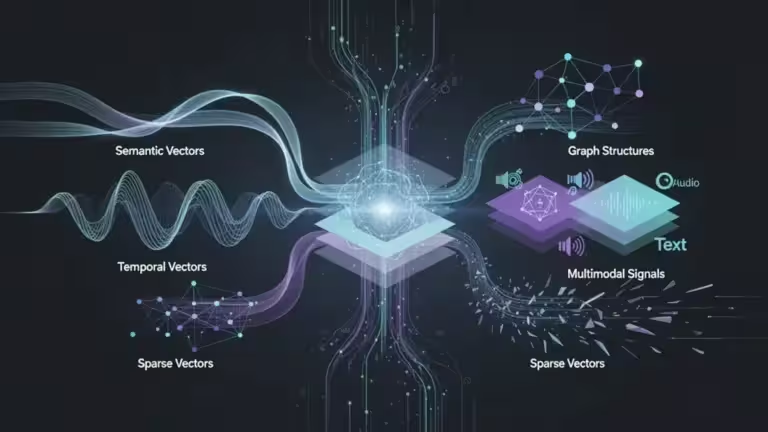

On-device AI: the engineering under the hood

Instead of routing your facial data to its servers (a major legal and security liability after the October 2025 5CA data breach, which leaked 70,000 IDs), Discord is offloading the processing to your own hardware. This local-first approach aligns directly with the data minimization principle of the GDPR and the CNIL's guidance.

WebAssembly architecture and CNN models

Discord leverages WebAssembly (WASM) to execute high-performance code within its Electron client or web browser.

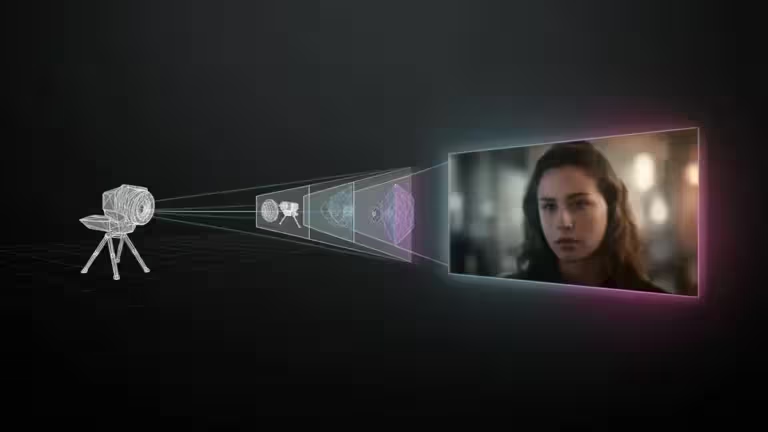

- The Model: While Discord has not officially named its “face scan” partner, the technical architecture points toward a lightweight Convolutional Neural Network (CNN). This type of model, used by industry providers such as Yoti and also available as open-source checkpoints on Hugging Face, can be optimized specifically for local inference.

- Liveness Detection (PoC): To prevent spoofing via simple photos, the AI uses facial landmarks to require real-time movement. A notable technical bypass during the UK beta phase illustrated the limits of these algorithms: users successfully spoofed the system using the ultra-realistic photo mode of Death Stranding. By manipulating Sam Porter’s facial expressions, they bypassed the “proof of life” requirement, forcing a refinement of skin texture and depth analysis filters.
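The two bullets above describe a two-stage pipeline that can be sketched in plain Python. This is our own minimal illustration, not Discord's code (which is not public): the landmark format, the `MIN_MOTION` threshold, and any kernel or weights you pass in are hypothetical. Stage one rejects frame sequences whose facial landmarks barely move; stage two is a toy one-filter CNN (convolution, ReLU, global average pooling, linear head) that regresses an age from a grayscale image.

```python
from math import hypot

# Hypothetical formats: a landmark is an (x, y) pixel coordinate,
# one frame holds a list of landmarks, an image is a 2D list of floats.
Landmarks = list[tuple[float, float]]
Image = list[list[float]]

MIN_MOTION = 2.0  # hypothetical minimum mean landmark displacement, in pixels


def mean_landmark_motion(frames: list[Landmarks]) -> float:
    """Average per-landmark displacement between consecutive frames."""
    total, count = 0.0, 0
    for prev, curr in zip(frames, frames[1:]):
        for (x0, y0), (x1, y1) in zip(prev, curr):
            total += hypot(x1 - x0, y1 - y0)
            count += 1
    return total / count if count else 0.0


def passes_liveness(frames: list[Landmarks]) -> bool:
    """Reject sequences where the face is effectively static (e.g. a printed photo)."""
    return mean_landmark_motion(frames) >= MIN_MOTION


def conv2d_valid(img: Image, kernel: Image) -> Image:
    """2D cross-correlation with 'valid' padding, as computed by a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(img) - kh + 1, len(img[0]) - kw + 1
    return [
        [
            sum(img[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def estimate_age(img: Image, kernel: Image, weight: float, bias: float) -> float:
    """Toy one-filter CNN: conv -> ReLU -> global average pooling -> linear head."""
    fmap = conv2d_valid(img, kernel)
    activated = [[max(0.0, v) for v in row] for row in fmap]
    pooled = sum(sum(row) for row in activated) / (len(activated) * len(activated[0]))
    return weight * pooled + bias
```

As the Death Stranding episode shows, a pure motion check is easy to satisfy with a rendered face that moves convincingly, which is why texture and depth cues end up layered on top. A real model would also stack many trained filters rather than a single hand-set one.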

Estimated performance by hardware setup

| Hardware | Inference time | CPU impact |
| --- | --- | --- |
| Gaming PC (RTX/GTX GPU) | < 1 s | Negligible |
| Office PC (Intel i5/Ryzen 5) | 2–4 s | Brief load spike |
| Entry-level smartphone | 5–10 s | Slight thermal increase |
| Legacy laptop (pre-2018) | 8–12 s | Potential stutter |
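These figures depend heavily on the model, the runtime and the device's thermal state, so they are best read as orders of magnitude. If you want to check your own machine, here is a minimal timing sketch; `fake_infer` is a hypothetical stand-in for a real model call. It discards a few warm-up runs (to exclude one-time costs such as loading or compilation), then reports the median of several timed runs, which is less sensitive to outliers than the mean.

```python
import statistics
import time
from typing import Callable


def benchmark(infer: Callable[[], object], warmup: int = 2, runs: int = 10) -> dict[str, float]:
    """Time a zero-argument inference callable; return latency stats in seconds."""
    for _ in range(warmup):
        infer()  # discarded: warm caches, lazy loading, JIT/WASM compilation
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append(time.perf_counter() - start)
    return {
        "median_s": statistics.median(samples),
        "min_s": min(samples),
        "max_s": max(samples),
    }


def fake_infer() -> int:
    """Hypothetical stand-in for a model call: a fixed amount of CPU work."""
    return sum(i * i for i in range(200_000))


if __name__ == "__main__":
    print(benchmark(fake_infer))
```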

The flip side: reliability and the “Black Box” paradox

While the privacy promise is compelling, the technical reality raises questions about algorithmic fairness and reliability.

The MAE (Mean Absolute Error) challenge

According to NIST's Face Analysis Technology Evaluation (FATE) benchmarks, facial age estimation models typically show a Mean Absolute Error (MAE) of 2 to 4 years.
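For reference, MAE averages the absolute gap between the estimated age â_i and the true age a_i over n test faces. The worked example uses made-up ages purely for illustration:

```latex
\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|\hat{a}_i - a_i\right|

% Illustrative only: true ages (19, 20, 21, 22), estimates (16, 23, 19, 22)
\mathrm{MAE} = \frac{|16-19| + |23-20| + |19-21| + |22-22|}{4}
             = \frac{3 + 3 + 2 + 0}{4} = 2
```

An MAE of 2 years sits at the good end of the range, yet the 19-year-old in this example is estimated at 16, below an 18 threshold. The average error says little about individual errors near the cut-off, which is exactly where young adults sit.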

The Critical Metric: Pilot tests revealed a false-negative rate (adults incorrectly flagged as minors) of 10% to 28% among 18-22 year olds. Poor lighting, glasses, or “youthful” features can cause a misclassification, pushing users toward Persona, a significantly more intrusive ID-scanning alternative.
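To make the metric concrete, here is a minimal sketch that computes the false-negative rate on a labeled cohort and routes each user either to a pass or to an ID-based fallback (the Persona step described above). The cohort, the `threshold` parameter and the route labels are hypothetical and illustrative, not Discord or Persona data.

```python
from dataclasses import dataclass

ADULT_AGE = 18


@dataclass
class Sample:
    true_age: int
    estimated_age: float


def false_negative_rate(samples: list[Sample], threshold: float = ADULT_AGE) -> float:
    """Share of true adults whose estimate falls below the threshold (flagged as minors)."""
    adults = [s for s in samples if s.true_age >= ADULT_AGE]
    if not adults:
        return 0.0
    flagged = sum(1 for s in adults if s.estimated_age < threshold)
    return flagged / len(adults)


def route(estimated_age: float, threshold: float = ADULT_AGE) -> str:
    """Pass users estimated at or above the threshold; send the rest to an ID check."""
    return "pass" if estimated_age >= threshold else "id_fallback"


# Illustrative cohort of young adults (true age, estimated age)
cohort = [Sample(19, 16.0), Sample(20, 23.0), Sample(21, 19.0), Sample(22, 22.0)]
print(false_negative_rate(cohort))                  # 0.25
print(false_negative_rate(cohort, threshold=21.0))  # 0.5
print([route(s.estimated_age) for s in cohort])     # ['id_fallback', 'pass', 'pass', 'pass']
```

The second call shows the trade-off: raising the threshold as a safety buffer makes it harder for minors to slip through, but it doubles the share of adults sent to the ID fallback in this toy cohort.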

Audit opacity: a strategic contradiction

This is where the narrative falters. While market leaders like Yoti embrace transparency through public audits (NIST, ACCS), the system integrated by Discord remains proprietary. Although the system is GDPR-compliant on paper, no independent public audit has yet verified that the on-device code is fully isolated. For experts, it remains a “black box” in which one must take the platform’s security claims at face value.
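Short of a full audit, one thing outside observers can check is that the module a client downloads is byte-for-byte the one that was reviewed. A minimal sketch, assuming an auditor had published a SHA-256 digest of the WASM module (a hypothetical: no such digest is referenced here); `check_file` and its path argument are likewise illustrative.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def matches_audited_build(module_bytes: bytes, audited_digest_hex: str) -> bool:
    """True if the module is exactly the build whose digest was published."""
    # Normalize case, then compare with hmac.compare_digest (constant-time comparison).
    return hmac.compare_digest(sha256_hex(module_bytes), audited_digest_hex.lower())


def check_file(path: Path, audited_digest_hex: str) -> bool:
    """Hypothetical usage: verify a locally cached WASM module against a digest."""
    return matches_audited_build(path.read_bytes(), audited_digest_hex)
```

A matching digest only proves that the shipped code is the audited code; it says nothing about what that code does, which is why the audit itself is the missing piece.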

Why independent creators are left behind

At Cosmo-edge and Cosmo-Games.com, we have long advocated for local AI as a tool for personalization and privacy. For independent publishers, replicating Discord’s strategy is now feasible by integrating third-party SDKs. These tools allow complex models to run directly in the browser via WebAssembly, ensuring that biometric analysis stays confined to the user’s device memory (RAM).
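The pattern described above can be sketched as follows: the frame is analyzed in memory and only a minimal result leaves the function. This is a hypothetical structure, not a specific SDK's API; `estimate_age` stands in for whatever local model an SDK exposes, and the `over_18`/`method` payload fields are illustrative. Note that in Python, overwriting a `bytearray` is best-effort: it clears that buffer but cannot guarantee that no other copy exists in memory.

```python
import json
from typing import Callable

ADULT_AGE = 18


def local_age_check(frame: bytearray, estimate_age: Callable[[bytearray], float]) -> str:
    """Run the age estimate locally and return only a minimal JSON payload.

    Neither the raw frame nor the numeric estimate appears in the output.
    """
    try:
        estimated = estimate_age(frame)
        payload = {"over_18": estimated >= ADULT_AGE, "method": "on_device_estimation"}
    finally:
        # Best-effort wipe of the frame buffer, even if the estimator raised.
        frame[:] = bytes(len(frame))
    return json.dumps(payload)
```

Only the returned JSON string would be sent to the server; everything else stays on the device and the pixel buffer is zeroed once the check completes.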

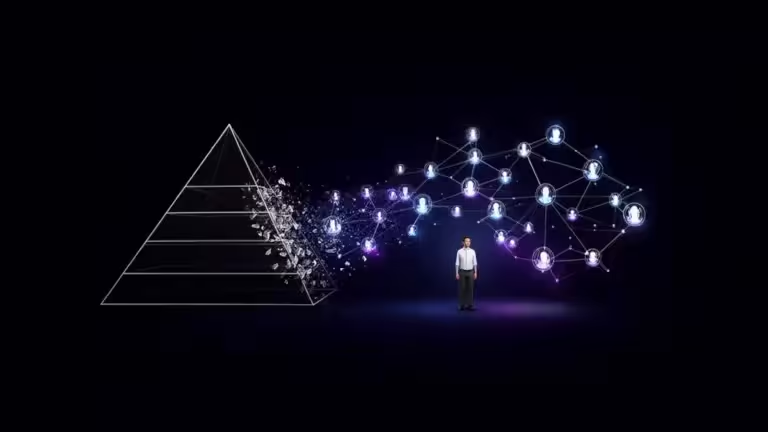

Edge AI: privacy savior or big tech privilege?

Discord’s pivot to on-device AI marks a decisive shift where the cloud is no longer the default for sensitive data. By leaning on Edge AI, the platform attempts to bridge the gap between regulatory compliance and the right to relative anonymity.

However, will this transition be enough to restore lasting trust? The paradox is striking: Discord secures access on one end through anonymous facial estimation, yet on the other it continues to refine behavioral inference models built on private metadata. The question for 2026 is whether “Privacy by Design” becomes a globally audited standard or remains a marketing shield for tech giants.

Understanding the broader implications of on-device inference is key to navigating the new digital safety era. You can find our full series of technical reports in our dedicated Discord 2026 Age Verification Hub.
