The Sora Sunset: Decoding OpenAI’s Strategic Retreat from Generative Video

The sudden discontinuation of Sora in early 2026 marks a watershed moment for the generative AI industry. Once hailed as the “Hollywood-killer” during its viral unveiling, the model has been moved from a public-facing product roadmap to a “research integration” phase before ever reaching a general 1.0 release.

This retreat is not a sign of technical failure, but rather a calculated pivot toward capital efficiency. As the industry shifts from the “era of wonder” to the “era of ROI,” OpenAI’s decision to sunset its most famous video model reflects a broader strategic reallocation of compute toward agentic reasoning and enterprise-grade reliability.

Official Discontinuation: What We Know for Certain

While the tech community speculated on a potential “Sora 2” or a wide-scale ChatGPT integration, OpenAI’s official communications have signaled a definitive end to Sora as a standalone consumer product. According to the official OpenAI discontinuation notice, the organization is moving away from dedicated text-to-video interfaces to focus on core multimodal capabilities.

Key confirmed milestones:

- Access Termination: The “Red Teaming” phase and the “Sora for Artists” creative program will officially conclude by the end of Q2 2026.

- API Decommissioning: Early-access API endpoints for enterprise partners on Microsoft Azure are being phased out, with a full shutdown scheduled for June 30, 2026.

- Internal Integration: OpenAI maintains that the underlying research—specifically regarding spatio-temporal consistency—will be “folded into” future multimodal iterations of its primary models (likely the “Orion” or GPT-5 lineage).

This move effectively ends the pursuit of a “YouTube of AI” under the Sora brand, a sharp departure from the expectations set during the OpenAI DevDay announcements just a year prior.

The Unit Economics of Pixels: Why Video AI Hit a Financial Wall

The sunsetting of Sora is, at its core, an admission that the “Inference Tax” on video is currently too high for a sustainable business model. While OpenAI has not released specific loss ledgers, industry analysts and compute economists point to a massive mismatch between the cost of generation and the market’s willingness to pay.

The Inference Gap

The computational load of video generation does not scale the way text or static images do. The amount of data grows along three axes at once (width, height, and time), which is the sense in which the cost is often described as cubic. To generate a single second of high-fidelity video, a model must maintain spatial consistency across millions of pixels while simultaneously ensuring temporal coherence across 24 to 60 frames.
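The scaling argument can be made concrete with a rough sketch. It assumes a patch-based transformer that splits every frame into 16×16-pixel patches and runs full self-attention over all of them. Both the patch size and the attention pattern are illustrative assumptions, not published details of Sora's architecture, and the function names are hypothetical.

```python
def video_tokens(width, height, frames, patch=16):
    """Count spatio-temporal patches (tokens) in one clip.

    Assumes square patches of `patch` pixels and one token per patch
    per frame; real models typically compress in a latent space first.
    """
    return (width // patch) * (height // patch) * frames


def attention_pairs(tokens):
    """Full self-attention compares every token with every other token."""
    return tokens * tokens


# One 720p still image versus one second of 720p video at 24 fps.
image = video_tokens(1280, 720, frames=1)
clip = video_tokens(1280, 720, frames=24)

print(f"tokens per image: {image}")
print(f"tokens per 1 s clip: {clip}")
print(f"token ratio: {clip // image}x")
print(f"attention-pair ratio: {attention_pairs(clip) // attention_pairs(image)}x")
```

Under these assumptions, one second of video carries 24 times the tokens of a single frame, but the pairwise attention work grows by 24 squared. Latent compression and sparse attention reduce the constants in practice, yet the gap relative to a still image remains large.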

- Compute-to-Revenue Mismatch: Estimates from infrastructure analysts suggest that running a model with Sora’s parameters could cost between hundreds of thousands and a million dollars per day in raw H100/B200 compute cycles.

- The “Slop” Problem: Unlike a coding assistant or a legal LLM—where the output has high economic utility—much of the early Sora usage was focused on “viral slop” for social media. This created a high-volume, low-margin traffic profile that burned through GPU cycles without building a sticky, high-value user base.

Hardware as a Constraint

Even for a company with OpenAI’s massive Microsoft-backed infrastructure, GPU scarcity remains a reality. Every H100 cluster dedicated to Sora was a cluster not being used to train “Orion” or to serve low-latency requests for ChatGPT Plus. In a pre-IPO environment, capital efficiency is king. Pruning a “compute-hungry” research project to prioritize VRAM-efficient and high-margin models is a standard move for a maturing tech giant.

The Compute Arbitrage: Prioritizing Reasoning over Rendering

The strategic pivot reflects a fundamental realization within OpenAI: the path to AGI (Artificial General Intelligence) lies in Reasoning and Agency, not in “faking” the physical world through pixels.

- From Generative to Agentic: The resources once earmarked for Sora are being reallocated to Agentic AI. An agent that can autonomously navigate a company’s ERP system or refactor a legacy codebase offers a recurring B2B value proposition that far outweighs that of a video generator.

- The Pivot to Enterprise: As discussed during the OpenAI DevDay announcements, the focus has shifted toward building an “OS for AI.” In this vision, video is a niche feature, not a core product.

By killing Sora, OpenAI is betting that the market cares more about an AI that can do work than an AI that can show a simulation of work.

Market Saturation and the Shift to Specialized Vertical Stacks

The discontinuation of Sora isn’t occurring in a vacuum. By 2026, the AI video landscape has matured from a single-player race into a fragmented market where specialized control and workflow integration have outperformed “one-click” cinematic generation.

The Rise of the Vertical Specialists

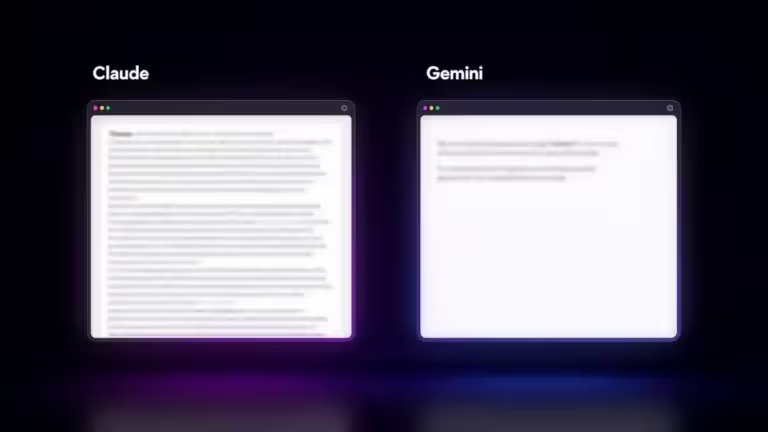

Professional creators have largely migrated to platforms that offer more than just a “prompt-to-video” box.

- Runway (Gen-4): Dominates the high-end creative market by offering granular tools like Motion Brush, camera path controls, and multi-image references.

- Adobe Firefly (Video Model): Leverages the ultimate moat: deep integration. By embedding video generation directly into Premiere Pro and After Effects, Adobe solved the “last mile” problem that Sora never addressed: the transition from a generated clip to a finished edit.

- Kling AI (Kuaishou): The volume leader. With the ability to generate clips up to 3 minutes in length (compared to Sora 2’s 25-second limit) and a significantly lower API cost ($0.07/sec vs. Sora’s $0.10–$0.50/sec), Kling captured the mid-tier and social media markets that OpenAI found unprofitable to serve.
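To put the per-second prices quoted above in per-clip terms, here is a small arithmetic sketch. The rates are the ones cited in this article ($0.07/sec for Kling, $0.10 to $0.50/sec for Sora). The 10-second clip length and the helper names are illustrative assumptions.

```python
# Per-second API prices as quoted in the article: (low, high) in USD.
PRICES_PER_SECOND = {
    "Kling": (0.07, 0.07),
    "Sora": (0.10, 0.50),
}


def cost_range(provider, seconds):
    """Return the (low, high) cost in USD for a clip of the given length."""
    low, high = PRICES_PER_SECOND[provider]
    return round(seconds * low, 2), round(seconds * high, 2)


clip_seconds = 10  # hypothetical clip length for comparison
for provider in PRICES_PER_SECOND:
    low, high = cost_range(provider, clip_seconds)
    print(f"{provider}: ${low:.2f} to ${high:.2f} for {clip_seconds} s")
```

At the quoted rates, a 10-second clip costs $0.70 on Kling and between $1.00 and $5.00 on Sora, so the gap ranges from roughly 1.4x to about 7x depending on which Sora rate applies.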

The Local Efficiency Counter-Movement

As cloud-based video remains expensive, the technical community has pivoted toward Local Inference and Optimization. The adoption of quantized model files such as GGUF and reduced-precision weights such as FP8 has allowed prosumers to run high-quality video pipelines on consumer RTX hardware.

This decentralized “homegrown” competition made it increasingly difficult for OpenAI to justify a $200/month Pro tier for Sora when a user could achieve similar results using ComfyUI and open-weight models with zero recurring subscription fees.
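A back-of-the-envelope sketch shows why reduced precision matters on consumer GPUs. It estimates the memory needed just to hold model weights at different precisions. The 14-billion-parameter model size is a hypothetical example, and the 4-bit figure is an approximation: real GGUF quantization types store extra scaling metadata and land slightly above 0.5 bytes per weight.

```python
# Approximate storage cost per weight, in bytes.
BYTES_PER_PARAM = {
    "fp16": 2.0,
    "fp8": 1.0,
    "q4": 0.5,  # approximation for 4-bit quantization, ignoring metadata
}


def weight_gib(params_billion, fmt):
    """Estimate weight memory in GiB; excludes activations and other models."""
    return params_billion * 1e9 * BYTES_PER_PARAM[fmt] / 2**30


model_size_b = 14  # hypothetical open-weight video model, in billions of parameters
for fmt in BYTES_PER_PARAM:
    print(f"{fmt}: ~{weight_gib(model_size_b, fmt):.1f} GiB of weights")
```

Under these assumptions, the FP16 weights alone would exceed the 24 GB of memory on a high-end consumer card, while the FP8 and 4-bit versions fit with room left over for activations, the text encoder, and the video decoder.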

Conclusion: The End of the “One Model to Rule Them All” Era

The death of Sora is a signal that the era of the “Generalist Giant” is ending. OpenAI is no longer trying to be everything to everyone; it is evolving into an Infrastructure and Agent company.

For the industry, this shutdown is a wake-up call regarding vendor lock-in. It proves that even the most well-funded AI products can vanish if their unit economics don’t align with the reality of GPU scarcity. The future of AI video likely lies in hybrid workflows: cloud-based agents for planning and low-res “sketches,” followed by local, specialized upscaling and refinement.

FAQ: Navigating the Sora Discontinuation

Why was Sora cancelled if it was so technically advanced?

Internal sustainability was the primary factor. Estimates vary widely, but the most aggressive describe Sora as a “compute sinkhole” costing upwards of $15 million daily in inference while seeing a retention rate of less than 8% for Pro users. OpenAI chose to reallocate these GPU clusters to its Agentic Commerce Protocol (ACP) and reasoning models.

Will I lose my Sora-generated videos?

Yes, unless you export them. The Sora app is scheduled to shut down on April 26, 2026, and the API will follow on September 24, 2026. Users are advised to archive their assets before these deadlines.

Is OpenAI leaving the video space entirely?

Not exactly. The underlying technology is being folded into ChatGPT’s multimodal search and “proactive assistant” features. You may see video capabilities return, but as a secondary feature of a broader AI agent rather than a standalone creative suite.

What is the best alternative for professional production?

For shot-specific control and motion precision, Runway Gen-4 is the current industry standard. For high-volume, long-duration content (up to 3 minutes), Kling AI offers the most competitive pricing and stability.

Source: OpenAI
