SearXNG in 2026: The Structural Paradox of Private Metasearch and AI Retrieval

The digital landscape of 2026 has fundamentally altered our relationship with information retrieval. As mainstream search engines become increasingly saturated with AI-generated filler and aggressive tracking telemetry, the demand for sovereign search infrastructure has transitioned from a niche privacy concern to a technical necessity. In this environment, SearXNG stands as a defiant abstraction layer.

Unlike traditional search engines that crawl the web to build proprietary, multi-petabyte indexes, SearXNG operates as a metasearch engine. It does not “own” its data; instead, it queries dozens of external engines in real time, aggregates their results, and presents them through a unified, privacy-hardened interface. This model creates a unique structural paradox: SearXNG provides unparalleled anonymity by acting as a shield for the user, yet it remains entirely dependent on the very giants it seeks to bypass, such as Google, Bing, and Baidu.

For the modern developer and self-hoster, SearXNG is no longer just a tool for private browsing. It has evolved into the critical “discovery” component of the local AI stack, serving as the primary retrieval mechanism for autonomous agents and Retrieval-Augmented Generation (RAG) pipelines. Understanding its architecture, its deployment nuances, and its inherent limitations is essential for anyone looking to maintain digital sovereignty in an era of centralized AI.

1. Core Architecture: How Metasearch Operates Without an Index

At its technical core, SearXNG is a request aggregator written in Python. While a standard search engine follows the “Crawl, Index, Rank” lifecycle, SearXNG operates on a “Dispatch, Retrieve, Normalize” model. When a user submits a query, SearXNG dispatches it concurrently to multiple “engines” (Python modules written to interface with specific external sites), and each engine must answer within a configurable timeout.

The Retrieval Mechanisms

SearXNG does not rely solely on a single method to pull data; it utilizes a modular backend system to communicate with over 70 different services:

- HTML Scraping: For engines that do not offer a public API, SearXNG uses CSS selectors or XPath to extract data directly from the raw HTML of a search results page. This is the most fragile method, as minor layout changes on the source site can break the engine module.

- JSON Endpoints: Many modern platforms provide semi-structured data via internal or public JSON endpoints. SearXNG leverages these for higher reliability and lower parsing overhead compared to HTML.

- Public APIs: Where available, SearXNG uses official APIs (e.g., Wikipedia, WolframAlpha) to retrieve structured data.

- RSS/Atom Feeds: For news-heavy or chronological searches, the engine can parse XML feeds to gather the latest updates.
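To make the module idea concrete, here is a minimal sketch of an engine for a JSON endpoint. SearXNG engine modules generally expose a `request(query, params)` function that sets the outgoing URL in `params` and a `response(resp)` function that turns the reply into a list of result dicts with `url`, `title`, and `content`. The endpoint `https://example.org/api/search` and its `hits`, `link`, `name`, and `snippet` fields are hypothetical placeholders, not a real service.

```python
"""Minimal sketch of a SearXNG-style engine module for a JSON endpoint.

The endpoint URL and the response fields ("hits", "link", "name",
"snippet") are hypothetical placeholders, not a real service.
"""
import json
from urllib.parse import urlencode

# Module-level metadata, in the style SearXNG engine modules use.
categories = ["general"]
paging = True

base_url = "https://example.org/api/search"  # hypothetical endpoint


def request(query, params):
    """Build the outgoing request by filling in the params dict."""
    args = {"q": query, "page": params.get("pageno", 1)}
    params["url"] = f"{base_url}?{urlencode(args)}"
    return params


def response(resp):
    """Normalize the raw JSON reply into SearXNG-style result dicts."""
    results = []
    for hit in json.loads(resp.text).get("hits", []):
        results.append({
            "url": hit["link"],
            "title": hit.get("name", ""),
            "content": hit.get("snippet", ""),
        })
    return results
```

The module never performs the HTTP call itself: SearXNG's network layer sends the request built by `request()` and hands the reply to `response()`, which is what lets the same dispatch loop drive scrapers, JSON endpoints, and APIs alike.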

The Normalization Layer

Once the raw data returns from the various sources, SearXNG’s normalization layer takes over. Each engine’s output is converted into a standard internal format. The engine then performs deduplication—ensuring that if a link appears in both Google and Bing results, it is only shown once—and calculates a basic ranking score based on the frequency and position of the result across the queried sources.
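The deduplication and scoring step can be sketched as follows. This is a simplified model, not SearXNG's actual code: URLs are compared after a hypothetical `normalize_url` drops the scheme, a leading `www.`, and a trailing slash, and each merged result scores `occurrences × Σ 1/position`, so a link that several engines rank highly beats one that a single engine ranks first. SearXNG's own merging logic differs in detail (it also applies configurable per-engine weights, for instance).

```python
from urllib.parse import urlsplit


def normalize_url(url):
    """Hypothetical normalization: ignore scheme, leading 'www.', trailing '/'."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/")
    return f"{host}{path}?{parts.query}" if parts.query else f"{host}{path}"


def merge(engine_results):
    """Deduplicate and score results.

    engine_results maps engine name -> ordered list of URLs (rank 1 first).
    Returns merged entries sorted by descending score.
    """
    merged = {}
    for engine, urls in engine_results.items():
        for position, url in enumerate(urls, start=1):
            key = normalize_url(url)
            # The first URL seen for a key is kept as the displayed link.
            entry = merged.setdefault(key, {"url": url, "engines": [], "positions": []})
            entry["engines"].append(engine)
            entry["positions"].append(position)
    for entry in merged.values():
        occurrences = len(entry["positions"])
        entry["score"] = occurrences * sum(1 / p for p in entry["positions"])
    return sorted(merged.values(), key=lambda e: e["score"], reverse=True)
```

With `google` returning `https://www.example.org/a/` first and `bing` returning `https://example.org/a` second, the two collapse into one entry scoring 2 × (1 + 1/2) = 3.0, ahead of any link that only one engine returned.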

This architecture allows SearXNG to be exceptionally lightweight. Because it offloads the storage of the “World Wide Web” to the major providers, a fully functional instance can run on a machine with as little as 2GB of RAM, provided it is not serving a high volume of concurrent users.

2. Deployment Realities: From Docker to Bare-Metal

In 2026, the SearXNG community has moved toward containerization as the path of least resistance, but the software remains highly flexible. While Docker is the most common recommendation, it is by no means required.

The Container Consensus

Docker and Podman have become the industry standard for deploying SearXNG due to their ability to manage complex Python dependencies and system-level configurations (like uWSGI) in a pre-packaged environment.

- Official Images: SearXNG publishes multi-architecture images (searxng/searxng) on Docker Hub and GHCR.

- Orchestration: Advanced users often deploy SearXNG within Kubernetes clusters to ensure high availability and load balancing for high-traffic instances.

Native and Bare-Metal Alternatives

For those seeking to minimize virtualization overhead or who prefer traditional Linux systems administration, SearXNG can be deployed natively:

- Python + uWSGI: The application is designed to run behind the uWSGI application server, which manages the worker processes and threads that serve incoming search requests.

- systemd Integration: In a native environment, SearXNG is typically managed via systemd unit files, ensuring it restarts automatically and operates as a system service.

- The Installation Script: To bridge the gap, SearXNG provides an official installation script that automates the setup of dependencies, virtual environments, and uWSGI on distributions like Debian, Arch, and Fedora.

The Redis Variable: Caching and Performance

While SearXNG is functionally stateless, Redis (or the Valkey fork) is a critical performance enhancer for any production-grade instance.

- Is it Required? No. SearXNG can operate without Redis. In its absence, it will default to a local SQLite-based cache or skip caching entirely for certain features.

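- Why use it? Redis/Valkey gives all worker processes a shared, fast store, and its most important consumer is the Limiter described below. Note that SearXNG core does not cache complete search results: every query still fans out to the upstream engines. If you want common searches served without new outbound requests (which also reduces the likelihood of your server being flagged as a bot), that caching has to be added in front of the instance.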

- Rate Limiting: Redis is also used by the SearXNG Limiter plugin to protect your instance from being overwhelmed by bot traffic or API-harvesting attempts.
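The Limiter's core idea, counting requests per client IP inside a time window, can be sketched in a few lines. This is a simplified fixed-window counter, not SearXNG's actual bot-detection code. The in-memory dict stands in for a shared Redis/Valkey counter (typically an increment with an expiry), which is what lets every worker process see the same counts, and the limits `max_requests` and `window_s` are illustrative values.

```python
import time


class FixedWindowLimiter:
    """Per-IP fixed-window rate limiter (illustrative, in-memory).

    In production the counters would live in Redis/Valkey so that all
    worker processes share them; here a dict stands in for that store.
    """

    def __init__(self, max_requests=30, window_s=60.0, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_s = window_s
        self.clock = clock
        self._windows = {}  # ip -> (window_start, count)

    def allow(self, ip):
        """Return True if this request from `ip` is within the limit."""
        now = self.clock()
        start, count = self._windows.get(ip, (now, 0))
        if now - start >= self.window_s:
            start, count = now, 0  # window expired: start a fresh one
        count += 1
        self._windows[ip] = (start, count)
        return count <= self.max_requests
```

The injectable `clock` makes the behavior easy to test; a fixed window is the simplest design, and a sliding window smooths out the burst that a client can squeeze in at a window boundary.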

3. The Privacy Paradox: Software vs. Infrastructure

The central appeal of SearXNG is its “no-logs” philosophy. However, for the technical administrator, it is vital to distinguish between the application layer (the SearXNG software) and the infrastructure layer (the server environment).

The “No-Storage” Design

By default, the SearXNG software is engineered to be stateless. It does not implement a user database, and it does not store search history or persistent user profiles.

- Session Management: Preferences (such as language or specific engine toggles) are typically stored in the user’s browser via a cookie or encoded into the URL, rather than on the server.

- In-Memory Processing: Search results are aggregated in RAM, served to the user, and then discarded once the request is complete.
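Statelessness works because everything the server needs arrives with the request. The sketch below illustrates the URL-encoding idea: preferences are serialized, compressed, and base64-encoded into one URL-safe token, then decoded on the next request without any server-side lookup. The function names and the exact encoding are illustrative assumptions and are not guaranteed to match SearXNG's own preferences format.

```python
import base64
import zlib
from urllib.parse import parse_qsl, urlencode


def encode_preferences(prefs):
    """Serialize a flat dict of string preferences into one URL-safe token."""
    raw = urlencode(sorted(prefs.items())).encode("utf-8")
    return base64.urlsafe_b64encode(zlib.compress(raw, 9)).decode("ascii")


def decode_preferences(token):
    """Rebuild the preferences dict from the token; no server state involved."""
    raw = zlib.decompress(base64.urlsafe_b64decode(token.encode("ascii")))
    return dict(parse_qsl(raw.decode("utf-8")))
```

Because such a token travels in the URL, it can also land in the proxy access logs and browser history discussed below.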

The Infrastructure Leak

While the software is “clean,” the infrastructure hosting it often remains “noisy.” Unless specifically hardened, metadata can accumulate in several locations:

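- Reverse Proxy Logs: If you use Nginx, Caddy, or Traefik for TLS termination, their access logs can record every incoming IP address and the full request URL, including the search query whenever it is sent as a GET parameter. Nginx writes an access log by default; Caddy and Traefik do so once access logging is enabled.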

- Container Logs: In a Docker environment, the standard output (stdout) of the container may capture request details that are stored by the Docker daemon.

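- System Logs: journald collects whatever services write to stdout/stderr (including application-server request logs), and the kernel can record connection metadata, for example through firewall LOG rules or SYN-flood warnings during high-traffic periods.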

- Caching Layers: While Redis improves performance, it temporarily stores query strings in memory. If the server is compromised or an unencrypted dump is taken, this data could be exposed.

Insight: To achieve true privacy, an administrator must disable logging at the proxy level or route logs to /dev/null. Without these infrastructure-level changes, the “no-storage” claim of the software is undermined by the verbosity of the operating system.

4. The Arms Race: Scraping, Blocking, and IP Reputation

Operating a SearXNG instance is an act of digital camouflage. Because SearXNG often relies on scraping HTML or spoofing browser headers, it is in a constant battle with the anti-bot mechanisms of major search engines.

The Bot-Detection Barrier

In 2026, engines like Google and Bing have deployed advanced behavioral analysis to detect non-human traffic. They look for:

- High Request Volume: Too many searches coming from a single IP address in a short window.

- Datacenter IP Ranges: Many search engines automatically flag or CAPTCHA traffic originating from AWS, DigitalOcean, or Hetzner.

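- Inconsistent Headers: Discrepancies between the declared User-Agent and the actual network behavior, for example a desktop-browser User-Agent paired with a TLS handshake or header order that the claimed browser would not produce.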

Public vs. Self-Hosted Instances

This “arms race” affects different types of instances in vastly different ways:

- Public Instances: These are the primary targets. Because hundreds of users share a single IP, they are frequently blocked or presented with CAPTCHAs. This is known as the “tragedy of the commons” in the metasearch world.

- Private/Self-Hosted Instances: An individual or family using an instance on a residential IP (or a clean VPS) rarely encounters these issues. The traffic volume is low enough to blend in with standard human behavior.

Defensive Measures: Filtron and Proxies

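To maintain uptime, older searx setups placed Filtron, a Go-based filtering reverse proxy, in front of the instance as an application firewall; SearXNG has since replaced it with its built-in Limiter. Together, these inbound and outbound measures can:

- Rate-limit aggressive bots (Filtron in older setups, the Limiter today).

- Filter out malicious query patterns.

- Distribute outbound requests across a pool of rotating proxies to prevent IP-based blacklisting. This is not something Filtron does: outbound proxies are configured in the outgoing section of settings.yml, and SearXNG distributes engine requests across the listed proxies in round-robin fashion.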
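Distributing outbound requests across a proxy pool boils down to round-robin selection. Below is a minimal sketch using hypothetical proxy URLs; in SearXNG itself you would normally list the proxies under the `outgoing` section of `settings.yml` rather than write this code, and `ProxyPool` and `opener_for` are illustrative names.

```python
import itertools
import urllib.request


class ProxyPool:
    """Round-robin rotation over a fixed list of outbound proxies."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("need at least one proxy")
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self):
        """Return the proxy to use for the next outgoing engine request."""
        return next(self._cycle)


def opener_for(proxy):
    """Build a urllib opener that routes HTTP and HTTPS through `proxy`."""
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    return urllib.request.build_opener(handler)
```

Each engine request would call `opener_for(pool.next_proxy())`, so consecutive requests leave from different exit IPs and no single address accumulates enough volume to trip the detection heuristics listed above.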

5. SearXNG in the AI Ecosystem: The Retrieval Layer

By 2026, SearXNG has transitioned from a privacy tool for humans into a foundational component of the local AI ecosystem. As developers shift away from centralized search APIs due to cost and privacy concerns, SearXNG has emerged as the preferred “Retriever” for autonomous agents and Retrieval-Augmented Generation (RAG) pipelines.

The RAG Pipeline: Grounding LLMs in Real-Time Data

SearXNG does not perform RAG itself; instead, it serves as the essential first step in a multi-stage retrieval architecture. In a modern 2026 workflow, the process typically follows this path:

- User Query: A user asks a question to a local LLM (e.g., via Ollama).

- Search Orchestration: The system converts the query into optimized keywords and dispatches them to a self-hosted SearXNG instance.

- Context Extraction: SearXNG returns a list of results. The RAG pipeline then scrapes the content of these URLs, chunks the text, and generates embeddings.

- LLM Synthesis: The most relevant text “chunks” are fed back into the LLM as grounded context, enabling the model to provide accurate, source-cited answers while minimizing hallucinations.
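The context-extraction and synthesis steps (chunking fetched pages and selecting context for the LLM) can be sketched without external services. To keep the example self-contained, a simple word-overlap score stands in for the embedding similarity a real pipeline would compute; the page texts are hypothetical, and fetching, embedding, and the LLM call itself are left out.

```python
import re


def chunk_text(text, max_words=50, overlap=10):
    """Split text into overlapping windows of at most max_words words."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be in [0, max_words)")
    words = text.split()
    if not words:
        return []
    step = max_words - overlap
    return [" ".join(words[i:i + max_words])
            for i in range(0, max(len(words) - overlap, 1), step)]


def tokens(text):
    """Lowercased word set used for the overlap score."""
    return set(re.findall(r"\w+", text.lower()))


def top_chunks(query, pages, k=3):
    """Rank chunks from all pages by word overlap with the query.

    pages: list of (url, text) pairs. Word overlap is a stand-in for the
    embedding similarity a real RAG pipeline would use.
    """
    q = tokens(query)
    scored = []
    for url, text in pages:
        for chunk in chunk_text(text):
            score = len(q & tokens(chunk))
            if score:
                scored.append((score, url, chunk))
    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:k]


def build_prompt(question, chunks):
    """Assemble grounded context with numbered source citations."""
    context = "\n".join(f"[{i}] {chunk} (source: {url})"
                        for i, (_, url, chunk) in enumerate(chunks, start=1))
    return ("Answer using only the sources below and cite them as [n].\n\n"
            f"{context}\n\nQuestion: {question}")
```

Keeping the source URL attached to every chunk is what makes the final answer citable; swapping `tokens`/`top_chunks` for an embedding model and a vector search changes the ranking quality but not the shape of the pipeline.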

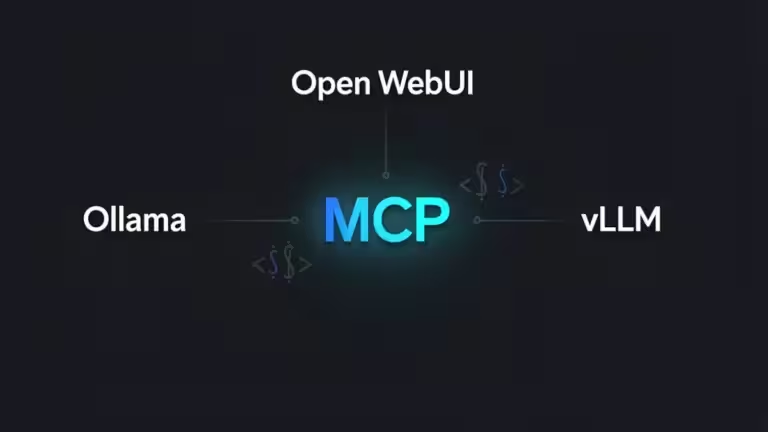

AI Integrations: Open WebUI, Perplexica, and MCP

Several prominent open-source platforms now use SearXNG as their primary search provider to create “local-first” alternatives to services like Perplexity:

- Open WebUI: This popular interface for local LLMs supports native SearXNG integration, allowing users to enable “Web Search” directly in their chat settings.

- Perplexica: An AI-powered search engine that bundles a frontend and a backend orchestrator and connects to a local LLM (for example via Ollama) or a hosted model. It relies on SearXNG to fetch up-to-date information without the overhead of daily index updates.

- Model Context Protocol (MCP): In late 2025 and early 2026, the adoption of MCP (the “USB-C for AI tools”) allowed AI applications such as Claude Desktop to query SearXNG directly through lightweight MCP servers.

The JSON Mandate for Agentic Workflows

For these AI integrations to function, a critical configuration change is required. By default, SearXNG only outputs HTML, which is difficult for AI agents to parse reliably. To enable machine-readable communication, administrators must manually activate the JSON format in settings.yml:

```yaml
search:
  formats:
    - html
    - json  # Required for AI and API integrations
```

Without this setting, SearXNG rejects requests for format=json (it answers with HTTP 403 Forbidden), so tools like LangChain, CrewAI, and Flowise cannot “read” search results and the retrieval chain fails.
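Once `json` is enabled, an agent can call the instance's `/search` endpoint with `format=json` and read the `results` array, whose entries carry fields such as `url`, `title`, and `content`. Here is a minimal standard-library client, assuming a hypothetical instance at `http://localhost:8080`; `parse_results` is an illustrative helper, and a production integration would add retries and better error handling.

```python
import json
import urllib.request
from urllib.parse import urlencode

BASE_URL = "http://localhost:8080"  # hypothetical self-hosted instance


def search_url(query, base_url=BASE_URL, **extra):
    """Build a /search URL that asks SearXNG for JSON output."""
    params = {"q": query, "format": "json", **extra}
    return f"{base_url}/search?{urlencode(params)}"


def parse_results(payload, limit=5):
    """Keep only the fields an LLM pipeline usually needs."""
    data = json.loads(payload)
    return [
        {"title": r.get("title", ""), "url": r["url"], "snippet": r.get("content", "")}
        for r in data.get("results", [])[:limit]
    ]


def search(query, **extra):
    """Query the instance.

    Raises urllib.error.HTTPError on failure, e.g. 403 when json is not
    listed under search.formats in settings.yml.
    """
    with urllib.request.urlopen(search_url(query, **extra), timeout=10) as resp:
        return parse_results(resp.read().decode("utf-8"))
```

Extra keyword arguments become query parameters, so a call such as `search("self-hosted metasearch", language="en", time_range="month")` passes SearXNG's standard search parameters straight through.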

6. Comparative Analysis: SearXNG vs. The Market

The search landscape of 2026 offers two primary paths for the privacy-conscious user: the sovereign, self-hosted route represented by SearXNG or the managed, premium model exemplified by Kagi.

SearXNG vs. Kagi: Sovereignty vs. Convenience

Choosing between these tools is essentially a trade-off between engineering time and financial investment.

- Economic Model: Kagi operates on a subscription-based model (typically starting at $5/month), which eliminates the incentive to monetize user data. SearXNG is free software, with your only costs being the VPS or hardware used for hosting (typically $5–$20/month).

- Reliability: Kagi utilizes official APIs and a proprietary index, ensuring consistent results without CAPTCHAs or blocking issues. SearXNG admins must often manage proxy rotation and anti-bot challenges to maintain high availability.

- Control: SearXNG offers absolute control over search sources, allowing users to toggle 70+ engines or block specific URLs. Kagi provides “Lenses” and domain ranking, but users are ultimately confined to Kagi’s managed ecosystem.

- Privacy Baseline: While Kagi promises no telemetry, SearXNG allows for a “Trust No One” architecture where the user owns the logs, the cache, and the network exit point.

Strategic Integration

For those who prefer a managed service for general browsing but require a private backend for research, a hybrid approach is common. You might use Kagi for daily tasks while maintaining a SearXNG Docker configuration for automated research agents. This ensures that your most sensitive or high-volume queries remain entirely decoupled from a commercial account.

7. Limitations and Maintenance Overhead

While SearXNG is a powerful tool, it is not a “set-and-forget” solution. In 2026, the “Sysadmin Tax” for maintaining an instance has increased alongside the complexity of the web.

- Scraping Fragility: Frequent website structure changes mean that SearXNG engine modules occasionally break. Administrators must regularly update their instances to benefit from community-contributed parsing fixes.

- Result Consistency: Because it aggregates from diverse sources, the relevancy of results can vary more than a tuned, single-index engine.

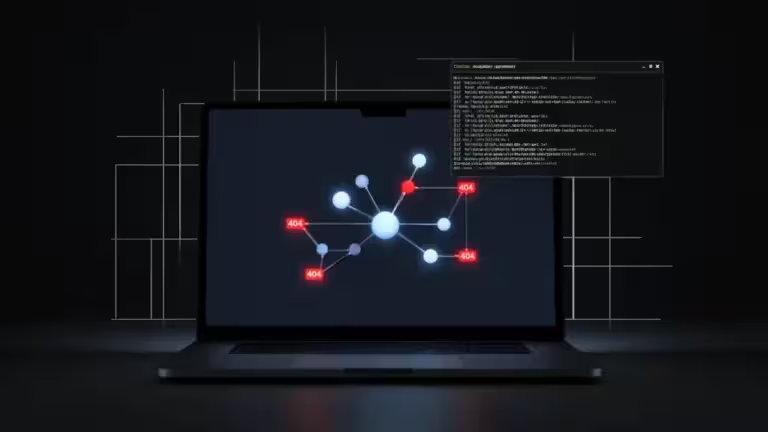

- Silent Failures: One of the most significant risks is a “silent data failure,” where an engine appears to be working but returns no results because it has been invisibly blocked by a provider like Google.

Insight: Successful SearXNG administration requires proactive monitoring. Without automated health checks for specific engines, you may find your “aggregated” search results dwindling down to a single source over time without realizing it.
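A basic health check can run a canary query through the JSON API and compare the engines that actually contributed results against the ones you expect. The sketch below inspects an already-parsed response. It assumes each result carries an `engines` list and that the top level has an `unresponsive_engines` list of `[engine, error]` pairs, as SearXNG's JSON output does at the time of writing; the expected engine names are hypothetical.

```python
def engine_health(response, expected_engines):
    """Classify expected engines as ok, erroring, or silently empty.

    response: parsed SearXNG JSON (a dict). expected_engines: names you
    expect to contribute results for a common canary query.
    """
    contributing = set()
    for result in response.get("results", []):
        contributing.update(result.get("engines", []))
    errors = {name: reason for name, reason in response.get("unresponsive_engines", [])}
    report = {}
    for name in expected_engines:
        if name in errors:
            report[name] = f"error: {errors[name]}"
        elif name in contributing:
            report[name] = "ok"
        else:
            # The "silent failure" case: no error reported, but no results either.
            report[name] = "silent: no results"
    return report
```

Run it on a schedule against a few stable canary queries and alert when an engine stays `silent` across several consecutive runs, rather than on a single empty response.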

8. Conclusion: The Future of Sovereign Retrieval

As we navigate 2026, the value of SearXNG has shifted from a simple privacy tool to a vital infrastructure component for the agentic web. It provides a necessary abstraction layer, shielding both humans and AI models from the tracking and biases of centralized search giants. While it demands a higher level of technical commitment than paid alternatives, the digital sovereignty it offers is unparalleled. In a future where information is increasingly synthesized rather than just searched, owning the retrieval layer is the only way to ensure the transparency and integrity of the data that feeds our digital minds.
