Fish Audio S2 Pro: How to Use a Voice Reference for High-Fidelity Voice Cloning

Fish Audio S2 Pro is a state-of-the-art multilingual text-to-speech model. Its asymmetric Dual-Autoregressive (Dual-AR) architecture pairs a 4B-parameter Slow AR model with a 400M-parameter Fast AR model to deliver high-fidelity voice cloning. While many TTS systems struggle with robotic cadences, S2 Pro’s primary strength lies in its In-Context Learning (ICL) capability, which lets it adopt a target voice identity through two distinct workflows:

- Instant External Cloning: Use a short 10–30 second recording of a real voice to reproduce its timbre and speaking style zero-shot, with no fine-tuning.

- Style-Driven Reference Generation: Bootstrap a reusable reference from natural language style tags, then “freeze” the best output as a permanent audio reference for future consistency.

This guide provides a technical deep dive into mastering these voice reference workflows, from preparing pristine samples to optimizing the semantic Slow AR and acoustic Fast AR transformers. Whether you are deploying locally via Conda for maximum stability or with Docker, this practical approach is the key to achieving professional-grade vocal synthesis.

1. What is Fish Audio S2 Pro?

Fish Audio S2 Pro is currently the state-of-the-art (SOTA) in multilingual text-to-speech, representing the most advanced multimodal model developed by Fish Audio. Trained on over 10 million hours of audio data covering more than 80 languages, it sets a new benchmark for natural, realistic, and emotionally rich speech generation.

As one of the Best Open-Source TTS Models available, S2 Pro outperforms many closed-source competitors in both accuracy and paralinguistic expression. Its primary strength lies in its Dual-Autoregressive (Dual-AR) architecture, which allows for zero-shot voice cloning and fine-grained emotional control via simple text tags.

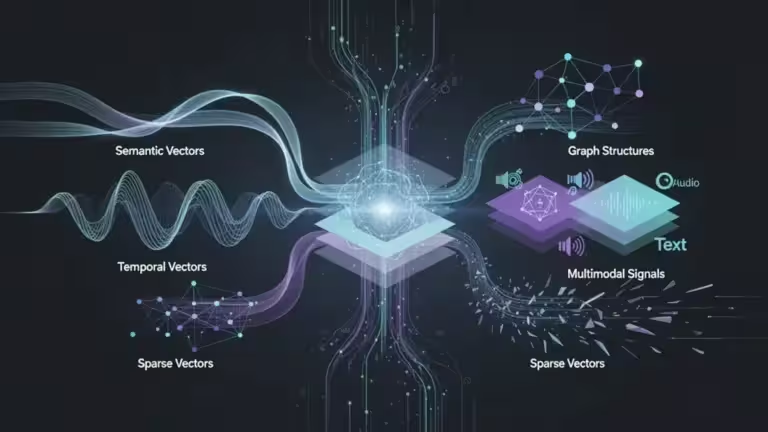

2. How Voice Referencing Works in S2 Pro

Voice referencing in S2 Pro is based on In-Context Learning (ICL). Unlike older systems that required hours of audio to “train” a specific voice model, S2 Pro uses a short audio snippet as a prompt to understand the “DNA” of a voice instantly.

Beyond ICL: S2 Pro also employs GRPO (Group Relative Policy Optimization) during post-training alignment. This technique uses multidimensional reward models (acoustic quality, speaker consistency, intelligibility) to fine-tune outputs, which differentiates it from pure ICL systems like VALL-E and contributes to its superior Audio Turing Test scores.

- The Semantic Map: The Slow AR model (4B parameters) predicts the primary semantic codebook, analyzing rhythm and phrasing across 80+ languages.

- The Acoustic Detail: The Fast AR model (400M parameters) generates the remaining 9 residual codebooks at each time step, capturing fine-grained texture—the rasp, breathiness, and specific timbre—of the speaker.

- Zero-Shot Cloning: By providing a 10–30 second sample, the model can generate new speech in that exact voice without any additional fine-tuning or model weights.

3. Setting Up Fish Speech Locally

For production-level stability and high-performance inference, running Fish Audio S2 Pro locally on your own hardware is the preferred approach. Docker is an option for containerized environments, but in my testing a Conda-based setup was more stable for GPU driver mapping and Python dependency management, and it made errors easier to troubleshoot. The Docker image will likely become more stable over time, since the project is very active and constantly evolving.

Hardware Prerequisites

- GPU: An NVIDIA GPU is required. Fish Audio officially recommends a minimum of 24GB VRAM for full bf16 inference with the S2-Pro model (4B Slow AR + 400M Fast AR).

- Quantization Options: With FP8 quantization (s2-pro-fp8), ~12–16GB is sufficient with minimal quality trade-off. For budget setups, NF4 4-bit quantization works on 16GB+ cards.

- Note: Tested locally on RTX hardware — actual requirements vary based on precision (bf16 vs FP8 vs INT4) and inference framework (SGLang, vLLM).

- Operating System: Linux is preferred, though Windows via WSL2 is supported.
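To see where these VRAM figures come from, here is a rough back-of-the-envelope estimate of the memory needed for the weights alone. It assumes the parameter counts quoted above and typical bytes-per-parameter values for each precision. It deliberately ignores the KV cache, activations, the audio codec, and framework overhead, which is why the recommended VRAM is noticeably higher than these numbers.

```python
# Rough weight-memory estimate; parameter counts are the ones quoted in this article.
SLOW_AR_PARAMS = 4.0e9   # 4B Slow AR
FAST_AR_PARAMS = 0.4e9   # 400M Fast AR

# Typical storage cost per parameter (assumption: no extra quantization metadata).
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "nf4": 0.5}


def weight_memory_gb(precision: str) -> float:
    """Return the approximate weight storage in GB (10^9 bytes)."""
    total_params = SLOW_AR_PARAMS + FAST_AR_PARAMS
    return total_params * BYTES_PER_PARAM[precision] / 1e9


for precision in BYTES_PER_PARAM:
    print(f"{precision}: ~{weight_memory_gb(precision):.1f} GB of weights")
```

Treat the result as a lower bound: if the weights alone come close to your card's capacity, inference will not fit.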

Installation Steps

- Clone the Repository:

git clone https://github.com/fishaudio/fish-speech.git

cd fish-speech

- Create the Environment:

conda create -n fish-speech python=3.12

conda activate fish-speech

# GPU installation (choose your CUDA version: cu126, cu128, cu129)

pip install -e .[cu129]

# CPU-only installation

pip install -e .[cpu]

# Default installation (uses PyTorch default index)

pip install -e .

# If you encounter an error during installation due to pyaudio, consider using the following command:

# conda install pyaudio

# Then run pip install -e . again

- Download Model Weights: Download the s2-pro checkpoints from HuggingFace and place them in the checkpoints/ directory.
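If you prefer to script the download, the sketch below uses `snapshot_download` from the `huggingface_hub` package. The repository id `fishaudio/s2-pro` and the `checkpoints/s2-pro` target folder are assumptions on my part; check the model page and the project README for the exact names before running it.

```python
from pathlib import Path

# Assumption: the Hugging Face repository id. Verify it on the model page.
REPO_ID = "fishaudio/s2-pro"


def checkpoint_dir(repo_id: str, root: str = "checkpoints") -> Path:
    """Map a repo id such as 'org/name' to 'checkpoints/name'."""
    return Path(root) / repo_id.split("/")[-1]


def download_checkpoints(repo_id: str = REPO_ID) -> Path:
    """Download the repository snapshot into the local checkpoints folder."""
    # Imported lazily so checkpoint_dir() works without the package installed.
    from huggingface_hub import snapshot_download

    target = checkpoint_dir(repo_id)
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target
```

Calling `download_checkpoints()` from the repository root fetches the files; gated repositories additionally require a Hugging Face login.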

For those looking to manage their local AI stack more broadly, you can integrate this with an Open WebUI Docker guide for a graphical interface or our vLLM Docker guide for high-load backend management.

4. Preparing a High-Quality Voice Reference

The quality of your output is directly dictated by the purity of your reference file. S2 Pro’s RVQ audio codec (10 codebooks) extracts acoustic features from this file: the Slow AR model predicts the primary semantic codebook, while the Fast AR generates the remaining 9 residual codebooks.

Reference Checklist

- Duration: Use a sample between 10 and 30 seconds (typically recommended). Samples shorter than 10s may lack sufficient prosodic data for accurate cloning.

- Audio Format: Use WAV 24-bit or FLAC at 44.1kHz or 48kHz. Avoid low-bitrate MP3s, as the model will interpret compression artifacts as a natural part of the voice.

- Cleanliness: The audio must be dry—no background music, reverb, or noise.

- The Zero-Normalization Rule: Do not normalize your audio to 0 dB full scale (0 dBFS). Aim for peaks around -3 dB; pushing the gain higher can cause digital clipping, which results in a “metallic” or “crunchy” synthetic output.

- Transcription: Always prepare an accurate text transcript of exactly what is said in the reference audio. This allows the model to align its semantic predictions with the acoustic signal.
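The measurable parts of this checklist (duration, sample rate, peak level) can be verified before you upload anything. The following is a minimal sketch using only the Python standard library. It assumes an uncompressed 16-bit or 24-bit PCM WAV file; the function names are my own and not part of Fish Speech. It cannot detect reverb or background noise, so you still need to listen to the file.

```python
import math
import wave


def inspect_reference(path: str) -> dict:
    """Measure duration, sample rate, and peak level (dBFS) of a PCM WAV file."""
    with wave.open(path, "rb") as wav:
        width = wav.getsampwidth()          # bytes per sample
        if width not in (2, 3):
            raise ValueError("this sketch only reads 16-bit or 24-bit PCM")
        rate = wav.getframerate()
        frames = wav.getnframes()
        raw = wav.readframes(frames)
    # WAV samples are little-endian signed integers, interleaved across channels.
    peak = max(
        (abs(int.from_bytes(raw[i:i + width], "little", signed=True))
         for i in range(0, len(raw), width)),
        default=0,
    )
    full_scale = 2 ** (8 * width - 1)
    peak_dbfs = 20 * math.log10(peak / full_scale) if peak else float("-inf")
    return {"duration_s": frames / rate, "sample_rate": rate, "peak_dbfs": peak_dbfs}


def check_reference(info: dict) -> list[str]:
    """Compare the measurements with the checklist and list any problems."""
    problems = []
    if not 10 <= info["duration_s"] <= 30:
        problems.append("duration outside 10-30 s")
    if info["sample_rate"] not in (44100, 48000):
        problems.append("sample rate is not 44.1 kHz or 48 kHz")
    if info["peak_dbfs"] > -3.0:
        problems.append("peak above -3 dB (risk of clipping)")
    return problems
```

An empty list from `check_reference(inspect_reference("my_voice.wav"))` means the file passes the numeric checks.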

5. Step-by-Step: Using a Voice Reference

The core of the Fish Audio S2 Pro workflow is the “Reference Master” strategy. There are two primary ways to establish a voice identity: using an existing recording or “architecting” a new one through text.

Method A: Cloning from an External File

- Upload: In the WebUI or API, upload your prepared 10–30s WAV file.

- Transcribe: Enter the Reference Transcript. Providing the exact text spoken in the audio is critical for the model to align its semantic codebook correctly.

- Input Target Text: Type the new content you want the voice to speak.

- Generate: The Slow AR (4B) and Fast AR (400M) models work together, using the reference audio as context to generate new speech with matching timbre and prosody.
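For API users, the four steps above boil down to a single request that pairs the reference audio with its transcript and adds the target text. The sketch below is an illustration under assumptions: the endpoint URL, the JSON field names (`text`, `references`, `audio`, `format`), and the base64 encoding of the audio are modeled on the request schema I have seen in the Fish Speech repository, and they may differ in your version. Check the project's API documentation before relying on them.

```python
import base64
import json
import urllib.request

# Assumption: a local API server listening at this address.
API_URL = "http://127.0.0.1:8080/v1/tts"


def build_tts_payload(ref_audio: bytes, ref_text: str, target_text: str) -> dict:
    """Pair the reference audio with its transcript and attach the new text."""
    return {
        "text": target_text,
        "references": [
            {
                # Assumption: the server accepts base64-encoded audio in JSON.
                "audio": base64.b64encode(ref_audio).decode("ascii"),
                "text": ref_text,
            }
        ],
        "format": "wav",
    }


def synthesize(payload: dict, url: str = API_URL) -> bytes:
    """POST the payload and return the raw audio bytes from the response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return response.read()
```

Keeping the payload builder separate from the network call makes it easy to inspect exactly what is sent, which helps when a clone sounds wrong because the transcript and audio do not match.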

Method B: Style-Driven Generation (Synthetic Bootstrapping)

If you don’t have a recording, you can bootstrap a reusable reference from style tags:

- Prompt: Use descriptive natural language (e.g., [deep gravelly voice], [professional female tone]).

- Iterate: Generate several takes until you find the “Perfect One”. Note that this process controls style and sampling, not a fully controlled vocal identity.

- Freeze: Export that specific output and re-import it as an External Audio Reference. This “freezes” the synthetic style, ensuring consistent timbre for all future generations.
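The “freeze” step is mostly file bookkeeping: keep the winning take together with the exact text it speaks, so both can be supplied as a reference pair later. Here is a small sketch of that idea. The `references/<voice_id>/` layout and the file names are a hypothetical convention of mine, not something Fish Speech requires.

```python
import shutil
from pathlib import Path


def freeze_reference(take_wav: str, transcript: str, voice_id: str,
                     root: str = "references") -> Path:
    """Copy the chosen take and its transcript into <root>/<voice_id>/."""
    voice_dir = Path(root) / voice_id
    voice_dir.mkdir(parents=True, exist_ok=True)
    # Store the audio and the text it speaks side by side (hypothetical names).
    shutil.copyfile(take_wav, voice_dir / "reference.wav")
    (voice_dir / "reference.txt").write_text(transcript.strip(), encoding="utf-8")
    return voice_dir
```

From then on, treat the frozen pair exactly like an external recording in Method A.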

6. Optimizing Output Quality

To achieve studio-grade results with the Dual-AR architecture, you must balance the Slow AR and Fast AR transformers through specific inference parameters.

- Temperature (0.7 – 0.8): This is generally considered a good starting range for balanced output. Lower values (< 0.5) may result in more deterministic but potentially flat speech, while higher values (> 0.9) can introduce variability that may affect voice consistency. Note: Optimal settings may vary depending on your specific use case and reference audio quality.

- Top_P Sampling: Helps control the diversity of generated tokens by focusing on the most probable acoustic sequences.

- Inline Emotion Tags: S2 Pro supports 15,000+ free-form text descriptions for fine-grained control. Examples include: [whisper in small voice], [professional broadcast tone], [laughing], [pause], [emphasis], [angry], [singing], [volume up], [echo], and many more.

- SGLang Acceleration: For real-time applications, use the SGLang server to leverage inference optimizations like Continuous Batching and Paged KV Cache. Note: Benchmark figures such as TTFA ~100ms and RTF 0.195 were measured on an NVIDIA H200 GPU; performance will vary based on your hardware.
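To build intuition for what Temperature and Top_P do, here is a toy illustration on four made-up token scores. It shows the generic sampling technique only and is not S2 Pro's actual sampler: temperature rescales the scores before the softmax, and top-p keeps the smallest set of most-probable tokens whose combined probability reaches the threshold.

```python
import math


def apply_temperature(logits: list[float], temperature: float) -> list[float]:
    """Softmax over logits divided by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)                      # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs: list[float], top_p: float) -> list[float]:
    """Keep the most probable tokens until their mass reaches top_p, then renormalize."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = set(), 0.0
    for i in order:
        kept.add(i)
        mass += probs[i]
        if mass >= top_p:
            break
    return [probs[i] / mass if i in kept else 0.0 for i in range(len(probs))]


logits = [2.0, 1.0, 0.5, -1.0]  # toy scores for four candidate tokens
for temperature in (0.5, 0.8, 1.2):
    probs = apply_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in top_p_filter(probs, 0.9)])
```

Lower temperatures concentrate probability on the top token (flatter, more deterministic speech), while higher temperatures and a higher Top_P let less likely tokens through (more variation, less consistency).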

7. Common Mistakes and Fixes

Even with a high-fidelity model like Fish Audio S2 Pro, small configuration errors can lead to degraded audio quality. Below are the most frequent issues encountered during local inference and how to resolve them.

| Issue | Likely Cause | Solution |
|---|---|---|
| Metallic/Robotic Sound | Audio clipping or low sample rate. | Ensure reference peaks are at -3 dB. Use 44.1kHz/48kHz WAV files. |
| Voice “Glitching” | Temperature set too high (> 0.9). | Lower Temperature to the 0.7 – 0.8 range for stability. |
| Inconsistent Timbre | Reference sample is too short (< 10s). | Provide a 10–30s sample with a clear transcript for better alignment. |
| Loss of Identity | Text context is too long for one pass. | Break long scripts into smaller paragraphs using the same reference. |
| Slurred Speech | Top_P is too low, restricting tokens. | Increase Top_P (try 0.9 or 0.95) to allow natural phonetic variance. |
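For the “Loss of Identity” fix, splitting a long script can be automated. The helper below is a simple sketch that groups whole sentences into chunks under a character budget; each chunk is then generated separately with the same reference. The 400-character default is an arbitrary starting point of mine, not a documented limit.

```python
import re


def split_script(text: str, max_chars: int = 400) -> list[str]:
    """Group whole sentences into chunks of at most max_chars characters."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = sentence
        else:
            # A single sentence longer than max_chars is kept whole.
            current = candidate
    if current:
        chunks.append(current)
    return chunks
```

Splitting on sentence boundaries rather than at a fixed length avoids cutting a phrase in half, which would otherwise produce unnatural prosody at the joins.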

8. Why Dual-AR Matters: The Tech Behind the Guide

The success of your voice cloning relies on the Slow AR / Fast AR architecture. You do not need to understand the underlying math to follow this guide, but knowing how each component handles data helps with troubleshooting:

- The Slow AR (4B parameters): Operates along the time axis, predicting the primary semantic codebook that dictates rhythm and prosody across 80+ languages. If the cadence feels wrong, this component is struggling with text-to-semantic mapping.

- The Fast AR (400M parameters): Generates the remaining 9 residual codebooks at each time step to reconstruct fine acoustic detail. If the voice sounds “flat” or “thin,” this component is not getting enough acoustic detail from your reference file.
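The division of labor between the two components can be pictured as two nested loops. The toy sketch below uses random numbers in place of real models, so it only demonstrates the control flow described above: one semantic token per time step from the Slow AR, then 9 residual tokens for that same step from the Fast AR. The codebook size and all function names are hypothetical.

```python
import random

NUM_CODEBOOKS = 10    # 1 semantic + 9 residual, as described in this article
CODEBOOK_SIZE = 1024  # hypothetical vocabulary size, for illustration only


def slow_ar_step(history: list[list[int]], rng: random.Random) -> int:
    """Stand-in for the Slow AR: choose the semantic token for the next frame."""
    # A real model would condition on the text, the reference, and the history.
    return rng.randrange(CODEBOOK_SIZE)


def fast_ar_step(semantic_token: int, rng: random.Random) -> list[int]:
    """Stand-in for the Fast AR: fill in the 9 residual tokens for one frame."""
    residuals = []
    for _ in range(NUM_CODEBOOKS - 1):
        # A real model would condition on semantic_token and earlier residuals.
        residuals.append(rng.randrange(CODEBOOK_SIZE))
    return residuals


def generate(num_frames: int, seed: int = 0) -> list[list[int]]:
    """Produce num_frames frames, each holding one token per codebook."""
    rng = random.Random(seed)
    frames: list[list[int]] = []
    for _ in range(num_frames):                  # outer loop: time axis (Slow AR)
        semantic = slow_ar_step(frames, rng)
        residuals = fast_ar_step(semantic, rng)  # inner loop: codebook axis (Fast AR)
        frames.append([semantic, *residuals])
    return frames
```

This also explains the troubleshooting split: timing and phrasing problems originate in the outer loop, while texture problems originate in the inner one.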

9. License & Legal Framework

Understanding the licensing terms is essential before deploying Fish Audio S2 Pro in any project. The model is released under the FISH AUDIO RESEARCH LICENSE (Last Updated: March 7, 2026), which establishes clear boundaries between permitted and restricted uses.

✅ Permitted Uses (Free of Charge)

The license grants a non-exclusive, worldwide, royalty-free license for:

- Research Purpose: Academic or scientific advancement not primarily intended for commercial advantage or monetary compensation.

- Non-Commercial Purpose: Personal use (hobbyist), evaluation, testing, or any purpose not directed toward generating revenue.

❌ Commercial Use Requires Separate License

Any “Commercial Purpose” requires a written license agreement from Fish Audio. This includes activities primarily intended for:

- Creating, modifying, or distributing products/services (including hosted services or APIs)

- Internal business operations of your organization

- Any use connected to revenue-generating products or services (directly or indirectly)

Important: Running the model on local infrastructure does not exempt you from licensing obligations if used commercially. Offline deployment is still subject to these terms.

⚠️ Critical Restrictions

- No Foundational Model Training: You may not use Fish Audio Materials to create or improve any foundational generative AI model (excluding Derivative Works of S2 Pro itself).

- Attribution Required: If distributing Fish Audio Materials or Derivative Works, you must:

  - Include a copy of this license agreement

  - Retain the attribution notice: “This model is licensed under the Fish Audio Research License, Copyright © 39 AI, INC. All Rights Reserved.”

  - Prominently display “Built with Fish Audio” on related websites, user interfaces, blog posts, or documentation

- Acceptable Use Policy: Your use must comply with applicable laws and Fish Audio’s Acceptable Use Policy (incorporated by reference).

📞 Obtaining a Commercial License

To obtain commercial rights, contact Fish Audio directly:

- Website: https://fish.audio

- Email: business@fish.audio

⚖️ Legal Jurisdiction & Liability

- This Agreement is governed by the laws of the United States and the State of California.

- Fish Audio provides the Materials “AS IS” without warranties of any kind.

- Fish Audio reserves the right to terminate licenses for violations and will take action against unauthorized commercial use.

💡 Note on Licensing: Due to the terms of the Fish Audio Research License, I am currently not allowed to publish generated audio samples on a monetized website (e.g. with advertising).

For this reason, no audio examples are included in this article. I have contacted Fish Audio to request permission for broader usage, including publishing samples on this site and for potential use in audio translations on platforms such as YouTube.

💡 Fish Audio S2 Pro vs Alternatives: Quick Comparison

When choosing a TTS solution, understanding the trade-offs between open-source and closed-source options is critical:

| Feature | Fish Audio S2 Pro | ElevenLabs | Other Open-Source |
|---|---|---|---|
| Self-hosting | ✅ Yes (open-source) | ❌ No (Web, API only) | ✅ Varies by model |
| Voice quality | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ to ⭐⭐⭐⭐ |
| Languages supported | 80+ | 32 (standard) / 70+ (v3) | Often English-focused |
| Zero-shot cloning | ✅ Yes (10–30s) | ✅ Yes | ❌ Often requires training |

💡 Note on Voice Quality: While both models achieve industry-leading results, ElevenLabs retains a slight edge on nuanced English voices (especially emotional range). Fish Audio S2 Pro excels in multilingual support and zero-shot cloning flexibility.

However, it is important to stress that any direct comparison between Fish Audio S2 Pro and ElevenLabs requires a rigorous and time-consuming evaluation. A proper benchmark must account for multiple factors, including voice consistency across long generations, available tooling and workflows, latency, and per-language quality variations.

👉 In practice, this makes comparisons highly complex and context-dependent. Many online benchmarks tend to oversimplify this analysis, which is why results should always be interpreted with caution.

🎯 Recommendation by Use Case

- High-volume production / Privacy-sensitive: Fish Audio S2 Pro (self-hosted), for full control and no API costs.

- Quick prototyping / Low volume: ElevenLabs, with no setup required and pay-as-you-go pricing.

- Budget hobbyists: Explore other open-source models, but expect trade-offs in quality and language support.

💡 Key Insight: Fish Audio S2 Pro offers enterprise-grade voice cloning with the flexibility of self-hosting—ideal for creators who need consistent, high-fidelity results without recurring API fees.

FAQ

- Can I use a text prompt to create a voice without an audio file? You can generate a voice style via text tags (Method B), but for consistent results, you must export that output and reuse it as an audio reference.

- Why is my cloned voice sounding metallic? This is often caused by digital clipping in the source file. Ensure your reference audio is not normalized to 0 dB; keep it at -3 dB for the best results.

- Does Fish Audio S2 Pro support multi-speaker cloning? Yes. You can upload a reference audio containing multiple speakers, and the model processes each speaker’s features via the <|speaker:i|> token. This allows you to control which speaker performs which part of the dialogue within a single generation pass, without needing separate reference files for each voice.
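For the multi-speaker case, the target text needs a speaker token in front of each line. The helper below is a minimal sketch that builds such a script from a list of turns. The token format comes from the answer above, but the exact placement and spacing the model expects are assumptions; check the official documentation before using it.

```python
def format_dialogue(turns: list[tuple[int, str]]) -> str:
    """Prefix each line with a <|speaker:i|> token for its speaker index."""
    return "".join(f"<|speaker:{index}|>{line.strip()}" for index, line in turns)


script = format_dialogue([
    (0, "Did you finish the recording?"),
    (1, "Almost, I need one more take."),
])
print(script)
```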
