Reliability and Contextual Drift: Why LLMs Lose Track in Long Contexts

The evolution of Large Language Models (LLMs) in 2026 is defined by an aggressive race toward ever-larger context windows. With models now capable of processing hundreds of thousands—even millions—of tokens, the promise is compelling: the ability to “interact” with entire codebases or analyze massive document sets in a single pass.

However, this technical leap masks a more complex operational reality. Many users and developers are facing a paradox: the longer the context, the less reliable the output appears to be. This phenomenon, commonly referred to as “drift,” does not reflect a loss of memory in the literal sense. Instead, it reveals a growing inability of the model to maintain coherence and prioritize relevant information over time.

The challenge is no longer just about raw performance—as highlighted in modern inference benchmarks—but about the model’s capacity to remain focused on critical signals within an increasingly saturated information space. Understanding why an AI “loses track” requires a deeper look into attention mechanisms and a clear distinction between structural limitations and operational misuse.

II. Anatomy of Drift: From Academic Theory to Operational Reality

To properly assess reliability, terminology must be clarified. In industry discussions, the term “drift” is often used loosely, yet it encompasses several distinct technical phenomena that must be separated.

1. Classical Drift: Model, Data, and Concept Drift

These categories describe how models evolve over time, independently of any specific interaction:

- Data Drift: The statistical distribution of input data in production diverges from the training dataset.

- Concept Drift: The relationship between input and output changes over time. For example, predictive models trained on 2024 market trends may become invalid in 2026 due to macroeconomic shifts or technological disruptions.

- Model Drift: Often linked to silent updates from API providers. A model may degrade on specific tasks (e.g., coding) after being realigned for safety or generalization improvements. This is a critical factor in enterprise AI reliability and long-term system stability.

2. Contextual Drift: An Operational Phenomenon

Unlike the previous categories, contextual drift is not yet a formally standardized academic term. It is primarily used by practitioners to describe a loss of coherence within a single session.

This is not caused by changes in model weights, but by a form of latent space contamination. As the context window fills, the model must process an increasingly dense history. Each user input, each nuance, and even each prior model response contributes to a growing signal-to-noise imbalance.

In extended sessions, this accumulation saturates the attention mechanism, leading to:

- Circular reasoning

- Loss of instruction fidelity

- Hallucinated references to earlier (non-existent) context

At this stage, the model does not “forget”—it becomes statistically overwhelmed.

III. The Real Cause: Prioritization vs Memory

A common misconception is that LLMs “lose memory” as conversations grow longer. Technically, this is incorrect. Within the Transformer architecture, all tokens inside the context window remain accessible to the attention mechanism.

The issue is not storage—it is signal prioritization.

1. The “Lost in the Middle” Effect

The seminal study “Lost in the Middle: How Language Models Use Long Contexts” (Liu et al., 2023) highlights a structural weakness of LLMs: retrieval performance follows a U-shaped curve as a function of where the relevant information sits in the context.

- High accuracy for information at the beginning (initial instructions)

- High accuracy for information at the end (recent exchanges)

- Significant degradation for information located in the middle of the context

In dense contexts, the attention mechanism fails to properly weight critical signals buried among thousands of tokens. The model still “has” the information—but it no longer assigns it sufficient importance.

2. Information Noise and Bias Accumulation

As context length increases, so does the risk of informational pollution:

- Code analysis scenario: Injecting multiple files into context can degrade output quality. The model may mix function versions or suggest outdated dependencies simply because they appear somewhere in the prompt.

- Self-reinforcement loop: A minor reasoning error introduced mid-session becomes part of the next input. The model then builds on its own flawed outputs, amplifying the drift over time.

This recursive degradation is closely related to failure patterns observed in agentic systems, where feedback loops can lead to instability (see: an audit of bugs in AI agents).

The conclusion is clear: increasing token capacity without improving attention density is ineffective. This trade-off between volume and relevance is also central to modern discussions on inference efficiency and system design (inference economics in 2026).

IV. Benchmarking Drift: Measuring the Loss of Priority

To move beyond subjective impressions (“the model feels less accurate”), engineers rely on structured evaluation protocols to quantify contextual degradation.

1. Needle in a Haystack

The “Needle In A Haystack” test has become a standard benchmark for long-context LLMs.

- Methodology: A specific factual statement (the “needle”) is inserted at a defined depth within a large, mostly irrelevant text corpus (the “haystack”). The model is then asked to retrieve it.

- Expected outcome: A robust model should retrieve the information regardless of its position.

- Observed reality: Retrieval accuracy often drops when the needle is located in the middle of the context window, and the size of the drop varies with the model and the context length.

These results are often visualized as heatmaps, where reliability drops sharply in central regions—empirical confirmation of the “Lost in the Middle” effect.
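The methodology above can be sketched in a few lines. This is a minimal illustration, not the reference benchmark: the function names, the filler text, and the substring-based scoring are all assumptions made for the example, and the actual model call is left out.

```python
def build_haystack(filler_sentences, needle, depth):
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences)


def retrieval_hit(model_answer, expected):
    """Crude scoring (an assumption): does the expected fact appear in the answer?"""
    return expected.lower() in model_answer.lower()


# Hypothetical filler and needle; a real run would use a much larger corpus.
filler = [f"Filler sentence number {i}." for i in range(10)]
needle = "The access code for the archive is 7421."
prompts = {d: build_haystack(filler, needle, d) for d in (0.0, 0.25, 0.5, 0.75, 1.0)}
```

Sending each prompt to the model and recording `retrieval_hit` per depth and per context length gives the grid that the heatmaps are drawn from.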

2. Recursive Degradation in Real Usage

Beyond synthetic benchmarks like LongBench, contextual drift is highly visible in real-world usage:

- After 40–50 turns in tools like ChatGPT or Claude, models may:

  - Become repetitive

  - Ignore earlier formatting constraints

  - Introduce fabricated references to prior messages

- Technical explanation: The model treats its previous outputs as valid context. Any embedded inaccuracies propagate and intensify over time.

This behavior reflects a fundamental limitation of autoregressive systems: they lack a built-in mechanism for truth correction once noise enters the context.
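One symptom listed above, repetition, is easy to measure from the outside. The sketch below scores how much of a response reuses word trigrams from the previous one; the function names and the choice of trigrams are assumptions for illustration, not an established metric.

```python
def ngrams(text, n=3):
    """Set of word n-grams in `text`, lowercased."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def repetition_score(previous, current, n=3):
    """Share of n-grams in `current` already present in `previous` (0.0 to 1.0)."""
    current_grams = ngrams(current, n)
    if not current_grams:
        return 0.0
    return len(current_grams & ngrams(previous, n)) / len(current_grams)
```

Tracking this score turn by turn gives a rough signal: a value that keeps climbing late in a session suggests the model is feeding on its own earlier outputs.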

3. Local Testing: Controlled Environments

Developers seeking to analyze these behaviors without relying on proprietary APIs can test locally using tools such as:

- Ollama

- vLLM

A controlled setup (e.g., one combining MCP, Ollama, vLLM and Open WebUI) allows manual tuning of parameters such as Ollama’s num_ctx (context size), making it possible to observe when coherence begins to degrade.

This approach is particularly useful for comparing models (e.g., Llama, Mistral) and identifying task-specific limits in extraction, reasoning, or long-form synthesis.
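As a rough sketch of such a test, the snippet below builds the same request for several context sizes using Ollama's local HTTP API, where `num_ctx` is passed under `options`. The model name and prompt are placeholders, and the `generate` helper is only defined here, not called, since it needs a running Ollama server.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_request(model, prompt, num_ctx):
    """Build a non-streaming generate payload with an explicit context size."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,
        "options": {"num_ctx": num_ctx, "temperature": 0.0},
    }


def generate(payload, url=OLLAMA_URL):
    """Send the payload to a running Ollama server and return the generated text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]


# Same prompt, increasing context sizes; "llama3" is a placeholder model name.
sweep = [build_request("llama3", "Summarize the document.", n) for n in (2048, 8192, 32768)]
```

Keeping the prompt and temperature fixed while only `num_ctx` changes makes it easier to attribute any change in output quality to the context size itself.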

V. Technical Solutions: Reducing Saturation, Not Just Increasing Size

Addressing contextual drift is not about endlessly expanding context windows. The current engineering focus is on improving signal quality and attention efficiency. Several approaches coexist, each with clear trade-offs.

1. RAG (Retrieval-Augmented Generation): Controlled Context Injection

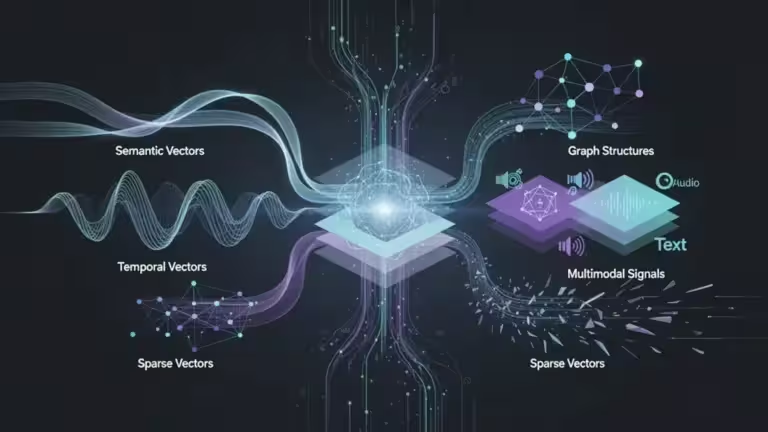

RAG is often presented as the primary alternative to brute-force long context. Instead of feeding 100,000 tokens into the model, a retrieval system selects only the most relevant fragments.

- Principle: Query a vector database and inject a curated subset of documents into the prompt.

- Benefit: Significant reduction in attention saturation.

- Limitation: Drift is not eliminated—it is shifted upstream. If retrieval is inaccurate or incomplete, the model will still produce incorrect outputs.

For a deeper architectural perspective, see:

- Types of vectors in modern AI

- Vector databases for AI and semantic memory

- Context packing vs RAG with Gemini 3

In practice, RAG improves precision but introduces a dependency on retrieval quality and embedding relevance.
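To make the principle concrete, here is a toy retrieval step. It is a sketch under strong assumptions: a bag-of-words count vector stands in for a real embedding model, an in-memory list stands in for a vector database, and all function names are invented for the example.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: word counts (a stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query."""
    query_vec = embed(query)
    return sorted(chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True)[:k]


def build_prompt(query, chunks, k=2):
    """Inject only the retrieved chunks, not the whole corpus."""
    context = "\n".join(retrieve(query, chunks, k))
    return f"Context:\n{context}\n\nQuestion: {query}"
```

The limitation discussed above is visible here: whatever `retrieve` fails to rank in the top k never reaches the model, so a weak similarity function silently caps answer quality.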

2. Transformer Optimizations: Efficiency vs Intelligence

When long-context processing is unavoidable (e.g., legal documents, large codebases), several optimizations attempt to improve attention handling.

- Flash Attention (Dao et al., 2022)

  - Optimizes GPU memory access patterns to accelerate attention computation

  - Enables larger contexts on fixed hardware

  - Limitation: Improves efficiency, not reasoning quality

- RoPE Scaling (Rotary Positional Embeddings)

  - Extends context length beyond training limits

  - Improves positional generalization

  - Limitation: Does not resolve mid-context degradation

These techniques are closely tied to hardware constraints and inference performance (see: vLLM vs TensorRT-LLM and best GPU for local AI).
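One common form of RoPE scaling is linear position interpolation: positions are divided by a scale factor so that a longer sequence maps back into the range of rotation angles seen during training. The sketch below assumes the usual rotary frequency schedule with base 10000; the function name and the tiny dimension are illustrative, and production implementations differ in detail.

```python
def rope_angles(position, dim, base=10000.0, scale=1.0):
    """Rotation angles for one position, one per pair of dimensions.

    scale > 1 compresses positions (linear interpolation), so position
    `p` with scale `s` gets the angles of position `p / s` without scaling.
    """
    return [(position / scale) * base ** (-2 * i / dim) for i in range(dim // 2)]


# With scale=2, position 8192 lands on the angles of position 4096.
extended = rope_angles(8192, dim=8, scale=2.0)
original = rope_angles(4096, dim=8)
```

This shows why the technique extends reach without adding understanding: it only remaps where tokens appear to sit, and does nothing about how attention is distributed across them.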

3. Alternative Architectures: State Space Models (SSM)

The most significant paradigm shift comes from State Space Models (SSM), particularly Mamba.

- Core idea: Replace quadratic attention (O(N²)) with linear sequence processing

- Advantage: Scales efficiently to very long sequences without attention saturation

- Trade-off: Context is compressed into a fixed-size hidden state

This introduces a fundamental constraint: the model must decide what to retain and what to discard. Unlike Transformers, which keep all tokens accessible, SSMs impose a form of selective memory.
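The fixed-size state constraint can be illustrated with a toy recurrence. This is deliberately not Mamba: it uses a single scalar state and a constant decay, whereas selective SSMs make these parameters depend on the input. The function name and values are assumptions for the example.

```python
def scan(inputs, decay=0.5):
    """Fold a sequence into one scalar state: h_t = decay * h_{t-1} + x_t."""
    state = 0.0
    for x in inputs:
        state = decay * state + x
    return state


early_signal = scan([1, 0, 0, 0])  # signal at the first step
late_signal = scan([0, 0, 0, 1])   # same signal at the last step
```

The cost is one update per token, so it grows linearly with sequence length, but the same signal leaves a weaker trace in the state when it arrives early. Whatever is not kept in the state cannot be looked up again later.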

As of 2026, these architectures are promising but not universally superior—especially for complex reasoning tasks requiring precise token-level recall.

VI. Practical Guide: How to Prevent Contextual Drift

Mitigating contextual drift is less about choosing the “best model” and more about applying disciplined context engineering practices.

1. Segmentation and Periodic Summarization

Long conversations accumulate noise with every turn. The solution is to actively reset and compress context.

- Pivot summarization technique: Every 10–15 iterations, ask the model to summarize key decisions and facts. Start a new session using this summary as the initial context.

- Sliding window strategy: Retain only critical system instructions and recent exchanges instead of the full history.

This approach maintains signal clarity while preventing attention overload.
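Both techniques can be combined in one small helper. The sketch assumes the chat history is a list of `{"role", "content"}` dictionaries, a common convention in chat APIs; the function name, the default window size, and the wording of the summary message are illustrative choices. Producing the summary itself (by asking the model) is left out.

```python
def compact_history(messages, keep_last=6, summary=None):
    """Keep system messages, an optional summary, and the most recent exchanges."""
    system = [m for m in messages if m["role"] == "system"]
    dialogue = [m for m in messages if m["role"] != "system"]
    recent = dialogue[-keep_last:] if keep_last > 0 else []
    if summary:
        # The summary replaces the dropped turns as compact context.
        system.append({"role": "system", "content": f"Summary of earlier discussion: {summary}"})
    return system + recent
```

Calling this every 10–15 turns with a fresh summary keeps the prompt size roughly constant instead of letting it grow with the session.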

2. State-Based Interaction Instead of Continuous Chat

Contextual drift is particularly severe in web-based chat interfaces such as ChatGPT or Claude, where history management is opaque.

To regain control:

- “Snapshot & Wipe” method:

  - Capture the current state of the task

  - Reset the session

  - Reinject a clean, structured context

- API-driven workflows: Using APIs or playground environments allows:

  - Persistent system prompts

  - Controlled temperature (e.g., ~0.2 for stability)

  - Explicit context management

- Local inference control: Tools like Ollama or vLLM enable direct control over the context size (num_ctx in Ollama, max_model_len in vLLM), reducing uncontrolled context growth.

3. Structuring Information with Semantic Boundaries

LLMs perform better when information is explicitly structured.

- Use tags such as: <technical_context>, <source_data>

- Benefits:

  - Improves attention segmentation

  - Reduces ambiguity between instructions and data

  - Mitigates “Lost in the Middle” effects
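A small helper makes this kind of tagging systematic. The `technical_context` and `source_data` tag names come from the list above; the `task` tag and the function names are assumptions added for the example.

```python
def tagged(tag, content):
    """Wrap content in an opening and closing tag, each on its own line."""
    return f"<{tag}>\n{content.strip()}\n</{tag}>"


def build_structured_prompt(technical_context, source_data, task):
    """Assemble a prompt where each kind of information has its own block."""
    return "\n\n".join([
        tagged("technical_context", technical_context),
        tagged("source_data", source_data),
        tagged("task", task),  # hypothetical tag name, not from the article
    ])
```

For example, `build_structured_prompt("Python 3.12", "id,total\n1,40", "Sum the totals.")` yields three clearly delimited blocks, with the instruction kept apart from the data it applies to.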

4. Prioritize Density Over Volume

More data does not mean better performance.

- Pre-filter inputs: Remove redundancy and irrelevant content

- Instruction placement: Place critical instructions at the end of the prompt (recency bias optimization)

This ensures the model assigns maximum weight to the actual task.
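Both rules can be applied mechanically before a prompt is sent. The sketch below removes exact repeats only (ignoring case and spacing), which is the simplest possible pre-filter; the function names are invented for the example, and real pipelines would likely add relevance filtering on top.

```python
def dedupe(paragraphs):
    """Drop empty paragraphs and repeats of an earlier one, ignoring case and spacing."""
    seen = set()
    unique = []
    for paragraph in paragraphs:
        key = " ".join(paragraph.lower().split())
        if key and key not in seen:
            seen.add(key)
            unique.append(paragraph.strip())
    return unique


def assemble(paragraphs, instruction):
    """Put the filtered material first and the instruction last."""
    return "\n\n".join(dedupe(paragraphs) + [instruction])
```

Placing the instruction after the material means it sits in the most recent part of the context, which is where the U-shaped curve described earlier suggests the model attends best.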

5. Monitoring and Drift Detection

In production systems, drift must be actively monitored.

- Compare outputs against source data

- Detect hallucinations early

- Implement validation layers when possible
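A very simple validation layer is to check that figures quoted in an output actually exist in the source material. This sketch only covers numbers and is an assumption about what a first check might look like, not a complete hallucination detector; the function name and regular expression are illustrative.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(output, source):
    """Return numbers that appear in the output but nowhere in the source."""
    known = set(NUMBER.findall(source))
    return sorted(set(NUMBER.findall(output)) - known)
```

An empty result does not prove the output is correct, but a non-empty one is a cheap early warning that the model has introduced a figure of its own.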

Controlled environments (e.g., a setup combining MCP, Ollama, vLLM and Open WebUI) are useful for stress-testing context limits before deployment.

VII. FAQ: Why Does ChatGPT “Forget” What You Said?

Why does my AI become repetitive in long conversations?

This is a typical symptom of attention saturation. The model increasingly overweights its own previous outputs, which dominate the context distribution. As a result, it falls into repetitive response patterns that it statistically reinforces at each turn.

Does increasing the context window solve the problem?

No. Expanding the context window without improving prioritization is equivalent to increasing storage capacity without improving indexing. The model can “see” more information, but its ability to retrieve the right signal at the right time does not scale proportionally.

What is the difference between long context and “infinite memory”?

- Long context: A form of working memory where all tokens are processed simultaneously.

- “Infinite memory”: A misleading term often used to describe hybrid systems (e.g., RAG) that retrieve information from external storage when needed.

These are fundamentally different mechanisms: the first is capacity inside the model’s own forward pass, the second is a system built around the model.

VIII. Toward Intelligent Attention Management

Contextual drift represents the current frontier of LLM reliability. It highlights a critical shift: raw compute power and ever-larger context windows are no longer sufficient.

The key metric in 2026 is not how much a model can process—but how effectively it can prioritize.

- Long context without attention control leads to noise saturation

- RAG improves precision but introduces retrieval dependencies

- Alternative architectures like Mamba offer efficiency but enforce selective memory

The implication is clear: the future of LLM reliability lies in attention management, not brute-force scaling.

In practice, contextual drift is often the hidden cost of user-friendly chat interfaces. Overcoming it requires a shift toward:

- Structured context engineering

- API-driven workflows

- Controlled inference environments

The end of the “context size race” marks the beginning of a more mature phase in AI systems design—one focused on information density, signal clarity, and deterministic behavior.

The Rise of Hybrid Architectures (Transformer + SSM)

Beyond software optimization, a new generation of models is striving to merge the best of both worlds. By alternating Transformer layers (for reasoning precision) with SSM layers like Mamba (for linear context management), these hybrid architectures aim to be more resistant to drift by design. The goal is to process massive data volumes while maintaining a stable “train of thought,” paving the way for complex work sessions where the AI is far less likely to lose its guiding thread.
