Testing MCP servers: protocol validation, transport correctness, and interoperability

The Model Context Protocol (MCP) has rapidly shifted from a nascent proposal to a critical standard for connecting Large Language Models (LLMs) to local and remote data sources. However, as architects and senior developers integrate MCP into production environments—whether for agentic IDE architectures or enterprise data connectors—the challenge shifts from basic connectivity to strict protocol conformity. Testing an MCP server is not merely about verifying execution; it is about ensuring deterministic behavior across diverse client implementations and transport layers.

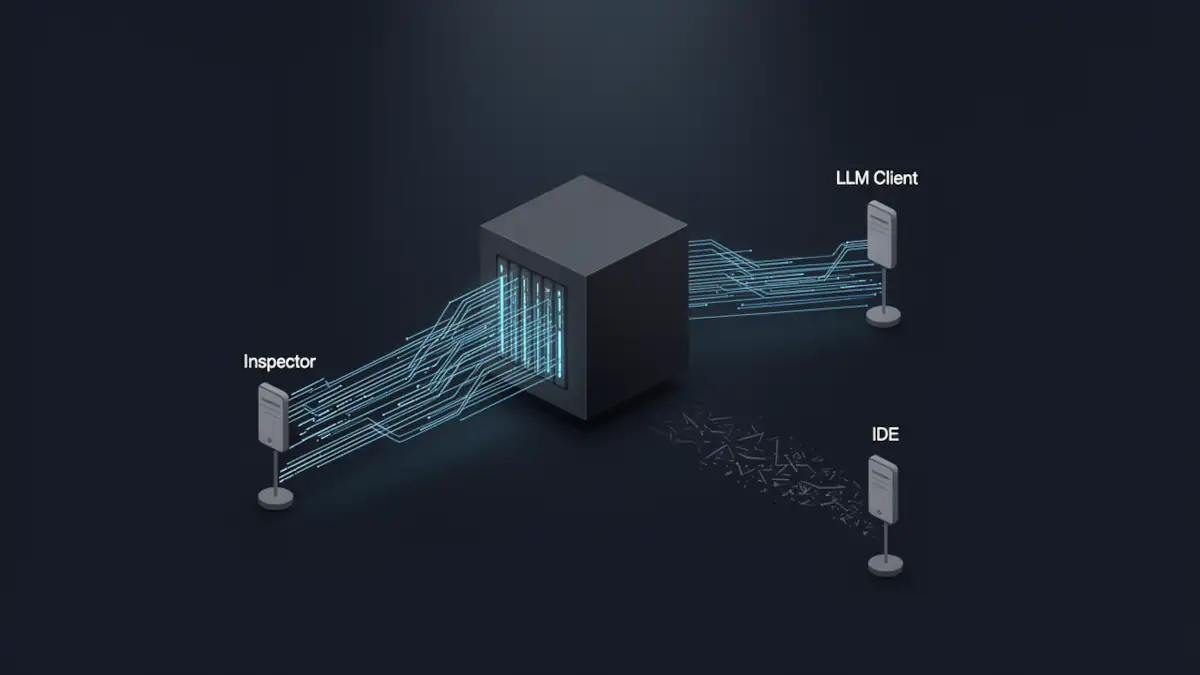

Protocol validation with the MCP Inspector

The primary tool for initial validation is the MCP Inspector. Unlike a standard LLM client, the Inspector functions as a clinical diagnostic environment, isolating the server’s responses from the probabilistic nature of model-generated prompts.

When the server command carries its own flags, invoke the Inspector with the argument separator (--). Everything after the separator is forwarded to the server command instead of being parsed as an Inspector flag:

```bash
npx @modelcontextprotocol/inspector -- node build/index.js
```

Use -- whenever the startup command is complex, so that argument forwarding stays unambiguous. The Inspector lets architects manually trigger list_tools, list_resources, and call_tool requests (tools/list, resources/list, and tools/call on the wire), verifying that the server adheres to the official MCP architecture without the overhead of an active LLM session.
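The requests the Inspector sends are ordinary JSON-RPC 2.0 messages, so it can help to see their shape before reading Inspector output. The following is a minimal sketch, not the Inspector's actual implementation: createRequestFactory is a hypothetical helper, and the echo tool with its text argument is a placeholder for one of your own tools.

```typescript
// Minimal JSON-RPC 2.0 request shape, as used by MCP.
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

// Hypothetical helper: returns a builder that assigns increasing ids.
function createRequestFactory() {
  let nextId = 1;
  return (method: string, params?: Record<string, unknown>): JsonRpcRequest => {
    const request: JsonRpcRequest = { jsonrpc: "2.0", id: nextId++, method };
    if (params !== undefined) request.params = params;
    return request;
  };
}

const makeRequest = createRequestFactory();

// Wire-level method names for the three calls discussed above.
const listTools = makeRequest("tools/list");
const listResources = makeRequest("resources/list");
// "echo" and its arguments are placeholders for a tool your server exposes.
const callTool = makeRequest("tools/call", {
  name: "echo",
  arguments: { text: "hello" },
});

// On the stdio transport each message is one line of JSON without embedded newlines.
const wireLines = [listTools, listResources, callTool].map((r) => JSON.stringify(r));
```

Comparing these shapes with what the Inspector shows in its request history is a quick way to spot a server that answers with the wrong id or an unexpected result structure.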

Transport mechanisms: stdio vs. streamable HTTP

MCP supports two primary transport patterns, each presenting unique debugging challenges.

Stdio: the silent failure of stdout pollution

In local deployments, stdio (standard input/output) is the default. The most frequent failure point is stdout pollution. Because the server writes its JSON-RPC messages to stdout, any auxiliary output on that stream (e.g., console.log or print) corrupts the message stream: the client cannot parse the stray line as JSON-RPC and typically drops the connection.

- Expert Practice: Redirect all server-side telemetry and debugging information to stderr. High-maturity servers should implement structured logging specifically for stderr to facilitate post-mortem analysis without breaking the protocol.
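A minimal sketch of both halves of this practice follows: a structured logger that only writes to stderr, and a check you could run over captured stdout in a test. Both function names are hypothetical, and the pollution check is deliberately simplified (it treats anything that is not a single JSON-RPC 2.0 object as pollution).

```typescript
type LogLevel = "debug" | "info" | "error";

// Structured log line; console.error writes to stderr in Node.js,
// so it never mixes with protocol traffic on stdout.
function logToStderr(level: LogLevel, message: string, fields: Record<string, unknown> = {}): void {
  console.error(JSON.stringify({ ts: new Date().toISOString(), level, message, ...fields }));
}

// Returns every captured stdout line that is not a JSON-RPC 2.0 message.
// Simplification: a line must be one JSON object with jsonrpc === "2.0".
function findStdoutPollution(stdoutLines: string[]): string[] {
  return stdoutLines.filter((line) => {
    if (line.trim() === "") return false; // ignore blank lines
    try {
      const parsed: unknown = JSON.parse(line);
      const isRpc =
        typeof parsed === "object" &&
        parsed !== null &&
        (parsed as { jsonrpc?: unknown }).jsonrpc === "2.0";
      return !isRpc;
    } catch {
      return true; // not JSON at all, e.g. a stray console.log
    }
  });
}
```

In an integration test you might spawn the server, collect its stdout line by line, and assert that findStdoutPollution returns an empty array.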

Streamable HTTP and SSE

For remote deployments, MCP utilizes a streamable HTTP transport. While Server-Sent Events (SSE) are frequently used to handle streaming responses from the server to the client, the protocol is fundamentally anchored in HTTP POST requests for JSON-RPC signaling.

- Handshake Validation: Remote testing requires validating the initial HTTP POST handshake. Unlike stdio, HTTP transport introduces concerns regarding CORS, timeouts, and state management between the SSE stream and the POST control channel.

- Debugging Implications: While stdio is a direct pipe between two local processes, streamable HTTP requires specialized tooling (like Postman or custom SSE listeners) to verify that the connection remains open and that the endpoint mapping for JSON-RPC calls is correctly advertised by the server.
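When writing a custom SSE listener for this kind of test, the core task is turning a text/event-stream body back into JSON-RPC messages. The sketch below is a simplified parser under stated assumptions: parseSseEvents is a hypothetical name, the whole body is already available as a string, and only the event, data, and id fields are handled.

```typescript
interface SseEvent {
  event: string; // defaults to "message" when no event field is sent
  data: string;
  id?: string;
}

// Simplified parser for a complete text/event-stream body.
function parseSseEvents(raw: string): SseEvent[] {
  const events: SseEvent[] = [];
  let eventName = "message";
  let dataLines: string[] = [];
  let lastId: string | undefined;

  for (const line of raw.split(/\r\n|\r|\n/)) {
    if (line === "") {
      // A blank line dispatches the pending event, if it has data.
      if (dataLines.length > 0) {
        const evt: SseEvent = { event: eventName, data: dataLines.join("\n") };
        if (lastId !== undefined) evt.id = lastId;
        events.push(evt);
      }
      eventName = "message";
      dataLines = [];
      continue;
    }
    if (line.startsWith(":")) continue; // comment line, often used as a heartbeat
    const colon = line.indexOf(":");
    const field = colon === -1 ? line : line.slice(0, colon);
    let value = colon === -1 ? "" : line.slice(colon + 1);
    if (value.startsWith(" ")) value = value.slice(1); // strip one leading space
    if (field === "event") eventName = value;
    else if (field === "data") dataLines.push(value);
    else if (field === "id") lastId = value;
  }
  return events;
}
```

Each event's data field can then be passed to JSON.parse and checked like any other JSON-RPC response, while comment-only lines confirm that heartbeats are arriving.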

Claude Desktop and Cowork: precision in configuration and logs

Claude Desktop (or Cowork) remains the flagship reference client, but its implementation details are client-specific and often undocumented.

Environment-specific configurations

Interoperability testing must account for OS-specific paths for claude_desktop_config.json:

- macOS: ~/Library/Application Support/Claude/claude_desktop_config.json

- Windows: %APPDATA%\Claude\claude_desktop_config.json
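For test scripts that need to write this file on either platform, a small helper can resolve the path and build the entry. This is a sketch under assumptions: both function names are hypothetical, the server name my-server is a placeholder, and the environment values are passed in explicitly rather than read from the process so the logic stays easy to test.

```typescript
type Platform = "darwin" | "win32";

interface ServerEntry {
  command: string;
  args: string[];
}

// Resolves the config file location from the caller-supplied environment.
function claudeConfigPath(platform: Platform, env: { HOME?: string; APPDATA?: string }): string {
  if (platform === "darwin") {
    const home = env.HOME ?? "~";
    return `${home}/Library/Application Support/Claude/claude_desktop_config.json`;
  }
  const appData = env.APPDATA ?? "%APPDATA%";
  return `${appData}\\Claude\\claude_desktop_config.json`;
}

// Builds the object to serialize into the config file: server entries
// live under the top-level "mcpServers" key.
function buildDesktopConfig(name: string, entry: ServerEntry): { mcpServers: Record<string, ServerEntry> } {
  return { mcpServers: { [name]: entry } };
}

// "my-server" is a placeholder name; the command mirrors the Inspector example.
const exampleConfig = buildDesktopConfig("my-server", {
  command: "node",
  args: ["build/index.js"],
});
const exampleJson = JSON.stringify(exampleConfig, null, 2);
```

Relative paths in args depend on the working directory the client uses when it launches the server, so an absolute path is usually the safer choice in a real configuration.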

Log diagnostics

When a server fails to initialize in Claude, the Inspector may show success while the client shows a generic connection error. Developers must analyze the raw logs:

- macOS: ~/Library/Logs/Claude/

- Windows: the logs directory within %APPDATA%\Claude\

Note on Resources: Even if capabilities.resources is correctly declared, some versions of Claude Desktop require manual attachment or explicit referencing in the system prompt to surface them in the UI, highlighting the gap between protocol compliance and UI-specific behavior.

MCP server compliance checklist (interop ready)

To ensure a server is ready for production-grade integration with vLLM, Ollama, or Open WebUI, it must pass this architectural audit:

- Initialize Handshake: Compliant with version negotiation.

- JSON-RPC IDs: Correct handling of request/response IDs. Each response echoes the id of the request it answers, and notifications (which carry no id) receive no response.

- Tool Enumeration: list_tools returns a schema-valid response.

- Resource Declaration: list_resources implemented if the capability is declared.

- Schema Validation: Strict enforcement of input types using Zod or equivalent.

- Zero Stdout Pollution: All non-protocol data sent to stderr.

- Deterministic Errors: Meaningful JSON-RPC error codes (e.g., -32602 for invalid params).

- Timeout Management: Server handles hanging processes without blocking the main loop.

- HTTP Status Codes: Correct 200/202/400 codes for remote transport.

- Streaming Integrity: SSE heartbeat and event continuity tested.

- Unambiguous Descriptions: Tool and argument descriptions optimized for LLM parsing.

- Authentication: Enforced for all non-local (HTTP) endpoints.

- Capability Check: Server does not claim features it cannot fulfill.
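Several of these items (ID handling, schema validation, deterministic errors) meet in the handler for a tool call. The sketch below hand-rolls the validation to stay dependency-free; in practice a library such as Zod would replace validateForecastArgs. The forecast tool, its city and days arguments, and all function names are hypothetical, and the sketch assumes the server reports invalid arguments as -32602, as the checklist suggests.

```typescript
type JsonRpcId = string | number;

// Standard JSON-RPC 2.0 error codes.
const ErrorCode = {
  ParseError: -32700,
  InvalidRequest: -32600,
  MethodNotFound: -32601,
  InvalidParams: -32602,
  InternalError: -32603,
} as const;

type JsonRpcResponse =
  | { jsonrpc: "2.0"; id: JsonRpcId; result: { content: { type: "text"; text: string }[] } }
  | { jsonrpc: "2.0"; id: JsonRpcId; error: { code: number; message: string; data?: unknown } };

interface ForecastArgs {
  city: string;
  days: number;
}

type Validation = { ok: true; value: ForecastArgs } | { ok: false; problem: string };

// Strict input check for the hypothetical "forecast" tool.
function validateForecastArgs(args: unknown): Validation {
  if (typeof args !== "object" || args === null || Array.isArray(args)) {
    return { ok: false, problem: "arguments must be an object" };
  }
  const record = args as Record<string, unknown>;
  const city = record["city"];
  if (typeof city !== "string" || city.length === 0) {
    return { ok: false, problem: "city must be a non-empty string" };
  }
  const days = record["days"] ?? 1; // optional argument, defaults to 1
  if (typeof days !== "number" || !Number.isInteger(days) || days < 1) {
    return { ok: false, problem: "days must be a positive integer" };
  }
  return { ok: true, value: { city, days } };
}

// The response always echoes the request id, whether it succeeds or fails.
function handleForecastCall(id: JsonRpcId, args: unknown): JsonRpcResponse {
  const checked = validateForecastArgs(args);
  if (!checked.ok) {
    return {
      jsonrpc: "2.0",
      id,
      error: { code: ErrorCode.InvalidParams, message: "Invalid params", data: { problem: checked.problem } },
    };
  }
  const { city, days } = checked.value;
  return {
    jsonrpc: "2.0",
    id,
    result: { content: [{ type: "text", text: `Forecast for ${city}, ${days} day(s)` }] },
  };
}
```

Feeding the handler deliberately malformed arguments (wrong types, missing fields, arrays instead of objects) gives a repeatable way to confirm that the same bad input always produces the same error code.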

Security considerations for exposed MCP servers

Exposing an MCP server, particularly over HTTP, introduces a significant attack surface. An MCP server is essentially a remote execution engine.

- Authentication: Never expose an unauthenticated MCP endpoint. Implement robust Bearer token or mTLS validation.

- Tool Execution Abuse: LLMs can be manipulated into executing tools with malicious parameters (Prompt Injection).

- Isolation: Implement sandboxing (e.g., Docker or gVisor) and network isolation to prevent a compromised MCP server from pivoting into the internal network.
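As an illustration of the authentication point, the following sketch checks a Bearer token before any JSON-RPC payload is processed. It is a minimal example under assumptions, not a complete security layer: the function names are hypothetical, the expected token is passed in directly, and the hand-written comparison stands in for a vetted primitive such as Node's crypto.timingSafeEqual.

```typescript
// Extracts the token from an Authorization header value, or null if malformed.
function extractBearerToken(header: string | undefined): string | null {
  if (header === undefined) return null;
  const match = /^Bearer ([A-Za-z0-9\-._~+/]+=*)$/i.exec(header);
  return match?.[1] ?? null;
}

// Compares two strings without returning early on the first mismatch.
// Illustrative only; prefer a library primitive in production code.
function constantTimeEqual(a: string, b: string): boolean {
  let diff = a.length ^ b.length;
  const length = Math.max(a.length, b.length);
  for (let i = 0; i < length; i++) {
    // charCodeAt returns NaN past the end of a string; treat that as 0.
    diff |= (a.charCodeAt(i) || 0) ^ (b.charCodeAt(i) || 0);
  }
  return diff === 0;
}

// Returns the HTTP status the endpoint should answer with before
// touching the request body: 401 on any authentication failure.
function authorizeRequest(header: string | undefined, expectedToken: string): 200 | 401 {
  const token = extractBearerToken(header);
  if (token === null || !constantTimeEqual(token, expectedToken)) return 401;
  return 200;
}
```

A test suite for a remote server can reuse the same cases (missing header, wrong scheme, wrong token) against the live endpoint to confirm that unauthenticated requests never reach a tool handler.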

For a deeper dive into mitigating these risks, refer to our technical guide on securing the MCP ecosystem.

Inspector vs. real-world LLM interoperability

The official Inspector is a “syntax” validator; it ensures your server speaks the language. However, it does not validate the “semantics” of LLM interaction. Real-world interoperability requires stress testing with the actual model to ensure that tool descriptions are sufficiently precise to avoid “hallucinated” argument shapes. A server may be 100% protocol-compliant yet fail in production because its metadata leads the LLM to provide incorrect data types.

The maturation of MCP will likely lead to automated compliance suites that simulate edge-case JSON-RPC traffic. As we move toward more autonomous agentic workflows, the distinction between “it runs” and “it is compliant” will define the reliability of the AI-augmented enterprise.
