Best GPU for Local AI in 2026: Deep Dive on VRAM, Energy, and Real Benchmarks

Running large language models on your own hardware has reached a critical inflection point in 2025-2026. This isn’t just a question of technical curiosity or cloud cost-cutting—it’s become a compliance, sovereignty, and data security imperative.

For enterprises handling sensitive data (healthcare, financial services, intellectual property), sending every inference request to foreign cloud servers creates regulatory risk (GDPR, data residency laws) and strategic vulnerability. API costs explode. Proprietary models remain locked behind vendor agreements. Going local means reclaiming control.

For developers, researchers, and creators, it’s a matter of zero network latency, absolute data privacy, freedom to modify and fine-tune, and predictable costs. Modern LLMs (Llama 3, Qwen, DeepSeek-R1) demand careful allocation of scarce resources, above all VRAM. While CPUs can technically run inference at 2-3 tokens/sec, only GPUs deliver the massive parallelism and memory bandwidth needed for realistic production workloads (50-150 tokens/sec).

But choosing the right GPU is non-trivial. This guide cuts through the hype and benchmarks to show you exactly what you need for your use case—and why VRAM beats compute power 90% of the time.

Last updated: March 2026 (RTX 50-series, ROCm 7.0, AMD R9700)

Why Local AI Needs a Different GPU Strategy

Local LLMs vs Cloud: Latency, Privacy, Control

Running models locally gives you what cloud can’t: immediate inference (no network roundtrip), data confidentiality (nothing leaves your machine), and freedom to modify (fine-tune, quantize, optimize). But it also means you’re alone with your hardware constraints.

The cloud wins on scale, support, and multi-user concurrency. Local wins on sovereignty. For developers, researchers, and small teams, local is often the better trade-off—if you choose the right silicon.

CPU vs GPU for LLM Inference (and why GPU wins)

A CPU can technically run LLM inference, but at 2-3 tokens/sec. A GPU can do 50-150 tokens/sec on the same model. The difference comes from massive parallelism and memory bandwidth: GPUs are built for matrix math and pair thousands of cores with very fast memory, while CPUs aren’t. For any serious local LLM work, a GPU is non-negotiable.
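A rough way to see why memory bandwidth dominates: during single-user generation, each new token requires streaming (roughly) all model weights from memory once, so throughput is capped near memory bandwidth divided by model size. The sketch below computes that back-of-the-envelope ceiling. It assumes ~80 GB/s for a dual-channel DDR5 desktop CPU (an illustrative figure, not a measurement) and uses the RTX 5090's 1,792 GB/s and a ~20 GB 4-bit 32B model from this guide; the function name is hypothetical.

```python
def decode_ceiling_tok_s(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on single-user decode speed for a memory-bound model.

    Assumes every generated token reads all weights from memory once and
    ignores KV-cache reads, compute limits, and kernel overheads.
    """
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# 20 GB ~ a 32B model at 4-bit; the CPU bandwidth is an assumed typical value.
MODEL_GB = 20.0
for name, bw in [("Desktop CPU (DDR5, assumed)", 80.0), ("RTX 5090", 1792.0)]:
    print(f"{name}: <= {decode_ceiling_tok_s(bw, MODEL_GB):.1f} tok/s")
```

Treat the result strictly as an upper bound: real runtimes never reach peak bandwidth, which is why measured 32B Q4 speeds on the RTX 5090 land well below the ceiling.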

Key Hardware Fundamentals: VRAM, Compute, Power

VRAM-First Philosophy: Why Compute is Secondary

This is the critical insight most guides miss: VRAM determines what you can run. Compute determines how fast. For local AI, VRAM is the bottleneck 90% of the time.

An RTX 5090 with 32 GB of GDDR7 will run a 32B quantized model smoothly. An RTX 4090 with 24 GB will struggle. The RTX 4090 actually has respectable compute (16,384 CUDA cores), but 8 GB less memory means either dropping context length or using extreme quantization.

Realistic VRAM progression (assuming 4-bit quantization):

- 12 GB: Entry-level local AI — 7B models with short context, basic chat

- 16 GB: Serious work — 7B-13B models, RAG, lightweight agents

- 24 GB: 32B entry point, i.e. 32B quantized at short context, or 13B with longer context (2K-4K tokens)

- 32 GB: 32B optimal — 32B with long context, LoRA fine-tuning

- 48 GB+: 70B and beyond — 70B comfortable, or 32B with intense fine-tuning

Critical note: There is no hard threshold; it’s a gradient. An RTX 5090 excels at 32B. At 70B and beyond, a dual-GPU setup becomes the logical choice, though it remains optional for short-context work with aggressive quantization.

⚠️ Context length VRAM impact: All VRAM figures assume ~2K token context. At 8K+ context, double your VRAM estimates due to KV-cache growth.

Single-User Throughput: RTX 5090 vs H100 (Surprising Results)

Single-user scenario (1 request, same model):

- RTX 5090: ~45-50 tokens/sec on DeepSeek-R1 32B Q4

- H100: ~45-50 tokens/sec on DeepSeek-R1 32B Q4 ✓

- Latency parity (both ~20ms first token)

But the H100’s real advantage: its 94 GB of VRAM fits 70B models at 8-bit precision (full FP16 would need ~140 GB), boosting quality over 4-bit quantization. The RTX 5090 (32 GB) maxes out at 32B quantized.

Multi-user scenario (10+ concurrent):

- RTX 5090: ~45-50 tokens/sec total

- H100: 200+ tokens/sec (scheduling multiple requests) ✓

- Winner: H100

Takeaway: For single-user quality vs cost, RTX 5090 wins. For enterprise multi-user or high-precision (8-bit) 70B, H100 wins.

KV-Cache and Context Length: The Hidden VRAM Killer

Why does a 20 GB model use 30 GB on a 32 GB card? KV-Cache.

When you run a model with 4K token context, the GPU must cache the key-value tensors for attention—and this grows linearly with context length. A 32B model at 512 tokens context uses ~22-23 GB VRAM. At 4K tokens context, it can spike to 29-31 GB.

The math:

- Model weights: 20 GB (62.5%)

- KV-Cache (2K context): 7-8 GB (22%)

- Runtime buffers: 2-3 GB (8%)

- Total: 29-31 GB (91-97%)

This is why RTX 5090’s 32 GB feels tight for 32B models with long context, and why 24 GB (RTX 4090) becomes painful at 32B.
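The cache size follows from the model's shape: for every token, each layer stores one key and one value vector per KV head, so bytes = 2 × layers × KV heads × head dimension × bytes per element × tokens. A minimal sketch of that formula; the config (64 layers, 8 KV heads, head dimension 128, FP16 cache) is an assumed example of a 32B-class model with grouped-query attention, and `batch` is a hypothetical parameter standing in for extra context slots a runtime may reserve.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_elem: int = 2,
                   batch: int = 1) -> int:
    """KV-cache size: one K and one V vector per layer, per KV head, per token."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem
    return per_token * context_tokens * batch


GIB = 1024 ** 3
# Assumed 32B-class config with grouped-query attention, FP16 (2-byte) cache.
for ctx in (512, 2048, 4096, 8192, 32768):
    size = kv_cache_bytes(64, 8, 128, ctx)
    print(f"{ctx:>6} tokens: {size / GIB:.2f} GiB")
```

The cache is linear in context, so going from 2K to 8K tokens quadruples it. Models without grouped-query attention (one KV head per attention head) need proportionally more; if your measured usage is far above this estimate, the difference likely comes from runtime buffers and preallocated context slots rather than the cache itself.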

Power Supply, Connectors, and Cooling for 24/7 Inference

Local AI is sustained compute, not gaming bursts. Your hardware runs hot for hours.

- Power draw: RTX 5090 peaks at 575W. A 1,000W PSU is the minimum; 1,250W recommended for stability and headroom.

- 12V-2×6 connector: The RTX 4090’s original 12VHPWR connector saw rare melting incidents in 2023-2024; the RTX 50-series uses the revised 12V-2×6 design. Hardware Busters confirmed safe operation at 575W with correct insertion. Practical rule: make sure the plug fully clicks in, use approved cables, and avoid tight bends. Real risk today: <0.1% if installed correctly.

- Cooling: Founders Edition is compact but hot and loud under sustained load. For local AI, custom coolers (ASUS ROG Astral, MSI SUPRIM Liquid, Gigabyte AORUS Xtreme) with triple fans or AIO liquid cooling maintain stable clocks without throttling.
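The PSU guidance above is sustained-load arithmetic plus headroom. A minimal sketch: the 575W GPU figure comes from this guide, while the 150W CPU draw, 75W rest-of-system draw, and the headroom factors are assumptions to adjust for your own build; `psu_watts` is a hypothetical helper.

```python
import math


def psu_watts(gpu_w: float, cpu_w: float, rest_w: float, headroom: float) -> int:
    """Sustained system draw times a headroom factor, rounded up to the next 50 W."""
    load = gpu_w + cpu_w + rest_w
    return int(math.ceil(load * headroom / 50) * 50)


# 575 W = RTX 5090 figure from this guide; CPU and rest-of-system draws are assumed.
print(psu_watts(575, 150, 75, 1.25))  # 25% headroom
print(psu_watts(575, 150, 75, 1.5))   # 50% headroom to absorb transient spikes
```

With these assumptions, 25% headroom lands on the 1,000W minimum; more headroom or a hungrier CPU pushes you toward the 1,250W recommendation.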

Best GPUs for Local AI in 2026 (By Tier)

Ultra-Performance: Blackwell Dominance

RTX 5090: The New Gold Standard

The RTX 5090 is the benchmark for local AI in 2025-2026. Its 32 GB of GDDR7, 21,760 CUDA cores, and 1,792 GB/s of bandwidth enable native execution of 32B models (Qwen 2.5, DeepSeek-R1) without aggressive quantization artifacts.

Price (March 2026): ~$1,999 MSRP / €1,999 (often $2,500-3,000 / €2,300-2,800 in stock-constrained markets)

Official specs: NVIDIA GeForce RTX 5090

Custom vs Founders Edition: Ignore the compact FE. For 24/7 LLM inference, choose custom coolers (ASUS, MSI, Gigabyte) with triple fans or AIO. FE will throttle and sound like a jet engine.

RTX PRO 6000 Blackwell: Enterprise Research

96 GB VRAM for labs and enterprises running massive models or fine-tuning. Price ($8,000+ / €7,500+) restricts it to specialist use. Skip unless you’re training 70B+.

Mid-Range and Used Market

RTX 4090: Still Solid, With Warnings

24 GB VRAM handles 7B-13B comfortably and image gen via Stable Diffusion. But 32B models require extreme optimization.

Used market (March 2026): €400-550 (down from €1,600 new in 2024)

Used GPU caution: Some 4090s have seen mining or other 24/7 compute use. Always verify:

- ✓ Proof of purchase / invoice

- ✓ Stress test while monitoring in GPU-Z (clock stability, memory temps)

- ✓ 14-day return guarantee in case of failure

- ✓ MemtestG80 immediately after purchase

Red flags (don’t buy):

- ✗ No history / receipt

- ✗ Memory temps >85°C under load

- ✗ Failure within 30 days of purchase

- ✗ “Never used” (hidden mining suspicion)

Verdict: A used 4090 is good value if verified. GPU mining largely collapsed after Ethereum moved to proof-of-stake in September 2022, shortly before the RTX 4090 launched, so ex-mining 4090s are less common than media hype suggests. But due diligence is mandatory. Saving €600-800 vs new hardware is worth the verification effort.

RTX 5080: The Overlooked Mid-Tier

With 16 GB of GDDR7, the RTX 5080 is not an “entry-level discovery” card. It’s a solid professional choice for real work.

Price (March 2026): ~$799 MSRP / €700-800

Real use cases:

- ✓ Local chat + RAG (7B-13B Mistral, Phi, Llama 3)

- ✓ Autonomous agents (crewAI, AutoGen)

- ✓ Moderate ComfyUI (1024×1024 generation, Stable Diffusion 3)

- ✓ Lightweight LoRA fine-tuning (7B adapter)

- ✓ Light multimodal (video 1080p + text)

Real limits:

- ✗ 32B + long context = tight (15-16 GB used)

- ✗ Two heavyweight models simultaneously = OOM risk

- ✗ Flux.2-dev full resolution = uncomfortable

Benchmark: Llama 3.1 8B achieves 120+ tok/s. Qwen 14B: 70-80 tok/s. DeepSeek-R1 32B Q4: 35-40 tok/s (saturation).

Verdict: At €700, RTX 5080 rivals 5090 for 90% of creative/dev work. Only extreme 32B ambitions or multi-workload scenarios justify the €1,200 jump to 5090.

RTX 5070 Ti / RTX 5070: Budget Entry-Point

RTX 5070 Ti (16 GB, €550) = solid entry. RTX 5070 (12 GB, €350) = usable but constrained. Both inherit Blackwell’s inference gains but hit limits on ambitious projects or multi-GPU workflows.

AMD Alternative: Radeon AI PRO R9700 (July 2025)

After years of ROCm stability issues, AMD released the Radeon AI PRO R9700 (RDNA 4)—a proper workstation GPU, not a gaming card repurposed.

Specs:

- 32 GB GDDR6 (vs GDDR7 on RTX 5090)

- 64 Compute Units + 128 AI Accelerators

- ROCm 6.4+ (Phoronix confirmed stable, Oct 2025)

- Price: ~$950 USD / €850-1,000 (vs $1,999 / €1,999 for RTX 5090)

- TDP only 300W (vs 575W RTX 5090) = €150-200 electricity savings over 2 years

Official specs: AMD Radeon AI PRO R9700

Performance (DeepSeek-R1 32B Q6): ~40-45 tokens/sec single-user (competitive with RTX 5090)

Advantages:

- ✓ Same 32 GB VRAM

- ✓ Roughly 50% cheaper than RTX 5090

- ✓ ROCm officially mature (Linux professional support)

- ✓ Blower cooling (multi-GPU dense scaling)

- ✓ Dramatic power efficiency (275W lower TDP)

Disadvantages:

- ✗ No compact dual-slot FE (less suited for gaming PCs)

- ✗ GDDR6 bandwidth: ~640 GB/s vs 1,792 GB/s on the RTX 5090’s GDDR7 (about 64% lower)

- ✗ PyTorch/ONNX/TF on ROCm = 95-98% compatible (vs 100% CUDA)

- ✗ Ollama support still unstable (GPU discovery timeouts reported Nov 2025)

Note: R9700 is workstation-class (not consumer). Limited availability outside US.
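How large the R9700’s electricity savings are depends mostly on duty cycle. A minimal sketch, assuming both cards draw their full TDP (300W vs 575W) whenever they are busy, which overstates typical inference draw, and using the €0.15/kWh rate from this guide’s TCO table; `energy_cost_eur` is a hypothetical helper.

```python
def energy_cost_eur(watts: float, hours_per_day: float, days: int,
                    eur_per_kwh: float) -> float:
    """Electricity cost of a constant load: kW x hours x price per kWh."""
    return watts / 1000 * hours_per_day * days * eur_per_kwh


DELTA_W = 575 - 300  # TDP gap between RTX 5090 and R9700 (specs above)
PRICE = 0.15         # EUR/kWh, as in the TCO table
DAYS = 2 * 365       # two years

for hours in (4, 8, 24):
    saving = energy_cost_eur(DELTA_W, hours, DAYS, PRICE)
    print(f"{hours:>2} h/day of full load: ~€{saving:.0f} saved over 2 years")
```

Under these assumptions, the €150-200 figure corresponds to roughly 5-7 hours of full load per day; a card busy 24/7 would save about €720 over two years.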

Verdict 2026: For budget-conscious teams or Linux-first shops, R9700 is compelling. For solo developers or beginners, NVIDIA’s ecosystem is safer. For <€1,500 budget: R9700 is the only 32 GB choice.

Budget Segment: Ampere Legacy

- RTX 3060 12 GB: Reddit darling for 7B-13B quantized models. Best VRAM/price ratio in used market.

Price (used, Mar 2026): €150-200

Better alternatives (+€50):

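- RTX 3070 8 GB used (€200-250): 40% faster on 13B than the 3060, with a wider 256-bit memory bus (vs 192-bit), but 4 GB less VRAM, which limits 13B to tight quantization and short context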

- RTX 4060 8 GB new (€250-300) — Ada architecture, better efficiency

Realistic 2-year TCO (€0.15/kWh, French electricity):

| GPU | Purchase | Electricity/year | 2-yr TCO | Cost per inference |
|---|---|---|---|---|
| RTX 3060 (used) | €180 | €120 | €420 | Baseline |
| RTX 3070 (used) | €220 | €140 | €500 | ~15% lower (assuming 40% perf gain) |
| RTX 4060 (new) | €270 | €95 | €460 | Best ratio |

Recommendation: RTX 4060 new is the smartest budget 2026 pick (efficiency + support). RTX 3060 for sub-€150 budgets only.
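The cost-per-inference column comes from dividing 2-year TCO by relative throughput. A minimal sketch using the table’s figures and its 40% performance-gain assumption for the RTX 3070; the RTX 4060 is left out because this guide gives no relative-performance figure for it, and `GpuOption` is a hypothetical helper type.

```python
from dataclasses import dataclass


@dataclass
class GpuOption:
    name: str
    purchase_eur: float
    electricity_eur_per_year: float
    relative_perf: float  # throughput relative to the baseline card (1.0 = baseline)

    def tco(self, years: int = 2) -> float:
        """Purchase price plus electricity over the ownership period."""
        return self.purchase_eur + years * self.electricity_eur_per_year

    def cost_per_perf(self, years: int = 2) -> float:
        """TCO divided by relative throughput: a proxy for cost per inference."""
        return self.tco(years) / self.relative_perf


options = [
    GpuOption("RTX 3060 (used)", 180, 120, 1.0),
    GpuOption("RTX 3070 (used)", 220, 140, 1.4),  # 40% gain: the table's assumption
]
baseline = options[0].cost_per_perf()
for opt in options:
    delta = (opt.cost_per_perf() / baseline - 1) * 100
    print(f"{opt.name}: TCO €{opt.tco():.0f}, cost per inference {delta:+.0f}% vs baseline")
```

A higher TCO can still mean cheaper inferences when the throughput gain outpaces the extra cost, which is what drives the 3070’s row.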

Real Benchmarks: DeepSeek-R1 32B and Model Scaling

Performance by Model (Ollama RTX 5090)

Inference speed depends drastically on model size, quantization, and software. This table shows realistic performance on RTX 5090 with standard 4-bit quantization:

| Model | Parameters | Size (GB) | VRAM Used (% of 32 GB) | Tokens/sec |
|---|---|---|---|---|
| Llama 3.1 | 8B | 4.9 | 82% | 149.95 |
| Qwen 2.5 | 14B | 9.0 | 66.5% | 89.93 |
| DeepSeek-R1 | 14B | 9.0 | 66.3% | 89.13 |
| Gemma 3 | 12B | 8.1 | 32.8% | 70.37 |
| QwQ | 32B | 20 | 94% | 57.17 |
| Gemma 3 | 27B | 17 | 82% | 47.33 |
| DeepSeek-R1 | 32B | 20 | 95% | 45.51 |
| Qwen 2.5 | 32B | 20 | 95% | 45.07 |

Source: Ollama 0.50+, standard 4-bit quantization (DeepSeek-R1 32B at Q4_K_M), CUDA 12.4, 512-token context, single request (no concurrency). Localhost benchmark, not multi-user.
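To reproduce this kind of single-request measurement on your own card: Ollama’s `/api/generate` endpoint returns `eval_count` (generated tokens) and `eval_duration` (nanoseconds) in its final response. A minimal standard-library sketch, assuming Ollama is running on its default port 11434; `generate` and `tokens_per_second` are hypothetical helper names. It measures decode speed only, excluding prompt processing and model load time.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def tokens_per_second(resp: dict) -> float:
    """Decode speed from an Ollama /api/generate response.

    eval_count is the number of generated tokens; eval_duration is in nanoseconds.
    """
    return resp["eval_count"] / (resp["eval_duration"] / 1e9)


def generate(model: str, prompt: str, num_ctx: int = 512) -> dict:
    """Send one non-streaming request (single user, no batching)."""
    body = json.dumps({
        "model": model,
        "prompt": prompt,
        "stream": False,
        "options": {"num_ctx": num_ctx},  # match the 512-token context above
    }).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as r:
        return json.load(r)
```

For example, after `ollama pull deepseek-r1:32b`, call `tokens_per_second(generate("deepseek-r1:32b", "Explain KV-cache in one paragraph."))`, then repeat a few times and average, since single runs vary.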

Looking for model comparisons? See Which Qwen 3 Model Should You Choose? for detailed 32B-4B breakdown by GPU and VRAM.

📌 Why VRAM Usage Exceeds Model Weights

A QwQ 32B model weighs ~20 GB, but the table shows 94% of 32 GB = ~30 GB used. Where’s the 10 GB gap?

- KV-Cache (+25-30%): Attention key-value storage (grows with context)

  - 512-token context ≈ +3 GB

  - 2K-token context ≈ +10 GB

  - 4K-token context ≈ +15 GB

- Runtime buffers (+5-10%): Ollama/vLLM allocations, activation scratch space, temp tensors

- OS overallocation (+1-2%): Linux/CUDA safety margins

Lesson: RTX 5090 is comfortable for 32B with 2K-4K context. Beyond 8K context, saturation happens fast.
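To see where your own VRAM goes, compare the card’s used memory (with the model loaded) against the model’s file size. A sketch wrapping `nvidia-smi`’s CSV query mode (`--query-gpu=name,memory.used,memory.total` with `--format=csv,noheader,nounits`, which reports MiB); it requires an NVIDIA driver, and `parse_gpu_memory` / `query_gpu_memory` are hypothetical helper names.

```python
import subprocess


def parse_gpu_memory(csv_text: str) -> list[tuple[str, int, int]]:
    """Parse 'name, memory.used, memory.total' rows (MiB, no header, no units)."""
    rows = []
    for line in csv_text.strip().splitlines():
        name, used, total = (field.strip() for field in line.split(","))
        rows.append((name, int(used), int(total)))
    return rows


def query_gpu_memory() -> list[tuple[str, int, int]]:
    """Ask nvidia-smi for per-GPU memory usage."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.used,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_memory(out)
```

Usage: `for name, used, total in query_gpu_memory(): print(f"{name}: {used}/{total} MiB ({used / total:.0%})")`. Run it once with the model idle and again mid-generation at your target context length to separate weights from KV-cache and buffers.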

Software Stack: How to Get the Most Out of Your GPU

Quantization (GGUF, GPTQ, AWQ) and Why VRAM Matters More Than TFLOPS

Quantization is the software lever that makes local AI accessible without a server cluster. Reducing model precision (FP16 → INT4) slashes VRAM and latency while preserving conversational coherence.

- GGUF: Flexible, CPU/GPU optimized, widely supported. Explore llama.cpp for tools.

- GPTQ / AWQ: Performance-focused, excellent for complex models (Mistral, Llama). GPTQ is fastest; AWQ balances quality/speed.

- Concrete impact: Mistral 7B needs ~14 GB in FP16 native, but runs on 6-8 GB in 4-bit quantized.
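The weight footprint is simple arithmetic: parameters × bits per weight ÷ 8. A minimal sketch (the function name is hypothetical, and it counts weights only, in decimal GB):

```python
def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage: parameters x bits / 8, in GB (1e9 bytes)."""
    return params_billion * bits_per_weight / 8


for label, bits in [("FP16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"7B @ {label}: ~{weight_gb(7, bits):.1f} GB of weights")
```

Real 4-bit formats such as GGUF Q4_K_M store somewhat more than 4 bits per weight on average (quantization scales, some tensors kept at higher precision), and the runtime adds KV-cache and buffers on top, which is why practical footprints exceed the raw figure. The same arithmetic gives ~140 GB for a 70B model at FP16.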

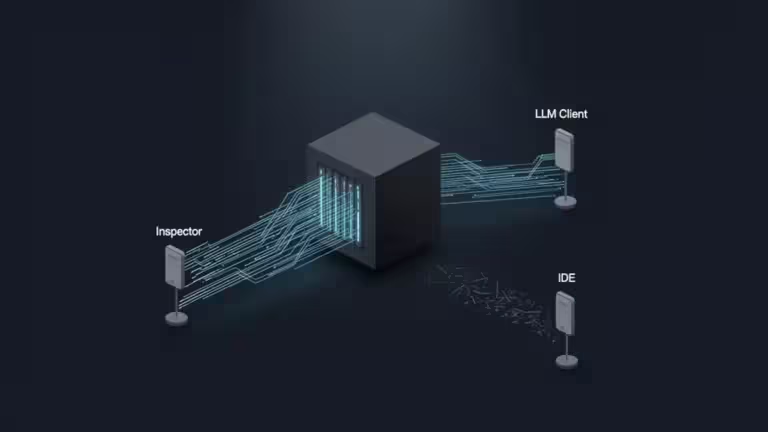

Best Tools for Local LLMs (Ollama, vLLM, LM Studio, Text-Gen-WebUI)

Your choice of frontend directly impacts how you manage GPU resources.

- Ollama: Plug-and-play simplicity, intelligent GPU/CPU fallback. Visit Ollama.com for official setup.

- Text-generation-webui (oobabooga): Most complete for experts. Fine-grained control over temperature, context length, inference params.

- LocalAI: Privacy-first alternative emulating OpenAI API for transparent integration.

- vLLM: High-performance framework for fast inference and complex pipelines.

For practical comparison, see Ollama vs vLLM: Which Solution for Local LLMs?.

For advanced setup with MCP integration, see Integrating MCP with Ollama, vLLM, and Open WebUI.

For step-by-step installation, see Install vLLM with Docker Compose on Linux (WSL2 compatible).

Recommended PC Build: CPU, RAM, Storage, OS

A powerful GPU in a bottlenecked system suffers frustrating throughput loss.

- CPU: Modern 6-8 core (Ryzen 5 7600, i5-13600K) minimum. Handles background tasks and model loading.

- RAM: 32 GB is sufficient for 7B-13B models. 64 GB is critical for larger models or hybrid CPU/GPU offloading, to avoid out-of-memory crashes.

- Storage: Fast NVMe SSD mandatory. Loading a 10+ GB model from SATA SSD or HDD severely degrades UX.

- OS: Windows + WSL 2 is now fast (near-native). Native Linux (Ubuntu, Arch) remains recommended for stability and community support.

Windows 11 + WSL 2 ≠ handicap: In 2025-2026, WSL 2 delivers near-native AI performance with Ollama and PyTorch.

- I/O latency: negligible (5-10% slower than native)

- GPU throughput: near-identical (WSL 2 exposes the GPU to the Linux VM through GPU paravirtualization)

- CUDA setup: as simple as Ubuntu

Example: Llama 3 8B on WSL 2 = 120 tok/sec (vs 122 tok/sec Linux native)

When Linux native still matters:

- ✗ Extreme SSD I/O (mining operations need raw I/O)

- ✗ Exotic cooling scenarios

- ✗ Production servers (long-term stability)

Windows users: Don’t rule out WSL 2. It’s fine for 95% of local AI. WSL 2 + Ubuntu 24.04 is the logical path.

In Conclusion

Local AI isn’t magic, but it has matured. You can run serious 32B models on an RTX 5090 (€1,999) with quality that would’ve seemed impossible 2 years ago. But don’t expect to rival cloud providers: you won’t get multi-user concurrency, auto-scaling, or enterprise SLAs.

What you do get: absolute privacy, zero network latency, no monthly bills, and freedom to modify. For solo developers, researchers, and small teams, that’s often superior to cloud.

The real challenge isn’t hardware—it’s learning to optimize: VRAM tuning, model selection, quantization strategy. If you’re not ready to tinker, stick with cloud. Otherwise, welcome to 2026: local AI actually works.

Frequently Asked Questions

Q: Which GPU is best for running local LLMs at home?

A: RTX 5090 (€1,999) if budget allows. RTX 5080 (€700) if you’re smart. RTX 3060 used (€180) if you’re on a shoestring.

Q: Is 8 GB VRAM enough for local AI?

A: For 7B quantized models, yes. Beyond that, you’ll hit context-length limits and lag. 12 GB is the practical floor.

Q: Can I run a 70B model on a single GPU?

A: Not comfortably on anything under 48 GB. RTX 5090 (32 GB) + extreme 2-bit quantization is possible but painful.

Q: Is the RTX 4090 still worth it in 2026?

A: Used, yes. €400-550 for 24 GB and 75% of 5090 speed is a solid deal if you verify the history.

Q: What budget should I plan for serious local AI in 2026?

A: Minimal: €300 (new RTX 4060). Comfortable: €700-900 (RTX 5080). Optimal: €1,500-2,000 (RTX 5090), or €850-1,000 for AMD’s 32 GB R9700.

Q: What about Apple Silicon Macs for local AI?

A: M-series Macs (M3/M4 Pro/Max) can run 7B-13B models efficiently with 16-20GB unified memory. For 32B models, you’d need a higher-memory configuration: 4-bit weights alone take ~20 GB, so plan on 36 GB or more of unified memory, since the system keeps part of it for itself. For most builders, the hardware ecosystems and software flexibility around RTX GPUs offer better value and control. If you’re fully invested in Apple, check MLX for optimized M-series inference.
