RTX 5090 Benchmark: Flux.2 Dev Performance in FP8 and GGUF

Local AI inference performance is traditionally evaluated through a binary metric: whether a model fits entirely within physical VRAM. In the contemporary local AI ecosystem, this paradigm is shifting. Factors such as software stack optimization, execution scheduling, and kernel maturity increasingly dictate actual processing throughput. Memory residency alone no longer guarantees optimal generation speeds.

The NVIDIA GeForce RTX 5090, built on the Blackwell architecture, introduces massive compute capabilities alongside 32 GB of physical VRAM. This hardware profile provides an excellent platform for testing how modern architectures handle heavy diffusion workloads under different data formats.

This technical analysis evaluates the performance of FLUX.2 Dev inside ComfyUI. We compare an FP8 mixed precision implementation against a GGUF Q5_K_M quantized version. The comparison highlights a clear divergence between raw memory footprint reduction and execution pipeline efficiency.

Please note that Sage Attention was not utilized during these tests. All benchmarks rely strictly on the native attention mechanisms implemented within the evaluated ComfyUI environment.

Benchmark methodology and environmental conditions

To isolate the variables influencing inference throughput, a standardized benchmark environment was established on a dedicated workstation. The baseline hardware infrastructure consists of an NVIDIA GeForce RTX 5090 GPU featuring 32 GB of physical VRAM, paired with 64 GB of system RAM.

The software ecosystem operates under Windows 11 using ComfyUI version 0.20.1. The underlying execution framework leverages contemporary production-grade NVIDIA drivers and a corresponding CUDA toolkit environment. PyTorch Scaled Dot-Product Attention (SDPA) serves as the primary attention mechanism. To ensure clean evaluation metrics of the model formats, advanced runtime graph alterations—such as torch.compile, xFormers, or Sage Attention—were explicitly omitted from the pipeline.

Inference parameters were kept perfectly deterministic across all evaluation cycles to maintain prompt consistency and execution parity. The benchmark tests utilize the FLUX.2-dev architecture under the following strictly controlled variables:

- Batch Size: 1

- Step Count: 20 steps

- Sampler / Scheduler: Euler / Simple

- Classifier-Free Guidance (CFG): 1.0

- Seed Handling: Fixed deterministic seed value across all iterations

- Resolution: 1024×1024, standardized resolution target

For the quantized runs, the system utilized the dedicated ComfyUI-GGUF node ecosystem to parse the model weights.

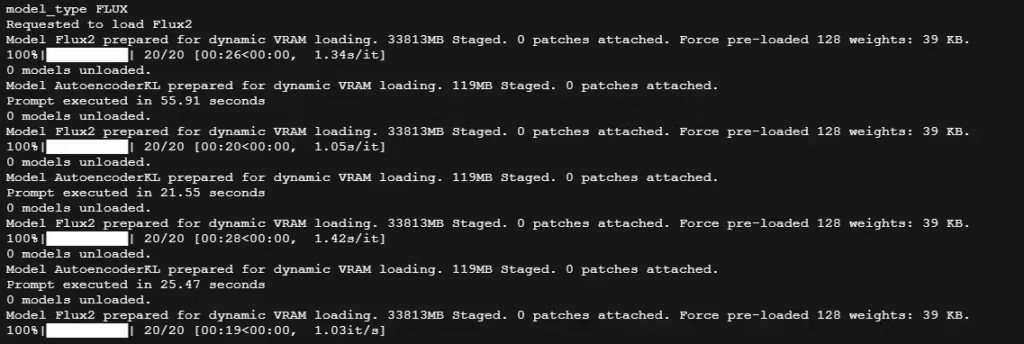

Performance metrics: FLUX.2-dev FP8 mixed precision

Raw execution tracking

The evaluation of the flux2_dev_fp8mixed.safetensors model format spanned four consecutive execution passes under a sustained workload of 20 sampling steps. This sequence captures both the initial cold-start overhead and the subsequent behavior of the warmed execution graph.

- Run 1 (Cold start): Total execution time: 55.91 seconds. Iteration speed: 1.34 s/it.

- Run 2 (Warm start): Total execution time: 21.55 seconds. Iteration speed: 1.05 s/it.

- Run 3 (Warm start): Total execution time: 25.47 seconds. Iteration speed: 1.42 s/it.

- Run 4 (Warm start): Total execution time: 20.20 seconds. Iteration speed: 1.03 it/s (approximately 0.97 s/it).

VRAM allocation analysis

During the initialization phase, the ComfyUI terminal logged the following runtime state:

Model Flux2 prepared for dynamic VRAM loading. 33813MB Staged. 0 patches attached. Force pre-loaded 128 weights: 39 KB.

This telemetry indicates that the model’s uncompressed FP8 weight footprint requires 33,813 MB of memory. Because the physical VRAM capacity of the NVIDIA GeForce RTX 5090 is capped at 32,768 MB (32 GB), the required footprint exceeds the onboard physical frame buffer by approximately 1,045 MB. ComfyUI natively manages this deficit by staging the weights across the system’s memory subsystem and orchestrating a dynamic weight-streaming workflow during the forward pass.

Compute behavior and data transport mechanics

The cold start of 55.91 seconds reflects the dual overhead of disk I/O and the initial layer mapping across system memory and VRAM. Once the tensor structures are staged, the warm-state execution times stabilize within a narrow band between 20.20 and 25.47 seconds.

This throughput, achieved despite the physical VRAM overflow, underscores the efficiency of the Blackwell architecture. The Tensor Cores within the RTX 5090 are architecturally optimized for native lower-precision math, including FP8 matrix operations. The hardware executes these instructions natively, which bypasses any intermediate conversion steps.

Crucially, the dynamic weight streaming required to bridge the 1,045 MB VRAM deficit does not cause severe system memory paging penalties. The high internal memory bandwidth of the Blackwell GPU, paired with optimized host-to-device transport pipelines, hides a significant portion of the data transit latency behind the execution of the active layers. The compute engine receives data quickly enough to maintain an iteration speed hovering near 1.05 s/it.

This behavior highlights the operational viability of running slightly oversized models in their native numerical format inside ComfyUI, a topic detailed further in the ComfyUI format guide. It also demonstrates how native sub-16-bit precisions function as a high-performance baseline prior to moving toward newer experimental paradigms like NVFP4 and structural sub-8-bit primitives.

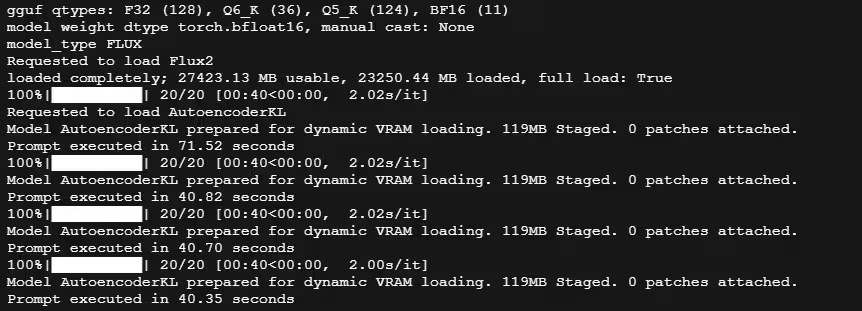

Performance metrics: FLUX.2-dev GGUF Q5_K_M

Raw execution tracking

The evaluation of the quantized Unsloth FLUX.2-dev GGUF format followed an identical protocol. The workload consisted of four consecutive execution passes generating an output at the exact same target resolution of 1024×1024 pixels over 20 sampling steps.

- Run 1 (Cold start): Total execution time: 71.52 seconds. Iteration speed: 2.02 s/it.

- Run 2 (Warm start): Total execution time: 40.82 seconds. Iteration speed: 2.02 s/it.

- Run 3 (Warm start): Total execution time: 40.70 seconds. Iteration speed: 2.02 s/it.

- Run 4 (Warm start): Total execution time: 40.35 seconds. Iteration speed: 2.00 s/it.

VRAM allocation analysis

During the initial tensor loading phase, the ComfyUI console outputted the following tracking telemetry:

loaded completely; 27423.13 MB usable, 23250.44 MB loaded, full load: True

This log confirms that the model’s total compressed memory footprint equals 23,250.44 MB. This footprint sits well below the 32,768 MB physical limit of the NVIDIA GeForce RTX 5090 frame buffer. The variable full load: True indicates that the ComfyUI-GGUF backend successfully allocated the entire model weight graph into the physical VRAM. The system retained approximately 9.5 GB of unallocated VRAM during inference.

Execution linearity

The cold start required 71.52 seconds. This duration includes disk retrieval, file parsing, and the initial layout setup within ComfyUI. Once the state shifted to a warm graph, the execution times demonstrated absolute linearity.

The generation times stabilized between 40.35 and 40.82 seconds. The iteration speed remained static at 2.02 s/it for the first three runs before experiencing a minor variance to 2.00 s/it on the final run.

Because the model weights reside completely inside the physical GPU memory, the system experiences no VRAM paging or dynamic memory streaming over the PCIe bus. The memory allocation remains stable across all test cycles. The execution behavior reflects the baseline characteristics of the Unsloth ComfyUI implementation. This setup utilizes specific GGUF architectures to enforce predictability at the cost of changing the underlying compute mechanics, a trade-off often observed in specialized quantization formats.

Quantitative performance summary

The following matrix consolidates the empirical data collected across both evaluation cycles at the native 1024×1024 pixel resolution.

| Performance Metric | FLUX.2-dev FP8 Mixed Precision (safetensors) | FLUX.2-dev GGUF Q5_K_M (Unsloth) |

|---|---|---|

| Model Size / Staged Memory | 33,813 MB | 23,250.44 MB |

| VRAM Residency Status | Dynamic VRAM Loading (Overflow) | Full VRAM Load (full load: True) |

| Available VRAM Headroom | ~0 GB (Buffer saturated) | ~9.5 GB |

| Cold Start Latency (Run 1) | 55.91 seconds | 71.52 seconds |

| Warm State Execution Range | 20.20 – 25.47 seconds | 40.35 – 40.82 seconds |

| Iteration Speed Range (Warm) | 0.97 – 1.42 s/it (Peak at 1.03 it/s) | 2.00 – 2.02 s/it (Constant) |

| Primary Execution Path | Native Tensor Core FP8 Operations | On-the-fly Software Dequantization |

Architectural interpretation: Pipeline maturity vs. quantization overhead

The diffusion vs. LLM quantization divergence

The discrepancy between memory residency and raw throughput highlights a fundamental architectural difference between autoregressive Large Language Models (LLMs) and diffusion-based image generation models.

In LLM inference, the execution paradigm is heavily memory-bandwidth bound during the decoding phase, where tokens are generated one by one. Reducing the weight footprint via quantization directly accelerates inference by shrinking the data volume passing through the memory bus. This mechanism is well-documented in standard text generation platforms, as seen in the Unsloth Qwen 3.5 GGUF benchmarks and broader LLM inference benchmarks 2026.

Diffusion models like FLUX.2-dev operate under a different computational profile. The model processes large, multi-dimensional tensors across a fixed series of iterations over the entire model graph. The workload shifts between being memory-bound and heavily compute-bound depending on the specific layer and resolution. At a target resolution of 1024×1024 pixels, the dense attention mechanisms and transformer blocks require intensive mathematical computation during each sampling step.

Dequantization bottlenecks and kernel optimization

The GGUF format requires the runtime execution layer to perform on-the-fly dequantization. The quantized blocks must be unpacked into a standard floating-point format (typically FP16 or BF16) before the GPU can execute the matrix multiplications.

This unpacking process introduces a substantial dequantization tax. In the current ComfyUI-GGUF implementation, this dequantization step adds significant compute cycles and introduces thread synchronization overhead. This overhead re-occurs across every layer during all 20 sampling steps.

Conversely, the native FP8 stack within ComfyUI bypasses these intermediate conversion layers. The NVIDIA Blackwell architecture includes specialized hardware-level support for FP8 primitives. The RTX 5090 Tensor Cores ingest the FP8 tensors directly. This direct execution path ensures optimal hardware utilization without software translation penalties.

The performance gap is therefore a reflection of software stack maturity and kernel optimization rather than physical memory limitations. The native FP8 execution path is highly optimized and mapped directly to the Blackwell instruction set.

The GGUF diffusion stack, while functional, lacks equivalent kernel maturity for this hardware generation. The computational overhead of on-the-fly dequantization outweighs the benefits of keeping the model entirely inside physical VRAM. This contrast between raw mathematical precision scaling and quantized execution paths mirrors historical developments in text models, such as those analyzed in the dynamic 4-bit vs FP16/BF16 comparison.

Operational trade-offs: Why GGUF deployment still matters

Despite the throughput advantage of the native FP8 format, quantization formats like GGUF remain highly relevant in production environments. Inference speed is rarely the sole metric when engineering complex local pipelines.

The GGUF Q5_K_M variant reduces the model’s footprint to 23,250.44 MB. On a 32 GB frame buffer like the one featured on the NVIDIA GeForce RTX 5090, this allocation leaves approximately 9.5 GB of VRAM entirely unallocated.

In contrast, the FP8 mixed precision model creates a minor VRAM overflow. This footprint leaves virtually no headroom for additional neural networks within the same execution graph. When building advanced pipelines, this remaining VRAM budget is essential for multi-model composition. ComfyUI workflows often run multiple secondary models concurrently to modify or enhance the base generation at the target 1024×1024 pixel resolution.

This unallocated memory budget can be distributed to several critical pipeline components:

- ControlNet and IP-Adapter stacking: Running multiple conditioning models simultaneously requires significant explicit VRAM allocation during the denoise cycle.

- Neural upscaling and refinement: High-resolution post-processing architectures and tiled latent upscalers require substantial memory pools during execution, a necessity detailed in the ultimate ComfyUI upscale models guide.

- Local LLM integration: Multi-stage generative workflows frequently embed a local LLM node within ComfyUI to handle complex prompt expansion or semantic conditioning before passing tensors to the diffusion model.

Selecting a model format involves a strategic trade-off. FP8 mixed precision maximizes raw computation speed by leveraging the Blackwell Tensor Cores natively.

Conversely, GGUF trades iteration speed to protect the overall memory budget. This architectural flexibility is a key consideration when mapping software demands to hardware provisioning strategies, as explored in the local AI GPU selection guide.

Benchmark limitations and ecosystem trajectory

The performance metrics recorded in this analysis represent a specific technical snapshot of the local AI ecosystem in 2026. These results are bounded by the current software architecture of ComfyUI v0.20.1, the existing implementation state of the Unsloth GGUF diffusion node backend, and the maturity of current NVIDIA Blackwell drivers. They do not represent a permanent performance hierarchy.

The current performance delta is largely driven by software-side implementation lag rather than inherent hardware constraints. The native FP8 execution path benefits from extensive upstream optimization within PyTorch and NVIDIA’s core library stack. In contrast, GGUF support for diffusion architectures is a relatively recent development. The underlying dequantization kernels have not yet been fully optimized or fused to leverage the architectural specificities of the RTX 5090.

Several architectural developments could shift this performance equilibrium in the near future:

- Fused dequantization operators: Integrating kernels that combine dequantization and matrix multiplication directly into a single execution step would significantly reduce memory bandwidth and thread synchronization overhead.

- Optimized GGUF kernels for Blackwell: Tailoring the GGUF runtime to exploit the specific cache hierarchies and warp scheduling mechanics of the Blackwell architecture could narrow the compute gap.

- Native FP4 execution pipelines: The Blackwell architecture includes hardware-level acceleration for sub-8-bit formats. The deployment of native, production-ready FP4 diffusion pipelines could redefine both VRAM efficiency and raw inference throughput, moving beyond the limitations of current FP8 and GGUF implementations as analyzed in the NVFP4 vs FP8 architectural analysis.

Consequently, engineers and power users must view these benchmarks as a transient representation of runtime maturity rather than an absolute baseline for hardware capability.

Conclusion and architectural outlook

This benchmark highlights that local AI inference performance is determined by an intersection of hardware capabilities, data formats, and software runtime maturity. The evaluation of FLUX.2-dev on the NVIDIA GeForce RTX 5090 challenges the assumption that fitting a model entirely within physical VRAM is the sole requirement for achieving optimal throughput.

Under the current ComfyUI v0.20.1 ecosystem, the native FP8 mixed precision format delivers superior generation speeds. It leverages the Blackwell architecture’s native support for lower-precision arithmetic, allowing the RTX 5090 Tensor Cores to operate with high efficiency. Even when the model footprint slightly exceeds the physical 32 GB VRAM limit, the architecture’s internal bandwidth and optimized weight streaming minimize the performance impact of memory offloading.

Conversely, the GGUF Q5_K_M format offers a clear advantage for memory budgeting. By shrinking the model footprint to 23,250.44 MB, it frees up roughly 9.5 GB of VRAM. This headroom is essential for complex, multi-model ComfyUI pipelines that require concurrent loading of ControlNets, IP-Adapters, or high-resolution upscale models. However, this memory savings introduces a computational trade-off. The on-the-fly dequantization required by the current GGUF diffusion stack creates an execution overhead that limits overall iteration speed.

Ultimately, choosing between native FP8 and quantized GGUF is a tactical decision based on pipeline requirements:

- Select FP8 mixed precision when raw generation speed and native hardware utilization are the primary goals.

- Select GGUF quantization when building complex, multi-stage workflows that demand strict VRAM budgeting for auxiliary networks.

As runtime environments evolve and kernel implementations mature, the performance balance between these formats will likely shift. For the current local AI landscape, matching the model format to the native strengths of the underlying hardware remains the most effective path to balancing speed and workflow complexity.

Your comments enrich our articles, so don’t hesitate to share your thoughts! Sharing on social media helps us a lot. Thank you for your support!