LTX-2.3 vs Wan-2.2: Which Open-Source AI Video Model Should You Run Locally in 2026?

The 2026 Sora shutdown was a wake-up call: depending on a single provider for video creation is a strategic risk. Meanwhile, the economics of cloud-based video generation are drifting toward subscription tiers that put professional-grade output out of reach for independent creators and small studios. As proprietary alternatives become overpriced, creators are pivoting to local-first workflows.

The response from the technical community has been unambiguous: local-first video generation is no longer an enthusiast experiment. It’s a production strategy.

But running AI video locally in 2026 means choosing between two fundamentally different philosophies. LTX-2.3, developed by Lightricks, is built on a DiT (Diffusion Transformer) architecture optimized for speed, native vertical output, and integrated audio, the engine for short-form content factories. Wan-2.2, out of Alibaba’s research labs, runs a Mixture-of-Experts (MoE) architecture that trades inference speed for photorealistic texture fidelity and cinematic coherence.

This isn’t a question of which model is objectively better. It’s a question of which model fits your hardware, your output format, and your production pipeline. And in some workflows, the right answer is to use both, in sequence.

One more dimension deserves attention upfront: sustainability. The most capable versions of Wan (2.7+) and future Lightricks engines are already migrating toward API-gated or subscription-only access. The open-weight window may not stay open indefinitely. That context matters when deciding where to invest your workflow engineering time.

1. LTX-2.3: Speed, Native 9:16, and the Audio Question

1.1 Native 9:16 Vertical Composition

LTX-2.3, developed by Lightricks, is an open-weight video model built on a DiT (Diffusion Transformer) architecture, capable of generating synchronized video and audio with native support for the 9:16 portrait format.

That last point is more significant than it might appear. Most video generation models, including LTX’s previous iteration, LTX-2.0, are trained on landscape-oriented data. When asked to produce vertical output, they either crop a 16:9 frame or apply post-processing transformations that introduce compositional artifacts: subjects cut off at the edges, camera movements designed for horizontal panning that look awkward in portrait, depth cues that break down when reframed.

LTX-2.3 generates vertical content with spatial composition and camera motion natively designed for the portrait frame. This means pan, tilt, and zoom behaviors that feel intentional on a smartphone screen rather than adapted from a widescreen source. For creators building automated production pipelines targeting TikTok, Instagram Reels, or YouTube Shorts at scale, this is a structural advantage: it eliminates an entire class of post-processing corrections.

At the current state of the ecosystem, LTX-2.3 is the most capable local model for vertical short-form content automation.

1.2 Native Audio Pipeline, Promises vs. Reality

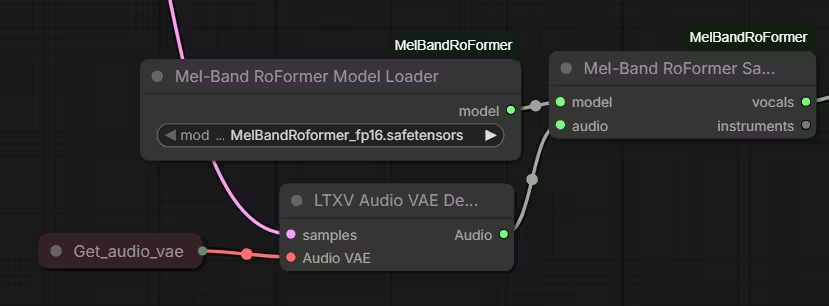

LTX-2.3 includes an integrated audio generation pipeline that produces synchronized sound alongside the video in a single inference pass. The concept is compelling: one model, one workflow, one output. The technical reality requires a more calibrated assessment.

The audio pipeline covers three distinct generation tasks, each with its own quality profile:

- Voice generation. Lip sync and phoneme timing are handled reasonably well: the synchronization mechanics work. The voice quality itself, however, is metallic and affectless. It does not compete with dedicated open-weight TTS models in terms of naturalness or emotional range. For any content where voice is a primary channel (narration, dialogue, explainers), this output requires either replacement or significant post-processing.

- Sound effects and ambience. Environmental audio and SFX generation exists but remains rough. Results are inconsistent across inference runs and lack the precision needed for sound design work.

- Music generation. This is the weakest component of the pipeline. Quality is poor and, more problematically, the model tends to add background music even when the prompt explicitly requests none, a known issue documented in the model’s discussion thread on Hugging Face. Standard workarounds such as excluding music via positive or negative prompts do not work, so post-production removal is mandatory.

The honest operational verdict: treat LTX-2.3’s audio output as draft-quality scaffolding, not a finished asset. It provides a useful timing reference and a rough ambience layer. For professional output, plan for one of two approaches: accept the draft audio for contexts where production quality is not critical (rapid content testing, internal review), or build Audio-to-Video (A2V) workflows where a separately produced audio track drives the video generation process, giving you full control over the sound layer from the outset.

1.3 4K Support, The Local Inference Trap

LTX-2.3 advertises 4K support, but for local inference this is largely a mirage. Native 4K generation saturates local pipelines even on high-end rigs. In practice, LTX-2.3 excels at 720p to 1080p, where it iterates roughly ten times faster than its rivals.

The VRAM and compute demands scale non-linearly with resolution: running at 4K does not simply require more memory than 1080p; it changes the entire throughput profile. On typical workstation hardware, native 4K generation is either infeasible or produces runtimes that eliminate the speed advantage that makes LTX-2.3 worth using in the first place.
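To make the non-linear scaling concrete, here is a rough back-of-the-envelope sketch. It assumes that the token count is proportional to the pixel count and that self-attention cost grows with the square of the token count. Both are simplifications for illustration, not measured LTX-2.3 behavior, and the function name is hypothetical.

```python
# Rough scaling sketch, not a benchmark.
# Assumptions: tokens are proportional to pixels, and attention cost grows
# with the square of the token count.

RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def relative_cost(name: str, baseline: str = "1080p") -> dict:
    """Return pixel and attention-cost ratios of `name` against `baseline`."""
    width, height = RESOLUTIONS[name]
    base_width, base_height = RESOLUTIONS[baseline]
    pixel_ratio = (width * height) / (base_width * base_height)
    return {
        "pixels_vs_baseline": pixel_ratio,
        "attention_vs_baseline": pixel_ratio ** 2,
    }


for name in RESOLUTIONS:
    cost = relative_cost(name)
    print(
        f"{name}: {cost['pixels_vs_baseline']:.2f}x pixels, "
        f"{cost['attention_vs_baseline']:.2f}x attention cost vs 1080p"
    )
```

Under these assumptions, 4K carries 4 times the pixels of 1080p but about 16 times the attention cost, which is why the jump feels like a different throughput regime rather than a bigger memory bill.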

The practical resolution sweet spot for local LTX-2.3 inference is 720p to 1080p. At these resolutions, the model’s inference speed advantage over competitors like Wan-2.2 is substantial: iteration cycles are an order of magnitude faster, which is what makes prompt testing and creative exploration viable.

The path to 4K output runs through post-processing, not native generation. A dedicated upscaling workflow applied after inference, using models purpose-built for video upscaling, produces results that rival native 4K quality at a fraction of the compute cost. See the complete guide to ComfyUI upscale models for a breakdown of available models and their performance characteristics.

1.4 VRAM Optimization

LTX-2.3’s integrated video and audio modules do not run independently. When both are active simultaneously, their combined VRAM footprint can trigger OOM (Out of Memory) errors on GPUs with less than 16 GB, a configuration that still represents a significant portion of workstation hardware in active use.

The core optimization levers are: sequencing audio and video generation as separate passes where VRAM is constrained, applying aggressive attention tiling, and selecting the appropriate quantization for your specific GPU tier. For a systematic approach to matching model configuration to available VRAM, the ComfyUI model size and VRAM guide covers the decision framework in detail.

2. Wan-2.2: Cinematic Photorealism and Its Hardware Cost

2.1 MoE Architecture, What It Actually Delivers

Where LTX-2.3 optimizes for throughput, Wan-2.2 optimizes for fidelity. The architectural choice behind this difference is the Mixture-of-Experts (MoE) design, which routes different aspects of the generation task through specialized sub-networks rather than processing everything through a single unified model.

In practice, this translates to measurably better handling of the elements that define cinematic quality: skin texture consistency across frames, specular highlight behavior on reflective surfaces, volumetric light scattering, and structural coherence in fabric and hair dynamics. These are precisely the areas where DiT-based models like LTX-2.3 tend to produce the soft, slightly plastic aesthetic that reads as “AI video” to a trained eye.

That said, MoE is not a physics simulator. Wan-2.2 has documented limitations with scenarios involving complex mechanical interactions, a glass breaking on impact, a boxing exchange with contact and recoil, or any sequence requiring accurate rigid body dynamics. These scenes tend to produce plausible-looking but physically incorrect motion. Prompt design for Wan-2.2 should account for these boundaries and avoid relying on the model for physical accuracy in high-complexity interactions.

2.2 14B vs. 5B Variants

The Wan-2.2 project ships in two primary configurations, and the choice between them is primarily a hardware decision rather than a quality preference.

14B (full variant). This is the reference configuration for photorealistic output. It handles skin texture gradients, multi-surface reflections, and fluid dynamics with a level of structural coherence that the smaller variant cannot match. The 14B model is the right choice when visual quality is the primary constraint and hardware budget allows it. For deeper context on the architectural decisions behind this generation of models, the LTX-2 Technical Guide provides useful comparative framing on DiT vs. heavier architectures.

5B (optimized variant). This is the practical configuration for constrained VRAM environments. It retains the core MoE routing logic but with reduced parameter depth, which means it loses a significant portion of the fine structural detail that defines the 14B’s output quality. The 5B variant is a survival option for local setups. To get satisfactory results, aim for minimalist compositions or cartoon/illustrated aesthetics. This bypasses the model’s struggle with high-frequency cinematic textures. Attempting to generate complex photorealistic scenes with the 5B variant will surface its limitations quickly.

2.3 Hardware Requirements and Inference Latency

Wan-2.2 is demanding by design. Its MoE routing architecture, while responsible for its quality ceiling, introduces a structural inference latency penalty that does not exist in DiT-based models. Each forward pass involves conditional routing across expert sub-networks, which adds overhead that cannot be fully offset by hardware scaling alone.

The comfortable operational baseline for Wan-2.2 is 24 GB VRAM. At this tier, the 14B model runs without aggressive memory optimization and produces consistent frame-to-frame coherence at target resolutions. Below this threshold, the inference process becomes progressively more dependent on software-side workarounds (attention tiling, sequential module loading, reduced batch sizes), each of which adds latency and introduces potential consistency artifacts.

For a structured approach to configuring Wan-2.2 on hardware below the 24 GB threshold, the ComfyUI model size and VRAM guide covers the specific trade-offs involved.

2.4 GGUF and FP8 Quantization as the Practical Solution

Running the Wan-2.2 14B model on 12–16 GB GPUs requires quantization. This is not a compromise position; it is the standard production approach for the majority of local inference setups, and the community has converged on a set of configurations that preserve most of the model’s quality ceiling while making it operationally viable on mid-range hardware.

Understanding the precision trade-offs before committing to a quantization strategy matters here. The ComfyUI format guide (BF16 / FP16 / FP8 / GGUF) provides a systematic breakdown of what each format costs in terms of visual fidelity and what it returns in terms of memory headroom. For benchmark data specifically on FP8 and NVFP4 behavior in video generation contexts, the LTX-2 technical optimization benchmarks offer directly applicable reference points.

The most robust method for loading Wan-2.2 on constrained hardware remains GGUF quantization within ComfyUI. At Q4_K_M or Q5_K_M quantization levels, the 14B model fits within a 16 GB VRAM envelope with acceptable quality degradation, primarily visible in fine texture detail at high zoom rather than in overall compositional coherence. For most production use cases at 720p output resolution, this trade-off is workable.
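As a rough sanity check on why these quantization levels fit, the weight footprint can be estimated from parameter count and average bits per weight. The sketch below is an approximation: the bits-per-weight figures for Q4_K_M and Q5_K_M are assumed ballpark averages for K-quant formats, not values taken from the Wan-2.2 release. It also counts weights only, so the text encoder, VAE, and activations need extra headroom.

```python
# Weight-only size estimate. Bits-per-weight values are assumed approximations.
BITS_PER_WEIGHT = {
    "BF16": 16.0,
    "FP8": 8.0,
    "Q5_K_M": 5.7,   # assumed average for this K-quant level
    "Q4_K_M": 4.85,  # assumed average for this K-quant level
}


def weight_size_gb(params_billion: float, fmt: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes) for a given format."""
    bits = BITS_PER_WEIGHT[fmt]
    return params_billion * bits / 8


for fmt in BITS_PER_WEIGHT:
    print(f"14B @ {fmt}: ~{weight_size_gb(14, fmt):.1f} GB of weights")
```

With these assumptions, the 14B weights drop from about 28 GB at BF16 to somewhere around 8.5 to 10 GB at Q4_K_M or Q5_K_M, which leaves room for the rest of the pipeline inside a 16 GB envelope.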

3. Technical Comparison Table

The following data reflects observed performance on RTX 50-Series hardware (2026). Values are configuration-dependent and will vary across GPU generations, quantization levels, and workflow implementations.

| Feature | LTX-2.3 | Wan-2.2 |
|---|---|---|
| Architecture | DiT (Diffusion Transformer) | MoE (Mixture-of-Experts) |
| Realistic output resolution | 1080p (Portrait & Landscape) | 720p (upscaling required) |
| Native FPS | 24–30 FPS | 16–24 FPS |
| Max clip length (constrained VRAM) | ~10 seconds | ~5 seconds |
| Recommended VRAM (comfortable) | 16 GB | 24 GB |
| Max clip length (32 GB VRAM) | ~20 seconds | ~15 seconds |
| Integrated audio | Yes (draft quality) | No |
| Primary use case | TikTok, Shorts, rapid iteration | Cinematic, VFX, photorealism |

Observed values, variable depending on GPU, quantization level, and workflow configuration.

4. Choosing by Use Case

The choice of local AI video model should not be driven by benchmark aesthetics alone. The decisive factors are the alignment between your target output format, your available hardware, and your tolerance for post-production overhead. Here are production-grounded recommendations based on current workflows:

TikTok / Shorts / Reels → LTX-2.3. Native 9:16 generation eliminates the framing corrections that cost time at scale. Inference speed makes multi-variant prompt testing viable within a single session. The draft-quality audio layer, while not production-ready, provides a usable timing reference and reduces the iteration cost of A2V workflows. For high-volume short-form pipelines, LTX-2.3 is the clear operational choice.

YouTube / Cinematic projects → Wan-2.2. When the deliverable is 16:9 and photorealism is a baseline requirement, Wan-2.2 is the reference model. Budget for longer render times and build a post-production pipeline that includes external upscaling: the 720p native output is an intermediate asset, not a final deliverable.

Fluid organic animation → Mochi 1. For scenes requiring complex physical motion (cloth dynamics, fluid behavior, organic movement), Mochi 1 frequently outperforms heavier models on motion coherence despite its lower resolution ceiling. Its most effective production role is as a Video-to-Video (V2V) reference input: generate the motion reference in Mochi 1, then drive a higher-fidelity pass in LTX-2.3 or Wan-2.2.

Prompt drafting and staging validation → Pyramid Flow. Before committing to a 15-minute Wan-2.2 render, validate your scene composition, camera motion, and color grading intent with Pyramid Flow. Its near-instant preview generation makes it an indispensable tool for compressing the creative exploration phase: not a production engine, but a way to cut the compute spent on the models that are.
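The recommendations above, combined with the VRAM thresholds from Sections 1 and 2, can be condensed into a small lookup. This is only an illustrative sketch: the goal labels and the `recommend` function are hypothetical names, and the thresholds are the article's guideline figures rather than hard limits.

```python
# Illustrative decision helper; names and labels are hypothetical.
RECOMMENDATIONS = {
    "short_form_vertical": "LTX-2.3",
    "cinematic_16_9": "Wan-2.2",
    "motion_reference": "Mochi 1",
    "draft_preview": "Pyramid Flow",
}


def recommend(goal: str, vram_gb: int) -> str:
    """Map a production goal and VRAM budget to a suggested configuration."""
    model = RECOMMENDATIONS[goal]
    if model == "Wan-2.2":
        if vram_gb >= 24:
            return "Wan-2.2 14B"
        if vram_gb >= 12:
            return "Wan-2.2 14B (GGUF or FP8 quantized)"
        return "Wan-2.2 5B (stylized or minimalist scenes)"
    if model == "LTX-2.3" and vram_gb < 16:
        # Below 16 GB, running audio and video together risks OOM errors.
        return "LTX-2.3 (separate audio and video passes)"
    return model


print(recommend("short_form_vertical", 16))
print(recommend("cinematic_16_9", 16))
```

A lookup like this is mainly useful as a pipeline guard: it makes the hardware assumption explicit before a job is queued instead of discovering it through an out-of-memory error.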

5. The Challengers: Mochi 1 and Pyramid Flow

The LTX-2.3 / Wan-2.2 pairing covers the majority of local video generation use cases in 2026, but the ecosystem extends beyond this core duel. Two additional models appear consistently in production workflow discussions and deserve direct coverage.

Mochi 1 (Genmo): Motion Fidelity as a Specialization

Developed by Genmo, Mochi 1 is not a general-purpose video generation model. It is a motion specialist, a model built around a single design priority: the physical plausibility and temporal smoothness of movement.

The output specifications reflect this focus. Mochi 1 generates clips of approximately 5 seconds at a resolution ceiling around 480p. These are not numbers that position it as a final render engine. No pipeline targeting YouTube or any distribution platform with a resolution floor above SD should rely on Mochi 1 as its output stage.

Where Mochi 1 earns its place is in scenarios where motion quality is the critical variable and resolution is handled downstream. Its architecture produces movement dynamics (cloth behavior under force, fluid surface tension, articulated limb mechanics) that consistently outperform models with significantly higher parameter counts on these specific tasks. The heavier models optimize for texture and light; Mochi 1 optimizes for physics plausibility.

The most effective production use case is as a V2V (Video-to-Video) reference layer: generate the motion blueprint in Mochi 1, then use that output as the conditioning input for a higher-resolution pass in LTX-2.3 or Wan-2.2. This approach combines Mochi 1’s motion coherence with the texture fidelity of the larger models, without requiring either model to do work outside its strength zone.

Pyramid Flow: The Drafting Engine

Pyramid Flow occupies a different niche entirely. Its architecture is optimized for generation speed above all other considerations, producing visual previews at near-instant latency compared to production-grade models.

The operational logic is straightforward: a Wan-2.2 render at 720p can run 10–15 minutes on a 24 GB workstation. Discovering at the end of that render that the camera motion was wrong, the lighting intent didn’t translate, or the subject positioning breaks the composition is an expensive failure mode. Pyramid Flow compresses that feedback loop to seconds.

Use it to validate scene staging, test prompt phrasing, check color grading direction, and confirm that the compositional intent holds before committing compute to a full-quality render. Detail fidelity is insufficient for any professional deliverable, but Pyramid Flow is not competing on that axis. It is a creative iteration accelerator, and its value is measured in Wan-2.2 renders avoided rather than in frames produced.

6. Post-Production Pipeline: Getting to 4K from 720p

Neither LTX-2.3 nor Wan-2.2 should be treated as a turnkey 4K production system in local inference conditions. The practical architecture is a two-stage process: generate at the resolution that matches your hardware’s throughput capabilities, then bring the output to broadcast quality through a dedicated post-processing pipeline. In 2026, this pipeline is mature, well-documented, and fully integrated into ComfyUI.

6.1 RTX Video Super Resolution + ComfyUI Upscaling

The most effective current approach for taking Wan-2.2’s 720p output to 4K combines two complementary tools operating at different stages of the upscaling process.

RTX Video Super Resolution within ComfyUI handles the real-time upscaling layer, leveraging NVIDIA’s tensor core-accelerated VSR pipeline to apply neural upscaling at speeds that make iterative review practical. For final render output, a dedicated upscaling pass using purpose-built ComfyUI models adds the structural detail recovery that VSR’s speed-optimized approach leaves on the table. The complete ComfyUI upscale models guide covers the available model options, their performance profiles, and the use cases each one is optimized for.

Used in combination, these two tools transform a 720p Wan-2.2 output into a sequence that holds up at 4K playback, not by interpolating missing detail, but by reconstructing it from the model’s latent output with significantly better fidelity than classical upscaling algorithms produce.
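For planning purposes, it helps to know how much work the upscaling stage has to cover. The short sketch below computes the linear scale factor between a source and target resolution; the function name is hypothetical, and it assumes both resolutions share the same aspect ratio.

```python
def upscale_factor(src: tuple[int, int], dst: tuple[int, int]) -> float:
    """Linear scale factor from src to dst, assuming matching aspect ratios."""
    src_w, src_h = src
    dst_w, dst_h = dst
    factor_x = dst_w / src_w
    factor_y = dst_h / src_h
    if abs(factor_x - factor_y) > 1e-6:
        raise ValueError("aspect ratios differ; crop or pad before upscaling")
    return factor_x


landscape = upscale_factor((1280, 720), (3840, 2160))
portrait = upscale_factor((720, 1280), (2160, 3840))
print(f"720p to 4K: {landscape}x per axis, {landscape ** 2}x total pixels")
print(f"portrait 720p to portrait 4K: {portrait}x per axis")
```

Going from 720p to 4K is a 3x factor per axis, or 9 times the pixel count, so most of the pixels in the final frame are synthesized by the upscaler. That is the reason the choice of upscaling model matters as much as the generation model for final quality.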

6.2 Frame Interpolation as a Standard Workflow Step

Wan-2.2’s native FPS ceiling of 16–24 frames per second is a structural characteristic of its MoE inference process, not a parameter that can be adjusted at generation time without significant compute cost. The standard solution, now effectively a default step in production ComfyUI workflows, is post-generation frame interpolation.

The ComfyUI Video Frame Interpolation template supports two models that have become community standards for this task:

FILM (Frame Interpolation for Large Motion, Google Research) is optimized for sequences involving significant inter-frame displacement: fast camera movement, rapid subject motion, action sequences. Its interpolation algorithm is specifically designed to handle large motion vectors without the ghosting artifacts that affect simpler interpolation approaches.

Practical-RIFE is the more broadly applicable option for general-purpose FPS multiplication. It operates with lower compute overhead than FILM and produces clean results across a wide range of motion types, making it the default choice for most workflows where motion complexity is not the primary concern.

Both models are available through Comfy-Org’s hosted model repository. The practical workflow is straightforward: generate at the model’s native FPS, then apply an interpolation pass to double or quadruple the frame rate. A Wan-2.2 output at 16 FPS becomes 32 FPS after one 2x interpolation pass and 64 FPS after two, enough to produce fluid, cinematic motion from source material that would otherwise read as choppy at standard playback speeds.
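The frame-rate arithmetic can be sketched as follows. It assumes each pass is a 2x interpolation that inserts one synthesized frame between every adjacent pair of frames; the function name is hypothetical and no FILM or RIFE API is involved.

```python
def interpolate_passes(frames: int, fps: float, passes: int) -> tuple[int, float]:
    """Frame count and frame rate after a number of 2x interpolation passes.

    Assumption: each pass inserts one new frame between every adjacent pair,
    so n frames become 2n - 1 and the playback rate doubles.
    """
    for _ in range(passes):
        frames = 2 * frames - 1
        fps *= 2
    return frames, fps


# A 5-second Wan-2.2 clip at 16 FPS is 80 frames.
for passes in (0, 1, 2):
    frames, fps = interpolate_passes(80, 16, passes)
    print(f"{passes} pass(es): {frames} frames at {fps} FPS")
```

Note that the clip duration stays essentially the same: interpolation adds frames between existing ones, so it changes smoothness, not length.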

The key limitation to account for is motion blur consistency: interpolated frames are synthesized, not captured, which means fast motion sequences can exhibit subtle blending artifacts at the interpolation boundaries. For most content types (standard camera movement, moderate subject motion, environmental sequences), this is not operationally significant. For sequences with extreme motion vectors, FILM’s large-motion optimization reduces this effect considerably.

6.3 Staying Current with the Ecosystem

The tooling covered in this section is the current production standard, but ComfyUI’s upscaling and interpolation ecosystem evolves rapidly. New model releases, quantization improvements, and workflow templates appear on a cadence that makes periodic review worthwhile for any production pipeline.

The ComfyUI best sources and ecosystem resources guide tracks the primary community hubs where these developments surface. For model discovery specifically, the find and download best ComfyUI models guide covers the repositories and curation sources worth monitoring on a regular basis.

The Human Touch: Local AI video is a viable solution, but its success depends on knowing the models’ hard limits. Beyond the technical workflow, the editing phase remains essential. Tools like CapCut are well suited to adding the dynamic pacing, text overlays, and human sensibility that differentiate your content from raw AI output.

7. Open Source Sustainability: Window of Opportunity or Durable Foundation?

Sora’s shutdown demonstrated something the AI video space had not yet been forced to confront directly: no proprietary platform is structurally committed to your workflow continuity. The economics of generative video at scale are brutal, and when the unit economics stop working, the product stops existing, regardless of how deeply it has been integrated into production pipelines. The strategic analysis of OpenAI’s decision covers the underlying dynamics in detail, but the operational conclusion is simple: owning your model weights is the only durable insurance against platform dependency.

That argument for open-weight models is straightforward. What requires more careful examination is whether the open-weight ecosystem itself remains genuinely open as it matures.

The Hybrid Model Migration Pattern

A pattern is now clearly established across multiple model families. The research release, the version that generates community adoption, benchmarks, and ecosystem tooling, is open-weight. The production-capable successor migrates toward a “Lite stays free” architecture: a reduced-capability open variant that serves as a demonstration layer, while the models that actually matter for professional output move behind API access or subscription tiers.

Wan 2.7 is the clearest current example. The capabilities that made Wan-2.2 a reference model for local photorealistic inference are not carried forward into the open-weight release of its successor. Future Lightricks engine generations are tracking the same trajectory. The open-weight releases at the frontier are becoming increasingly thin, functional enough to maintain community engagement, insufficient for the output quality that professional pipelines require.

This is not a criticism of the organizations involved. The compute costs of training and serving frontier video models are not recoverable through goodwill. It is, however, a structural reality that local inference practitioners need to account for in their workflow planning.

The Strategic Implications for Local Pipelines

The practical consequence of this migration pattern is that the current generation of open-weight models (LTX-2.3 and Wan-2.2 at 14B) represents a high-water mark for what is available without API dependency. This is not necessarily permanent; the open-source ecosystem has repeatedly closed quality gaps that appeared insurmountable. But the timeline for the next generation of genuinely capable open-weight video models is not predictable.

This context strengthens the case for investing workflow engineering time in the post-production pipeline described in Section 6 rather than waiting for better generation models. A well-constructed upscaling and interpolation stack extends the productive lifespan of current open-weight models significantly. A Wan-2.2 output processed through RTX VSR, a dedicated upscaling pass, and FILM or RIFE interpolation produces results that remain competitive with API-gated alternatives at a fraction of the per-generation cost, and with no dependency on a platform’s continued commercial viability.

The durable architecture for local AI video production in 2026 is not a single powerful model. It is a modular pipeline: a generation layer that you own outright, combined with post-processing tooling that compounds its output quality, running on hardware that you control. The individual components of that pipeline will be replaced as better options emerge. The architecture itself, local generation, local post-processing, no platform dependency, is the stable investment.

Monitoring the Ecosystem

The open-weight landscape shifts faster than any static guide can track. Keeping a reliable signal on new model releases, quantization developments, and workflow innovations requires monitoring the right sources consistently. The ComfyUI best sources and ecosystem resources guide is the starting point for building that monitoring practice.

FAQ: AI Video Models for Local Inference in 2026

What is the best open-source AI video model in 2026?

The answer depends entirely on your production target. For vertical short-form content (TikTok, Instagram Reels, YouTube Shorts), LTX-2.3 is the leading option: native 9:16 generation, fast inference, and an integrated audio pipeline make it the most operationally efficient model for high-volume short-form workflows. For projects requiring photorealistic output in 16:9 (cinematic sequences, VFX work, high-production YouTube content), Wan-2.2 remains the quality reference despite its higher hardware requirements and slower inference. Neither model is universally superior; the correct choice is the one that aligns with your output format and hardware configuration.

What is the fundamental architectural difference between LTX-2.3 and Wan-2.2?

LTX-2.3 uses a Diffusion Transformer (DiT) architecture, optimized for inference speed and format flexibility, including native portrait output and integrated audio generation. Wan-2.2 is built on a Mixture-of-Experts (MoE) architecture, which routes generation tasks through specialized sub-networks to achieve higher fidelity in texture, lighting, and structural coherence, at the cost of significantly higher compute overhead and slower inference. The architectural difference is not incidental: it explains every major performance characteristic that separates the two models in production conditions.

What is the maximum video duration these models can generate?

In a single inference pass, both models produce between 5 and 20 seconds of usable output depending on available VRAM, at the native frame rates listed in the comparison table (roughly 16–30 FPS). Exceeding this range is possible through Context Shifting in ComfyUI, a technique that slides an attention window across a longer timeline to maintain temporal coherence beyond the model’s native context length. The practical limitation of this approach is semantic drift: character details, lighting consistency, and structural elements can degrade progressively over extended sequences as the attention window moves away from the conditioning frames.
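The sliding-window idea can be illustrated with a generic scheduling sketch. This is not ComfyUI’s implementation: the function name, window size, and overlap are hypothetical, and the code only shows how overlapping frame ranges could tile a timeline longer than one context window.

```python
def sliding_windows(total_frames: int, window: int, overlap: int) -> list[tuple[int, int]]:
    """Split a timeline into overlapping [start, end) frame ranges.

    Generic illustration only; the overlap is what lets each window see
    some frames from its predecessor to help maintain coherence.
    """
    if not 0 <= overlap < window:
        raise ValueError("overlap must be non-negative and smaller than window")
    stride = window - overlap
    windows = []
    start = 0
    while True:
        end = min(start + window, total_frames)
        windows.append((start, end))
        if end == total_frames:
            break
        start += stride
    return windows


# Hypothetical values: a 200-frame timeline, 81-frame windows, 16-frame overlap.
print(sliding_windows(200, 81, 16))
```

The further a window sits from the first one, the more steps separate it from the original conditioning frames, which is one way to picture why drift accumulates over long sequences.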

For fluidity rather than duration, frame interpolation via FILM or Practical-RIFE is the more reliable lever. Multiplying the native FPS output through post-generation interpolation produces smoother motion and a more cinematic feel without the coherence risks associated with extended single-pass generation.

Is the audio output from LTX-2.3 production-ready?

Not for most professional use cases. LTX-2.3 is currently the only local open-weight model with a serious native audio pipeline, but its output quality sits firmly in the draft tier: synchronization mechanics are functional, voice quality is metallic and lacks emotional range, SFX generation is inconsistent, and music suppression via negative prompting is unreliable. The audio layer is most useful as a timing reference and rough ambience guide, a starting point for sound design rather than a finished asset. For production-quality audio, plan for either a dedicated post-production pass using specialized tools or an Audio-to-Video (A2V) workflow where a separately produced audio track drives the generation process from the outset.
