Architecting a Sovereign Search Engine: High-Performance SearXNG Deployment

1. Introduction: The Case for Local Search Orchestration

The modern search landscape is caught in a pincer movement. On one side, mainstream engines have become “advertising platforms that happen to index the web,” prioritizing SEO-optimized content farms over technical depth. On the other, the rise of AI-driven search models introduces a new layer of data harvesting and “black-box” hallucination. For developers and privacy engineers, the response to this degradation is Search Orchestration.

The goal is no longer just to find information, but to control the infrastructure of discovery. By deploying a local search orchestrator, you decouple your intent from the data broker’s profile. You gain the ability to filter out low-signal domains (Pinterest, TikTok, AI-generated listicles) while boosting high-signal repositories like GitHub, StackOverflow, or specialized documentation.

This guide moves beyond the “set it and forget it” tutorials. We will treat search as a critical piece of self-hosted infrastructure, focusing on the deployment of SearXNG, the current standard for open-source metasearch, to build a curated, high-performance search layer that approximates the quality and privacy of premium services like Kagi, but under your total control.

2. SearXNG vs. Kagi: Structural Disparities and Synergies

To build an effective search infrastructure, one must first understand the fundamental difference between a metasearch engine and a search service that maintains its own index.

The Index vs. The Aggregator

Kagi is built on a hybrid infrastructure. It maintains its own crawlers and proprietary indexes—Teclis (web) and TinyGem (news)—which it supplements with anonymized API calls to traditional giants (Google, Bing) and specialized sources (Brave, Mojeek). This allows Kagi to perform “index-level” optimizations, such as ad-density detection and deep link pruning, before the results even reach the ranking stage.

SearXNG, by contrast, is a stateless aggregator. It does not crawl the web or store a permanent index. When you submit a query, SearXNG acts as a router, dispatching that query in parallel to the upstream “engines” enabled for the selected categories. The project ships far more than 70 engine definitions, but only the enabled subset is contacted for any given query. The magic, and the engineering challenge, lies in how it normalizes these disparate data formats into a single, coherent results page.

Ranking Philosophy: Proprietary vs. Configurable

- Kagi’s Ranking: Relies on a centralized, closed-source pipeline that emphasizes “non-commercial” signals and user-defined “Lenses.”

- SearXNG’s Ranking: Entirely decentralized and transparent. Every engine in SearXNG has a weight parameter. If you trust Brave Search more than Google, you simply increase its weight in your configuration.

The “Kagi-like” Goal: What can we actually replicate?

We cannot replicate Kagi’s proprietary index or its native AI summarizers (like the Universal Summarizer) purely through SearXNG. However, we can approximate the Kagi experience by:

- Eliminating the Noise: Removing ad-heavy engines and tracking-riddled sources.

- Domain Orchestration: Using plugins to boost technical hubs (GitHub, StackOverflow) and bury SEO-spam (Pinterest, TikTok).

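- Strict Privacy: Keeping query logs and telemetry entirely on hardware you control, which a managed service such as Kagi cannot technically guarantee to the same extent as a local Docker instance. Upstream engines still receive each query, but from your server rather than your browser, and without your cookies or account.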

In short, SearXNG allows you to build a curated search gateway that uses the world’s most powerful indexes as raw material, filtered through your own engineering logic.

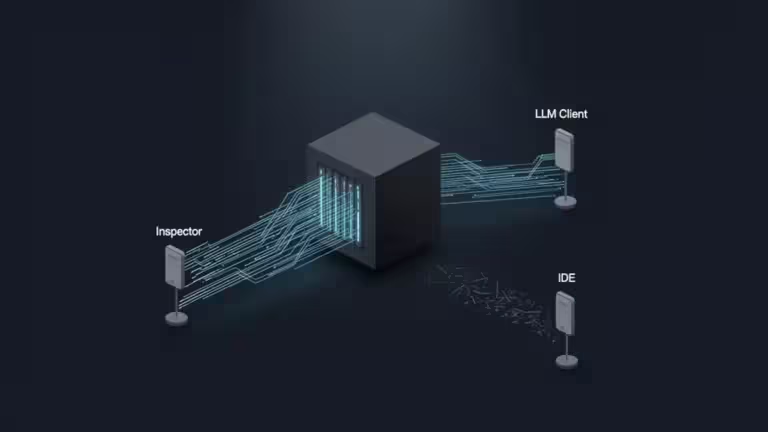

3. Technical Architecture: The Query Lifecycle

Understanding the SearXNG query flow is essential for diagnosing latency and optimizing result quality. Unlike a single-endpoint search engine, SearXNG operates as a high-concurrency orchestrator.

The Request Pipeline

When a user submits a query, the system executes a multi-stage lifecycle:

- Query Parsing & Routing: The internal router analyzes the query for category triggers (e.g., !github or !images). It selects the active engines based on your settings.yml and the user’s preferences.

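- Concurrency Dispatch: SearXNG sends HTTP requests to all selected upstream engines in parallel, each bounded by its own timeout, so one slow engine does not stall the whole page. The application itself runs behind an application server (typically uWSGI or Granian in production) that provides the pool of worker processes.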

- Engine-Specific Scraping: Each engine module (e.g., google.py, brave.py) receives the raw response. Since many engines do not provide official APIs, SearXNG uses specialized scrapers to extract data from HTML structures or JSON blobs.

- Result Normalization: Disparate data—titles, URLs, snippets, and thumbnail paths—are mapped into a unified internal object.

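- Scoring & Merging: This is the critical “ranking” phase. SearXNG calculates a score for each result based on its position in each source that returned it and on that engine’s configured weight.

- The Weighting Formula (simplified): $\text{Score}(r) = \sum_{e \in E_r} \frac{w_e}{p_{e,r}}$, where $E_r$ is the set of engines that returned result $r$, $w_e$ is the configured weight of engine $e$, and $p_{e,r}$ is the 1-based position of $r$ in that engine’s list. Treat this as a model of the behavior; the exact arithmetic in the SearXNG source may differ in detail.

- If a result appears in multiple engines, its scores are summed, naturally boosting its visibility.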

- Template Rendering: The final list is passed to the UI (Jinja2 templates) and served to the user.
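The scoring and merging step can be sketched in a few lines of Python. This is a minimal illustration of the simplified weighting formula from the list above, not SearXNG’s actual implementation; the function name `merge_and_score` and the example URLs are hypothetical.

```python
from collections import defaultdict


def merge_and_score(engine_results, weights):
    """Merge ranked URL lists from several engines into one scored list.

    engine_results: {engine_name: [url, ...]} with each list in ranked order.
    weights:        {engine_name: weight}; engines not listed default to 1.0.
    """
    scores = defaultdict(float)
    for engine, urls in engine_results.items():
        weight = weights.get(engine, 1.0)
        for position, url in enumerate(urls, start=1):
            # Each appearance contributes weight / position; duplicates add up.
            scores[url] += weight / position
    # Highest score first.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


results = merge_and_score(
    {
        "google": ["https://a.example", "https://b.example"],
        "brave": ["https://b.example", "https://c.example"],
    },
    {"google": 1.0, "brave": 1.5},
)
for url, score in results:
    print(f"{score:.2f}  {url}")
```

In this example the page returned by both engines outranks the page that only one engine placed first, which is the “summing” effect described above.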

The Scraper Problem: IP Reputation

The most significant engineering hurdle in this architecture is the IP-based rate limit. Upstream engines (Google, Bing) can detect a surge of queries from a single Data Center IP (your VPS) and respond with CAPTCHAs or 403 Forbidden errors. In Section 7, we will discuss how to mitigate this using rotation and proxy logic.

4. Step-by-Step Deployment: The Production-Ready Stack

A naive docker run is insufficient for a search engine that aims to be a daily driver. To achieve “Kagi-level” responsiveness and reliability, we must add a rate-limiting store and a security-hardened configuration.

The Architecture Components

- SearXNG Core: The main application logic.

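- Redis (or Valkey): An in-memory store that SearXNG uses for its built-in limiter (rate limiting and bot detection) and for small pieces of shared state. It does not cache result pages and it does not hold user sessions; user preferences live in browser cookies.

- Filtron (Legacy, Optional): A Go-based filtering proxy that older searx deployments placed in front of the application to throttle bots. Current SearXNG releases ship a built-in limiter (server.limiter: true, backed by Redis/Valkey) that covers the same ground, so Filtron is mainly relevant if you already run it.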

Docker Compose Configuration

Create a docker-compose.yml that isolates the network, drops unneeded Linux capabilities, and caps log size. This setup uses the official SearXNG image and a Redis-compatible sidecar.

```yaml
# The top-level "version" key is obsolete in Compose v2 and is omitted.
services:
  redis:
    container_name: searxng-redis
    image: valkey/valkey:7-alpine # Valkey is an open-source fork of Redis
    command: valkey-server --save 60 1 --loglevel warning
    networks:
      - searxng_net
    restart: always

  searxng:
    container_name: searxng
    image: searxng/searxng:latest
    networks:
      - searxng_net
    ports:
      - "127.0.0.1:8080:8080" # Bind to localhost; use a reverse proxy for HTTPS
    volumes:
      - ./searxng:/etc/searxng:rw
    environment:
      - SEARXNG_SETTINGS_PATH=/etc/searxng/settings.yml
    depends_on:
      - redis
    cap_drop:
      - ALL
    cap_add:
      - CHOWN
      - SETGID
      - SETUID
    logging:
      driver: "json-file"
      options:
        max-size: "10m"
        max-file: "3"
    restart: always

networks:
  searxng_net:
    driver: bridge
```

Initializing the Environment

Before launching, you must generate a unique secret_key. SearXNG uses this key for cryptographic signing (for example, of image-proxy URLs), so it must be random and must stay private.

```bash
# Create the config directory
mkdir -p ./searxng

# Generate a 64-character hex secret key (32 random bytes)
export SEARX_SECRET_KEY=$(openssl rand -hex 32)

# Write a basic settings.yml (this overwrites any existing file)
cat <<EOF > ./searxng/settings.yml
use_default_settings: true
server:
  secret_key: "${SEARX_SECRET_KEY}"
  base_url: https://search.yourdomain.com/
redis:
  url: redis://redis:6379/0
EOF
```
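If you prefer not to rely on shell variable expansion inside a heredoc, the same bootstrap file can be rendered from Python. The following is a minimal sketch under the assumptions of this guide (the `redis` service name and the placeholder domain); the helper names `render_settings` and `write_settings` are hypothetical and not part of SearXNG.

```python
import secrets
from pathlib import Path


def render_settings(base_url, store_url="redis://redis:6379/0"):
    """Return a minimal settings.yml body with a freshly generated secret."""
    secret_key = secrets.token_hex(32)  # 32 random bytes -> 64 hex characters
    return (
        "use_default_settings: true\n"
        "server:\n"
        f'  secret_key: "{secret_key}"\n'
        f"  base_url: {base_url}\n"
        "redis:\n"
        f"  url: {store_url}\n"
    )


def write_settings(directory, base_url):
    """Write settings.yml into `directory`, refusing to overwrite an existing file."""
    path = Path(directory) / "settings.yml"
    path.parent.mkdir(parents=True, exist_ok=True)
    if path.exists():
        raise FileExistsError(f"{path} already exists; remove it first")
    path.write_text(render_settings(base_url), encoding="utf-8")
    return path


if __name__ == "__main__":
    # Preview only; call write_settings("./searxng", ...) to create the file.
    print(render_settings("https://search.yourdomain.com/"))
```

Unlike the heredoc, `write_settings` refuses to overwrite an existing file, which protects a settings.yml you have already tuned.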

By binding the port to 127.0.0.1, we ensure that the instance is not accessible directly from the internet. You should use a reverse proxy like Caddy or Nginx to handle TLS termination (HTTPS), which is a hard requirement for modern browser search engine integration.

5. Configuring the Engine: settings.yml Deep Dive

The settings.yml file is the brain of your instance. To achieve a high-signal, “Kagi-style” experience, we must move away from the “search everything” default and toward a curated selection of reliable engines.

The Core Engine Selection

A major factor in search “quality” is the aggressive removal of engines that prioritize ads or have poor privacy track records. For a developer-centric setup, we recommend a mix of general, technical, and privacy-first sources.

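In your settings.yml, you should explicitly define and weight your engines. With use_default_settings: true, entries under engines: are merged into the defaults by name, so you only list what you want to change; set disabled: true on any engine you want to drop: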

```yaml
engines:
  - name: google
    engine: google
    weight: 1.0
    timeout: 3.0

  - name: brave
    engine: brave
    weight: 1.5 # Boost Brave for its independent index
    shortcut: br

  - name: mojeek
    engine: mojeek
    weight: 1.2
    shortcut: mj

  - name: github
    engine: github
    weight: 2.0
    categories: it
    shortcut: gh

  # Stack Overflow is served by the generic stackexchange engine
  - name: stackoverflow
    engine: stackexchange
    api_site: stackoverflow
    weight: 1.5
    categories: it
```

Refining the Search Logic

To improve the UI and prevent “search leakage,” adjust the following global parameters:

- search.safe_search: Set to 1 (Moderate) or 2 (Strict) depending on your environment.

- search.autocomplete: Change to duckduckgo or brave. Avoid google to prevent sending keystrokes to their servers as you type.

- search.default_lang: Set this to your primary search language (e.g., en-US or fr-FR) so that engines favor results for that locale. The separate ui.default_locale key only changes the language of the interface.

- server.image_proxy: Set to true. This routes all thumbnail and image results through your SearXNG instance, preventing the upstream source from seeing your IP when the browser loads images.

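Connecting the Limiter Store (Redis)

Ensure SearXNG is actually using the Redis container we deployed in the previous step. Note that this store is not a result cache: SearXNG sends every search to the upstream engines again. The store backs the built-in limiter (enabled with server.limiter: true), which rate-limits abusive clients:

```yaml
redis:
  url: redis://redis:6379/0
```

Recent SearXNG releases rename this key to valkey: (with a valkey:// URL), so check the settings reference for the version you run. By keeping bots off your instance, the limiter reduces the volume of requests leaving your IP, and with it the likelihood of being flagged by Google’s anti-bot systems.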

6. Advanced Relevance Tuning: Beyond Default Weights

A common misconception is that SearXNG supports a native boost or block syntax in the same way a commercial engine does. In reality, fine-grained domain control—a hallmark of the Kagi experience—is achieved through the Hostnames Plugin and Engine Weighting.

The “Hostnames” Plugin: Your Content Filter

The Hostnames plugin is the most powerful tool for shaping your search results. It allows you to modify the score of specific domains or remove them entirely.

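Add the following to your settings.yml. The hostnames lists take regular expressions that are matched against each result’s hostname, and the key that drops results entirely is remove:

```yaml
# Recent releases enable plugins by class path; older releases instead list
# the plugin by name under enabled_plugins (for example 'Hostnames plugin').
plugins:
  searx.plugins.hostnames.SXNGPlugin:
    active: true

hostnames:
  # Boost high-authority technical domains
  high_priority:
    - '(.*\.)?github\.com$'
    - '(.*\.)?stackoverflow\.com$'
    - 'docs\.python\.org$'
    - 'en\.wikipedia\.org$'

  # Deprioritize or "bury" SEO-heavy domains
  low_priority:
    - '(.*\.)?pinterest\.com$'
    - '(.*\.)?quora\.com$'
    - '(.*\.)?softonic\.com$'

  # Remove malicious or irrelevant domains from the results entirely
  remove:
    - '(.*\.)?tiktok\.com$'
    - '(.*\.)?expert-sex-signals\.com$'
```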
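To make the effect of these lists concrete, here is a small standalone Python sketch of the same idea: classify each result by hostname, drop removed hosts, and reorder the rest. It illustrates the concept only and is not the plugin’s code. The `RULES` table and the `classify` and `apply_rules` helpers are hypothetical, and the sketch deliberately uses `re.fullmatch` so that a host such as notgithub.com does not match the github.com pattern.

```python
import re
from urllib.parse import urlparse

# Hypothetical rule table mirroring the structure of the hostnames section.
RULES = {
    "high_priority": [r"(.*\.)?github\.com$", r"(.*\.)?stackoverflow\.com$"],
    "low_priority": [r"(.*\.)?pinterest\.com$"],
    "remove": [r"(.*\.)?tiktok\.com$"],
}


def classify(url, rules=RULES):
    """Return 'remove', 'high_priority', 'low_priority' or 'normal' for a URL."""
    host = urlparse(url).hostname or ""
    for action in ("remove", "high_priority", "low_priority"):
        if any(re.fullmatch(pattern, host) for pattern in rules.get(action, [])):
            return action
    return "normal"


def apply_rules(urls, rules=RULES):
    """Drop removed hosts, then order: high priority, normal, low priority."""
    order = {"high_priority": 0, "normal": 1, "low_priority": 2}
    labelled = [(url, classify(url, rules)) for url in urls]
    kept = [(url, label) for url, label in labelled if label != "remove"]
    # sorted() is stable, so the original ranking is preserved within each group.
    return [url for url, label in sorted(kept, key=lambda pair: order[pair[1]])]


print(apply_rules([
    "https://www.pinterest.com/pin/1",
    "https://example.org/article",
    "https://www.tiktok.com/@user",
    "https://gist.github.com/snippet",
]))
```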

Strategic Weighting for Developer Workflows

To approximate Kagi’s “Lenses” (specialized search views), use SearXNG’s category system combined with shortcuts. Instead of a generic search, you can force the orchestrator to prioritize technical indexes:

- IT Category: Confirm that github, stackoverflow, and the Arch Linux wiki engine are enabled and belong to the it category (check each engine’s categories entry in settings.yml).

- The Result: Querying !it docker networking ensures that SearXNG only queries the engines in the it category, effectively creating a “Developer Lens.”
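The routing behind such a “lens” can be illustrated with a simplified Python sketch. This is not SearXNG’s real query parser; the engine table, the shortcut map, and the `route` function are hypothetical and only mirror the examples used in this guide.

```python
# Hypothetical engine table: names mirror this guide's settings.yml example.
ENGINE_CATEGORIES = {
    "google": {"general"},
    "brave": {"general"},
    "github": {"it"},
    "stackoverflow": {"it"},
}
SHORTCUTS = {"gh": "github", "br": "brave"}
ALL_CATEGORIES = set().union(*ENGINE_CATEGORIES.values())


def route(query, default_category="general"):
    """Split a query into (selected engine names, remaining search terms)."""
    engines, categories, terms = set(), set(), []
    for token in query.split():
        bang = token[1:] if token.startswith("!") else None
        if bang in SHORTCUTS:            # e.g. !gh
            engines.add(SHORTCUTS[bang])
        elif bang in ENGINE_CATEGORIES:  # e.g. !github
            engines.add(bang)
        elif bang in ALL_CATEGORIES:     # e.g. !it
            categories.add(bang)
        else:                            # ordinary search term
            terms.append(token)
    if not engines and not categories:
        categories.add(default_category)
    for name, engine_categories in ENGINE_CATEGORIES.items():
        if engine_categories & categories:
            engines.add(name)
    return sorted(engines), " ".join(terms)


print(route("!it docker networking"))
print(route("!gh searxng"))
```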

Why “result_filters” is a Myth

Earlier versions of many community guides suggested using a result_filters key in settings.yml. It is important to clarify: this is not a native feature of the SearXNG core. If you require logic beyond simple domain blocking (e.g., filtering results based on the presence of specific keywords in the snippet), you would need to develop a custom Python plugin within the searx/plugins/ directory. For most users, the Hostnames plugin is sufficient.
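The core of such a keyword filter is a plain predicate over a result. The sketch below shows only that predicate and assumes, for illustration, that a result is a mapping with title and content fields; the phrase list and the `keep_result` name are hypothetical. In a real plugin this logic would sit inside the plugin’s result hook, whose exact signature depends on your SearXNG version.

```python
# Hypothetical phrases that mark a snippet as low-signal.
BLOCKED_PHRASES = ("sponsored", "top 10 best")


def keep_result(result, blocked=BLOCKED_PHRASES):
    """Return True to keep a result, False to drop it.

    `result` is assumed to be a mapping with optional 'title' and 'content' keys.
    """
    text = f"{result.get('title', '')} {result.get('content', '')}".lower()
    return not any(phrase in text for phrase in blocked)


sample = [
    {"title": "Docker networking overview", "content": "Bridge and overlay drivers."},
    {"title": "Top 10 Best VPNs", "content": "Sponsored picks for this year."},
]
print([r["title"] for r in sample if keep_result(r)])
```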

7. Overcoming the “Wall”: Proxies, CAPTCHAs, and Maintenance

The primary challenge of a self-hosted search engine is the “IP Reputation Wall.” Major engines like Google and Bing deploy aggressive anti-bot measures designed to block non-residential traffic. If you host SearXNG on a popular VPS provider (AWS, DigitalOcean, Hetzner), you will inevitably encounter the dreaded 429 Too Many Requests or CAPTCHA challenges.

Mitigation Strategies

To maintain a reliable “Kagi-style” uptime, you must implement one or more of the following infrastructure layers:

- Rotating Residential Proxies: The most effective but costly solution. By routing requests through a proxy pool (e.g., Bright Data, Oxylabs), SearXNG appears as a standard home user.

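- The outgoing Settings: The outgoing section of settings.yml controls how SearXNG reaches upstream engines. outgoing.proxies routes requests through an HTTP or SOCKS proxy, and outgoing.source_ips binds them to a specific local address. If your server is connected to a VPN with a dedicated IP or a rotating exit node, you can send SearXNG traffic through that tunnel.

- Limiter Integration: Enabling the built-in limiter (or Filtron on legacy setups) allows you to throttle aggressive bots that might be using your instance, preserving your IP’s “quota” for your own searches.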

- Fallthrough Logic: In settings.yml, ensure you have enough diverse engines (Brave, Mojeek, Wikipedia) that do not block VPS IPs. This ensures that even if Google blocks you, you still receive a viable results page.
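The fallthrough idea can be sketched as simple bookkeeping: when an engine answers with a blocking status, suspend it for a growing interval and keep serving results from the rest. SearXNG has its own suspension handling, so this standalone Python sketch only illustrates the principle; the `EngineHealth` class, the delays, and the choice of 403 and 429 as blocking statuses are assumptions.

```python
import time

BLOCK_STATUSES = {403, 429}  # assumed signals that an upstream is blocking us


class EngineHealth:
    """Track temporarily suspended engines with exponential backoff."""

    def __init__(self, base_delay=60.0, max_delay=3600.0, clock=time.monotonic):
        self.base_delay = base_delay
        self.max_delay = max_delay
        self.clock = clock
        self._failures = {}
        self._suspended_until = {}

    def record(self, engine, status):
        """Record the HTTP status of the latest response from `engine`."""
        if status in BLOCK_STATUSES:
            failures = self._failures.get(engine, 0) + 1
            self._failures[engine] = failures
            # Double the suspension on each consecutive block, up to max_delay.
            delay = min(self.base_delay * 2 ** (failures - 1), self.max_delay)
            self._suspended_until[engine] = self.clock() + delay
        else:
            # Any non-blocking response clears the engine's history.
            self._failures.pop(engine, None)
            self._suspended_until.pop(engine, None)

    def available(self, engines):
        """Return the engines that are not currently suspended."""
        now = self.clock()
        return [e for e in engines if self._suspended_until.get(e, 0.0) <= now]


health = EngineHealth()
health.record("google", 429)
print(health.available(["google", "brave", "mojeek"]))  # google is skipped for now
```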

Engine Maintenance

Upstream engines frequently change their HTML structure to break scrapers. As a SearXNG operator, you must adopt a “DevOps” mindset:

- Monitor /stats: Check your instance’s internal statistics page regularly to identify which engines are failing.

- Stay Updated: Run docker compose pull followed by docker compose up -d weekly. The SearXNG community reacts quickly; when an upstream change breaks a scraper, a fix usually reaches the latest image within days.

8. Production Considerations and Hardening

Exposing a SearXNG instance to the public internet without hardening is a liability. For a production-grade deployment, follow these security protocols:

TLS and Reverse Proxy

Never serve SearXNG over raw HTTP. Use Caddy or Nginx to handle HTTPS. Caddy is particularly recommended for its automatic certificate management:

```
search.yourdomain.com {
    reverse_proxy localhost:8080
}
```

Instance Secrecy

Unless you intend to run a public service, keep your instance private: do not submit it to public instance lists, and restrict who can reach it.

- Disable the API (search.formats: [html]) to prevent third-party bots from consuming your resources.

- Use a firewall (UFW/iptables) to restrict access to the Docker ports, allowing only your reverse proxy or your local IP to connect.
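After restricting the output formats, it is worth confirming that the JSON endpoint is really closed. The Python sketch below assumes that an instance rejects a disabled format with HTTP 403 and that it is reachable at the address used in this guide; the helper names `search_url` and `format_is_disabled` are hypothetical.

```python
import urllib.error
import urllib.parse
import urllib.request


def search_url(base_url, query, fmt):
    """Build a search URL that requests a specific output format."""
    params = urllib.parse.urlencode({"q": query, "format": fmt})
    return f"{base_url.rstrip('/')}/search?{params}"


def format_is_disabled(base_url, fmt="json", opener=urllib.request.urlopen):
    """Return True if the instance refuses `fmt` (assumed to answer HTTP 403)."""
    try:
        with opener(search_url(base_url, "test", fmt), timeout=10):
            return False  # The request succeeded, so the format is still served.
    except urllib.error.HTTPError as err:
        return err.code == 403


if __name__ == "__main__":
    try:
        print(format_is_disabled("http://127.0.0.1:8080"))
    except OSError as exc:  # instance not running or not reachable
        print(f"could not reach instance: {exc}")
```

The `opener` parameter exists so the check can be exercised without a running instance, for example by passing a stub that raises an HTTPError.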

Performance Tuning

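Most of the latency in a metasearch request comes from waiting on upstream engines, so per-engine timeout values matter more than raw worker count. To stay responsive under concurrent load, scale your uWSGI or Granian workers to your CPU core count. The container images expose this through environment variables whose names depend on the application server in use (for example, UWSGI_WORKERS and UWSGI_THREADS on uWSGI-based images), so check the documentation for your image version before setting them.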

9. Conclusion: The Future of Curated Search

Building your own search layer with SearXNG is a significant technical commitment, but the reward is a truly sovereign discovery tool. While SearXNG may not possess Kagi’s proprietary indexes or integrated LLM summarizers natively, its transparency and configurability are unmatched.

By treating search as programmable infrastructure, you move from being a passive consumer of information to an active architect of your digital environment. As the “Dead Internet Theory” becomes more plausible due to AI-generated spam, the ability to weight, filter, and orchestrate your own search results will shift from a niche hobby to a fundamental developer skill.

The next step in this evolution is the integration of Local LLMs (via Ollama or LocalAI) to summarize the clean, ad-free results your SearXNG instance now provides—bringing you one step closer to a fully private, locally-controlled AI search assistant.
