The illusion of the trust layer: securing LLM prompts through interception

The rapid integration of Large Language Models (LLMs) into corporate workflows has created a significant security paradox: how can an organization leverage generative AI without exposing its industrial secrets, proprietary code, or client data to external systems? The threat is threefold: provider training policies that harvest conversation data by default, supply-chain vulnerabilities in the vendor ecosystem, and, increasingly, poorly secured access infrastructure, from unauthenticated API endpoints to exposed MCP (Model Context Protocol) servers that give attackers direct entry points. In the rush to close this gap, a new category of “trust layers” has emerged, promising to shield sensitive information through real-time prompt interception. However, as many Chief Information Security Officers (CISOs) are discovering, anonymizing a prompt addresses only one of these vectors, and not necessarily the most critical one.

Note (August 2025, updated February 2026): The threat model described above has materially expanded on two fronts. First, following near-simultaneous policy updates by OpenAI, Google, and Anthropic, all three providers now train on consumer conversations by default unless users explicitly opt out. Anthropic has also extended its retention window from 30 days to five years for Free, Pro, and Max plan users who do not opt out. Enterprise accounts, API access, and institutional plans remain excluded from training pipelines. Second, documented incidents show that the supply chain around providers is itself a vector: a contractor error exposed Anthropic customer metadata in January 2024, and a third-party vendor breach at OpenAI compromised business customer information in November 2025. In neither case were conversation contents directly involved. More structurally, a large-scale campaign documented between December 2025 and January 2026 recorded over 35,000 attack sessions targeting exposed LLM endpoints and unauthenticated MCP servers, confirming that access infrastructure is now an active attack surface. For organizations relying on consumer-grade access, or deploying LLM infrastructure without hardened authentication, the threat model described in this article is no longer theoretical.

A pixel-perfect leak

Consider a common scenario in modern software engineering: a senior developer, tasked with optimizing a legacy authentication module, copies a snippet of code into ChatGPT to refactor a complex function. They are aware of the company’s “No PII” policy, so they manually remove the specific server IP addresses. Yet, they leave behind the unique structure of the internal API, a few hardcoded salt values, and the naming convention of the private database schema.

This scenario is a textbook demonstration of why manual or superficial controls fail. Even without explicit names or emails, the organization's technical “DNA” is transmitted. When the developer hits enter, they are not just asking a question. If the account's conversations are used for training, they may also be contributing to a global pattern-matching engine. The trust layer must therefore act as more than a filter. It must be a semantic barrier that can tell generic logic apart from proprietary architecture. This becomes more pressing as tools like ChatGPT Atlas, OpenAI’s AI browser, integrate more deeply into user workflows, potentially increasing the surface area for such leaks.
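The failure can be reproduced with a minimal Python sketch. It assumes a filter that, like the developer's manual cleanup, strips only IPv4 addresses. A second pass then flags quoted literals by Shannon entropy, a heuristic that secret scanners commonly use. The 16-character minimum and the 3.5 bits-per-character threshold are illustrative assumptions, and the scanned snippet is hypothetical.

```python
import math
import re
from collections import Counter

# Naive filter mirroring the manual cleanup above: it only strips IPv4 addresses.
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")

# Quoted literals of 16+ characters are treated as candidate secrets (assumed threshold).
QUOTED = re.compile(r"['\"]([A-Za-z0-9+/=_\-]{16,})['\"]")


def strip_ips(text: str) -> str:
    """Replace every IPv4 address with a generic placeholder."""
    return IPV4.sub("[IP]", text)


def shannon_entropy(s: str) -> float:
    """Bits per character of the string's empirical character distribution."""
    if not s:
        return 0.0
    n = len(s)
    return -sum(c / n * math.log2(c / n) for c in Counter(s).values())


def suspicious_literals(text: str, threshold: float = 3.5) -> list[str]:
    """Return quoted literals whose entropy suggests a key, salt, or token."""
    return [lit for lit in QUOTED.findall(text) if shannon_entropy(lit) >= threshold]


snippet = '''
DB_HOST = "10.12.4.7"
SALT = "q9Zx7LmP2vR8tK4wN6bY"
def hash_pw(pw):
    return sha256((SALT + pw).encode()).hexdigest()
'''

cleaned = strip_ips(snippet)
print(cleaned)                       # the IP address is gone...
print(suspicious_literals(cleaned))  # ...but the hardcoded salt is still present
```

Entropy catches random-looking salts and keys. It does not catch the internal API structure or the schema naming conventions from the scenario, because those look like ordinary code. A purely pattern-based filter cannot see that kind of residue.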

The mechanics of interception: from DOM to Proxy

To understand the limitations of current solutions, one must look under the hood of how these interceptions occur. Generally, the industry has bifurcated into two primary architectural paths: client-side extensions and server-side gateways.

The browser extension workflow (Client-side)

Tools that operate as browser extensions work directly within the Document Object Model (DOM). When a user types into the input field of ChatGPT or Claude, the extension hooks into the submission event. The process is a time-critical sequence, because every step must finish before the request is sent:

- Capture: The extension intercepts the text buffer before the HTTP request is fired.

- PII Analysis: A local engine scans for predefined patterns. Even compact transformer models like DistilBERT are often too heavy for real-time client-side execution, so most extensions rely on quantized ONNX models or fast regex pipelines to maintain performance. Among open-source options, Microsoft Presidio remains the most established SDK, providing a modular framework that combines rule-based recognizers with ML-based NER models. Solutions like LLM Guard or MaskMyPrompt, among others, exemplify this local-first approach.

- Substitution: Sensitive strings are replaced by nondescript tokens, such as [INTERNAL_PROJECT_1] or [CLIENT_NAME_A].

- Transmission: The sanitized prompt is released to the LLM provider.
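The capture, analysis, and substitution steps can be sketched in a few lines of Python. This is a regex-only illustration and does not describe how any named product works. The three recognizers are assumptions, and the "Project <Name>" codename convention in particular is hypothetical. The function returns a token-to-original table, which the extension would keep for later re-hydration.

```python
import re

# Illustrative recognizers only; production tools combine many rules with NER models.
RECOGNIZERS: dict[str, re.Pattern[str]] = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "IPV4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    # Hypothetical internal naming convention, e.g. "Project Falcon".
    "INTERNAL_PROJECT": re.compile(r"\bProject [A-Z][a-z]+\b"),
}


def sanitize(prompt: str) -> tuple[str, dict[str, str]]:
    """Substitute detected values with typed tokens; return the text and token -> original map."""
    mapping: dict[str, str] = {}   # token -> original, kept client-side for re-hydration
    assigned: dict[str, str] = {}  # original -> token, so repeated values reuse one token
    for label, pattern in RECOGNIZERS.items():
        count = 0

        def substitute(match: re.Match[str], label: str = label) -> str:
            nonlocal count
            value = match.group(0)
            if value not in assigned:
                count += 1
                token = f"[{label}_{count}]"
                assigned[value] = token
                mapping[token] = value
            return assigned[value]

        prompt = pattern.sub(substitute, prompt)
    return prompt, mapping


masked, table = sanitize(
    "Ask marie.martin@example.com about Project Falcon on 10.0.0.5; cc marie.martin@example.com"
)
print(masked)  # Ask [EMAIL_1] about [INTERNAL_PROJECT_1] on [IPV4_1]; cc [EMAIL_1]
print(table)
```

Repeated values reuse the same token. The model can therefore still see that two mentions refer to the same entity without learning what that entity is.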

Note that some extensions bypass DOM manipulation entirely and operate at the network layer through the browser’s webRequest API. This lets them inspect the outgoing HTTP request before it leaves the browser and block it when a policy is violated. The approach is more robust against interface changes, but it is also more intrusive.

Upon receiving the response, the extension performs “re-hydration,” swapping the tokens back for the original data so the user sees a coherent answer. This approach is popular because it is easy to deploy, but it remains inherently fragile. Whenever OpenAI or Anthropic update their user interfaces, as the ChatGPT release timeline illustrates, the DOM selectors can break. The result is a “leaky” interception in which the prompt bypasses the filter entirely.
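Re-hydration is the inverse step. The following is a minimal sketch. It assumes that placeholders follow a bracketed [LABEL_N] shape with a numeric suffix, so a letter-suffixed token like [CLIENT_NAME_A] would need a different pattern. It also assumes the extension still holds the mapping produced at substitution time. The `unresolved` helper is a hypothetical addition for spotting placeholders the mapping does not know.

```python
import re

# Assumes placeholders look like [LABEL_N] with a numeric suffix, e.g. [EMAIL_1].
TOKEN = re.compile(r"\[[A-Z_]+_\d+\]")


def rehydrate(response: str, mapping: dict[str, str]) -> str:
    """Swap known tokens back to their original values; leave unknown tokens untouched."""
    return TOKEN.sub(lambda m: mapping.get(m.group(0), m.group(0)), response)


def unresolved(response: str, mapping: dict[str, str]) -> list[str]:
    """Token-shaped strings in the response that have no entry in the mapping."""
    return [t for t in TOKEN.findall(response) if t not in mapping]


table = {"[EMAIL_1]": "marie.martin@example.com", "[INTERNAL_PROJECT_1]": "Project Falcon"}
reply = "I drafted a note to [EMAIL_1] about [INTERNAL_PROJECT_1] and [CLIENT_2]."
print(rehydrate(reply, table))
print(unresolved(reply, table))  # ['[CLIENT_2]']
```

Unknown tokens are left in place rather than raising an error. This keeps the answer readable if the model invents or mangles a placeholder. A stricter design could surface such cases to the user instead.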

The shift toward the Gateway (Proxy-side)

The historical justification for moving away from simple extensions lies in the recurring data breaches of 2023 and 2024, where proprietary code was leaked despite the presence of basic browser filters. This has led to the rise of the LLM Gateway. In this model, the organization redirects all GenAI traffic through a centralized reverse proxy.

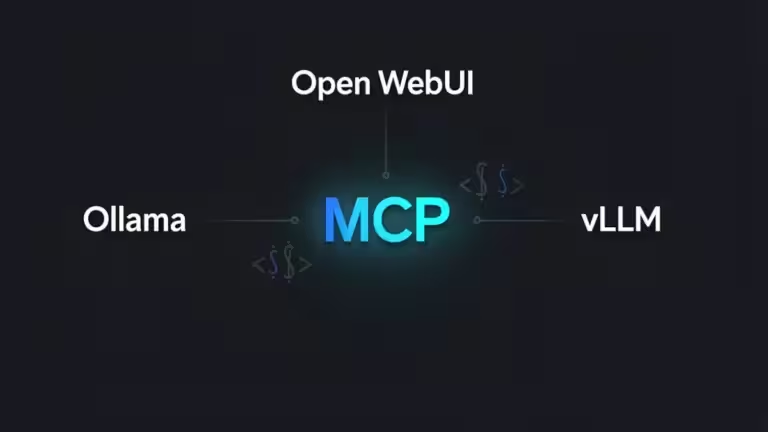

This architecture allows for far more robust Data Loss Prevention (DLP) policies. Unlike an extension, a gateway can execute heavy dual-model NER pipelines without adding significant latency for the end user, provided proper horizontal scaling and request batching are in place. Vendors like Lasso Security or Lakera Guard, among others, are positioning themselves in this “AI Firewall” segment. Centralized control also matters when an organization runs diverse inference engines such as vLLM, Ollama, and LM Studio. More importantly, the gateway provides a single point of audit, which is crucial for compliance with the EU AI Act or the GDPR. It also helps keep sensitive data within corporate boundaries even as AI features multiply across services such as Apple Intelligence and ChatGPT Search.
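The sketch below is a rough illustration of a single point of audit. It maps detected entity types to a block, mask, or allow decision. It then writes an audit record that stores a keyed digest of the prompt instead of the prompt itself. The entity categories, the policy tiers, and the key handling are all assumptions, since commercial gateways expose their own policy languages.

```python
import hashlib
import hmac
import json
import time
from dataclasses import asdict, dataclass

# Hypothetical policy tiers; a real gateway would load these from configuration.
BLOCK_TYPES = {"PRIVATE_KEY", "CREDENTIAL"}
MASK_TYPES = {"EMAIL", "PERSON", "IBAN", "IPV4"}
# Hypothetical key; in practice it would come from a secrets manager, never source code.
AUDIT_KEY = b"example-audit-key"


@dataclass
class AuditRecord:
    timestamp: float
    user_id: str
    destination: str
    decision: str
    entity_types: list[str]
    prompt_digest: str  # keyed digest only: the raw prompt is never written to the log


def decide(entity_types: set[str]) -> str:
    """Most restrictive rule wins: block > mask > allow."""
    if entity_types & BLOCK_TYPES:
        return "block"
    if entity_types & MASK_TYPES:
        return "mask"
    return "allow"


def audit(user_id: str, destination: str, prompt: str, entity_types: set[str]) -> AuditRecord:
    digest = hmac.new(AUDIT_KEY, prompt.encode("utf-8"), hashlib.sha256).hexdigest()
    return AuditRecord(
        timestamp=time.time(),
        user_id=user_id,
        destination=destination,
        decision=decide(entity_types),
        entity_types=sorted(entity_types),
        prompt_digest=digest,
    )


record = audit("u-1042", "api.openai.com", "Contact marie.martin@example.com", {"EMAIL"})
print(json.dumps(asdict(record)))
```

The sketch uses an HMAC rather than a plain SHA-256 digest. Short prompts containing PII could otherwise be recovered by hashing likely guesses. Auditors who hold the key can still confirm that a specific prompt was processed.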

The Managed Browser: Enforcement at the Runtime

While extensions struggle with DOM stability and gateways focus on the network layer, a third architectural pillar has emerged: the Enterprise Browser. Solutions such as Island or Prisma Access Browser (Palo Alto Networks), among others, represent a shift from securing the application to securing the workspace itself. Unlike a standard browser, a managed browser operates as a controlled environment in which security policies are built into the browser and enforced centrally.

- Shadow AI Mitigation: The primary advantage of an enterprise browser is its visibility into unmanaged accounts. While a gateway secures official API traffic, it cannot easily inspect a user logging into a personal ChatGPT account on chatgpt.com. The enterprise browser, however, can enforce policies — such as disabling copy-paste or file uploads — specifically for GenAI URLs.

- Contextual DLP: Because the browser has access to the device’s state and the user’s identity, it can apply “Just-In-Time” (JIT) protections. For example, it can allow a developer to paste code into a corporate-approved LLM instance but block the same action on a public web interface.

- Performance at the Edge: By performing PII detection directly within the browser’s runtime, these tools reduce the latency overhead associated with a round-trip to a remote proxy. It should be noted, however, that local analysis still consumes device resources — CPU and memory constraints on standard corporate hardware can introduce their own performance ceiling. More significantly, replacing the standard browser across an entire device fleet remains a substantial operational undertaking, both in cost and in user adoption friction — which is why gateway-based architectures remain dominant in practice despite their limitations.
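Enterprise browsers configure contextual rules like this through their own admin consoles. The Python below therefore only illustrates the decision logic behind the developer example, under assumed inputs. The approved internal host name and the list of public GenAI domains are hypothetical placeholders. Device and identity state are passed in as booleans.

```python
from urllib.parse import urlparse

# Hypothetical host names: one corporate-approved LLM instance and some public GenAI sites.
APPROVED_LLM_HOSTS = {"llm.corp.example"}
PUBLIC_GENAI_HOSTS = {"chatgpt.com", "claude.ai", "gemini.google.com"}


def _matches(host: str, domains: set[str]) -> bool:
    """True if host is one of the domains or a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def paste_allowed(url: str, managed_device: bool, corporate_identity: bool) -> bool:
    """Just-in-time decision for a paste into the page at `url`."""
    host = (urlparse(url).hostname or "").lower()
    if _matches(host, APPROVED_LLM_HOSTS):
        # Approved instance: only from a managed device with a corporate identity.
        return managed_device and corporate_identity
    if _matches(host, PUBLIC_GENAI_HOSTS):
        # Public GenAI interfaces: block regardless of device or identity.
        return False
    # Everything else falls outside this GenAI-specific rule.
    return True


print(paste_allowed("https://llm.corp.example/chat", True, True))  # True
print(paste_allowed("https://chatgpt.com/", True, True))           # False
```

The rule for the approved host fails closed. An unmanaged device or a personal identity loses paste rights even on the corporate instance.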

Reversible pseudonymization: the technical differentiator

The true value of a sophisticated trust layer lies in reversible pseudonymization. This goes beyond simple “redaction” (the permanent blacking out of data) by maintaining a secure, in-memory Mapping Table.

- In-Memory Mapping: To ensure high performance and security, the relationship between original PII and its token (e.g., Marie Martin ↔ [USER_1]) must be stored in volatile memory. This prevents data persistence and complies with “Zero-Data” principles. It should be noted, however, that this guarantee operates at the gateway level only. Full Zero-Data compliance requires additional safeguards: strict log suppression, swap memory isolation, crash dump exclusion, and careful configuration of any observability or monitoring pipelines.

- Semantic Consistency: The challenge is to ensure that the LLM understands the context without knowing the identity. If you replace a specific company name with [COMPANY_X], the model must still recognize it as a corporate entity to produce a relevant answer, such as a trend analysis.

- Entity Fusion: Advanced gateways use fusion algorithms to solve the “overlap” problem. If one model labels “Apple” as [FRUIT] and another as [ORGANIZATION], the gateway must resolve the conflict locally before the prompt is transmitted. This precision matters most for analytical prompts, where a mislabeled entity would distort the answer. Tools like Private AI or Nightfall’s platform, among others, are references for this kind of granular de-identification.
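The three properties above can be combined in one short Python sketch. Detector outputs are modeled as scored character spans, and the scores are hypothetical. Overlapping spans are fused by keeping the most confident one. A session-scoped table guarantees that the same value always receives the same token. A Python dictionary lives in process memory, but it provides none of the swap or crash-dump guarantees mentioned above. Those remain operating-system and deployment concerns.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Span:
    start: int    # character offset, inclusive
    end: int      # character offset, exclusive
    label: str    # entity type proposed by a detector
    score: float  # detector confidence in [0, 1]


def resolve_overlaps(spans: list[Span]) -> list[Span]:
    """Entity fusion: among overlapping spans keep the most confident (ties: longest)."""
    kept: list[Span] = []
    for s in sorted(spans, key=lambda s: (-s.score, -(s.end - s.start), s.start)):
        if all(s.end <= k.start or s.start >= k.end for k in kept):
            kept.append(s)
    return sorted(kept, key=lambda s: s.start)


class PseudonymMap:
    """Volatile token table: the same value always maps to the same token in a session."""

    def __init__(self) -> None:
        self._forward: dict[tuple[str, str], str] = {}
        self._reverse: dict[str, str] = {}
        self._counters: dict[str, int] = {}

    def token_for(self, label: str, value: str) -> str:
        key = (label, value)
        if key not in self._forward:
            self._counters[label] = self._counters.get(label, 0) + 1
            token = f"[{label}_{self._counters[label]}]"
            self._forward[key] = token
            self._reverse[token] = value
        return self._forward[key]

    def original(self, token: str) -> Optional[str]:
        return self._reverse.get(token)

    def clear(self) -> None:
        """Drop all mappings, e.g. at the end of a conversation."""
        self._forward.clear()
        self._reverse.clear()
        self._counters.clear()


def pseudonymize(text: str, spans: list[Span], table: PseudonymMap) -> str:
    parts: list[str] = []
    cursor = 0
    for s in resolve_overlaps(spans):
        parts.append(text[cursor:s.start])
        parts.append(table.token_for(s.label, text[s.start:s.end]))
        cursor = s.end
    parts.append(text[cursor:])
    return "".join(parts)


text = "Apple acquired a startup; Apple stock rose."
detections = [
    Span(0, 5, "FRUIT", 0.40),         # hypothetical output of detector A
    Span(0, 5, "ORGANIZATION", 0.92),  # hypothetical output of detector B
    Span(26, 31, "ORGANIZATION", 0.88),
]
table = PseudonymMap()
print(pseudonymize(text, detections, table))
```

Greedy selection by confidence is only one possible fusion rule. Real systems may instead weight detectors by entity type or defer to context-aware models.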

Blind spots of the giants: the “Zero-Data” opportunity

While tech giants are integrating native DLP—such as Microsoft Purview or Chrome Enterprise Premium—they often struggle with the “Shadow AI” inherent in BYOD (Bring Your Own Device) and personal accounts.

- Managed vs. Unmanaged: Native controls work well on corporate devices but often fail when an employee logs into a personal ChatGPT account on an unmanaged browser or private mobile device.

- Statistical Re-identification: Even with perfect masking, a risk remains: re-identification through cross-referencing. If an anonymized prompt contains enough specific technical details, a sophisticated model could potentially infer the organization’s identity through statistical correlation. This is a critical failure point in highly personalized AI systems, where accumulated metadata can bypass traditional filters. The risk has not yet been empirically demonstrated at scale in controlled studies, but it is considered plausible for high-context prompts and warrants explicit architectural consideration.

- The Sovereign Advantage: For industries like healthcare or defense, the goal is often absolute sovereignty. By building AI agents on provider-agnostic orchestration frameworks such as LangGraph, organizations can avoid depending on any single LLM provider. The trust layer then becomes a permanent part of their stack rather than a temporary fix.
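A toy anonymity-set calculation, in the spirit of k-anonymity, shows the intuition behind cross-referencing. The registry below is entirely hypothetical. The point is only that each technical detail left in a masked prompt (language, database, authentication scheme, region) shrinks the set of organizations that could have written it.

```python
# Entirely hypothetical registry: technical traits an observer might know about each organization.
REGISTRY = [
    {"name": "Org A", "lang": "Java", "db": "PostgreSQL", "auth": "SAML", "region": "EU"},
    {"name": "Org B", "lang": "Java", "db": "PostgreSQL", "auth": "OIDC", "region": "EU"},
    {"name": "Org C", "lang": "Java", "db": "Oracle", "auth": "SAML", "region": "US"},
    {"name": "Org D", "lang": "Python", "db": "PostgreSQL", "auth": "SAML", "region": "EU"},
    {"name": "Org E", "lang": "Java", "db": "PostgreSQL", "auth": "SAML", "region": "US"},
    {"name": "Org F", "lang": "Go", "db": "MySQL", "auth": "OIDC", "region": "EU"},
]


def anonymity_set(registry: list[dict[str, str]], revealed: dict[str, str]) -> list[str]:
    """Organizations consistent with every detail revealed by a (masked) prompt."""
    return [org["name"] for org in registry
            if all(org.get(key) == value for key, value in revealed.items())]


revealed: dict[str, str] = {}
for key, value in [("lang", "Java"), ("db", "PostgreSQL"), ("auth", "SAML"), ("region", "EU")]:
    revealed[key] = value
    matches = anonymity_set(REGISTRY, revealed)
    print(f"{len(matches)} candidate(s) after revealing {key}={value}: {matches}")
```

In this toy registry, the candidate set shrinks from four organizations to one after four unremarkable details. None of those details is PII, so none of them would be masked.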

The fundamental paradox: when the cure reinforces the disease

Before examining future architectural directions, it is worth asking a more uncomfortable question: is prompt anonymization, however technically sophisticated, actually a sound security strategy?

The answer is nuanced. For well-structured PII—names, email addresses, IBANs, phone numbers—anonymization works reliably and meaningfully reduces residual risk. But for the majority of enterprise use cases involving code, system architecture, or proprietary business logic, the sensitive information is the structure itself. Replacing ClientAuthService with [MODULE_1] in a proprietary authentication schema does not meaningfully reduce what is being exposed; it only obscures the label.
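Python's standard `ast` module can check the point about structure directly. The sketch below renames every identifier in a hypothetical snippet to an opaque label (ID_1, ID_2, and so on, because bracketed tokens are not valid Python names). It then compares the sequence of syntax-node types before and after renaming. The `Renamer` class and the snippet are illustrative, not part of any anonymization product.

```python
import ast

SOURCE = '''
class ClientAuthService:
    def verify_token(self, token):
        claims = self.decode(token)
        return claims["tenant"] == self.tenant_id
'''


class Renamer(ast.NodeTransformer):
    """Replace user-chosen identifiers with opaque labels (ID_1, ID_2, ...)."""

    def __init__(self) -> None:
        self.table: dict[str, str] = {}

    def _label(self, name: str) -> str:
        if name == "self":
            return name
        if name not in self.table:
            self.table[name] = f"ID_{len(self.table) + 1}"
        return self.table[name]

    def visit_ClassDef(self, node: ast.ClassDef) -> ast.ClassDef:
        node.name = self._label(node.name)
        self.generic_visit(node)
        return node

    def visit_FunctionDef(self, node: ast.FunctionDef) -> ast.FunctionDef:
        node.name = self._label(node.name)
        self.generic_visit(node)
        return node

    def visit_arg(self, node: ast.arg) -> ast.arg:
        node.arg = self._label(node.arg)
        return node

    def visit_Name(self, node: ast.Name) -> ast.Name:
        node.id = self._label(node.id)
        return node

    def visit_Attribute(self, node: ast.Attribute) -> ast.Attribute:
        node.attr = self._label(node.attr)
        self.generic_visit(node)
        return node


def shape(tree: ast.AST) -> list[str]:
    """The tree's node types in traversal order, ignoring every name."""
    return [type(node).__name__ for node in ast.walk(tree)]


original = ast.parse(SOURCE)
renamer = Renamer()
masked = renamer.visit(ast.parse(SOURCE))

print(ast.unparse(masked))               # requires Python 3.9+
print(shape(original) == shape(masked))  # True: the structure is untouched
```

Every label is now opaque. The control flow, the call pattern, and even the string key 'tenant' survive unchanged.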

More critically, there is a behavioral risk that is rarely discussed: anonymization tools create a false sense of security. An engineer who knows that an extension is “protecting” their prompts will systematically send content they would never have transmitted without that safety net. The existence of the filter modifies behavior—and not always in the intended direction.

The deeper question any CISO should ask before deploying a trust layer is therefore not “how do we anonymize this data?” but “should this data leave our perimeter at all?” If the answer is no, anonymization is not a solution: it is a patch over a policy failure. In those cases, the architecturally sound response is one of two options. The first is a locally hosted inference stack (via frameworks like Ollama or vLLM on internal infrastructure). The second is a strict, enforced usage policy that prohibits sending sensitive context to external models entirely.

Anonymization is a valid component of a defense-in-depth strategy. It should never be mistaken for the strategy itself.

Future perspectives: from interception to inference sovereignty

Will the performance of on-device models render interception obsolete? The question is no longer purely speculative. Emerging compression techniques such as DFloat11 are pushing the boundaries of what is achievable on consumer-grade hardware, and the trajectory is clear: local inference is becoming a credible alternative, not just a fallback.

This shift reframes the entire trust layer debate. Interception, whether via extension, gateway, or managed browser, is inherently a compensatory mechanism. It exists because sensitive data is being sent somewhere it arguably should not go. Local inference removes that premise. When the model runs entirely within the organization’s perimeter, on-premises or on-device, there is no prompt to intercept, no provider to trust, and no provider-side supply chain to compromise. The external share of the attack surface disappears. The local stack must still be hardened, however: the exposed LLM endpoints and unauthenticated MCP servers noted earlier show that self-hosted infrastructure is itself a target.

The practical path forward is likely a tiered architecture. Local models, deployed via frameworks like Ollama or vLLM on internal infrastructure, handle sensitive workloads: code review, document analysis, internal knowledge retrieval. Cloud-based frontier models remain available for tasks where reasoning depth outweighs data sensitivity. An orchestration layer (for example, one built on MCP) governs the boundary between the two. In this model, the trust layer is not replaced. It moves from the prompt pipeline to the architectural decision about which workloads cross the perimeter at all.
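A tiered policy can start as a simple routing function. In this sketch, the endpoint URLs are hypothetical. Both vLLM and Ollama can expose OpenAI-compatible HTTP APIs on internal hosts, but the exact host and port here are placeholders. The task names mirror the workloads listed above. Anything not explicitly classified as public is routed locally.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"


# Hypothetical endpoints: an internal OpenAI-compatible server (e.g. vLLM) and a cloud API.
LOCAL_ENDPOINT = "http://llm.internal.example:8000/v1"
CLOUD_ENDPOINT = "https://api.frontier-provider.example/v1"

# Workloads the tiered model assigns to local inference.
LOCAL_ONLY_TASKS = {"code_review", "document_analysis", "internal_retrieval"}


def route(task: str, sensitivity: Sensitivity) -> str:
    """Return the endpoint a request may use; anything not public stays local (fail closed)."""
    if task in LOCAL_ONLY_TASKS or sensitivity is not Sensitivity.PUBLIC:
        return LOCAL_ENDPOINT
    return CLOUD_ENDPOINT


print(route("code_review", Sensitivity.PUBLIC))        # local endpoint
print(route("market_brainstorm", Sensitivity.PUBLIC))  # cloud endpoint
```

With this design, a misclassified request at worst runs on a weaker local model. It never leaks a sensitive prompt to the cloud.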

Until local models close the reasoning gap with frontier systems like Google Gemini or OpenAI’s latest generation, this hybrid posture represents the most defensible enterprise strategy. But the direction of travel is unambiguous: the organizations that will be best positioned are those building inference sovereignty today, not those still optimizing their anonymization pipelines tomorrow.

FAQ

How do these tools recognize sensitive data?

Most professional tools use a combination of Pattern Matching (Regex/Checksums) and Named Entity Recognition (NER). NER is a machine learning process that classifies words into categories like “Person” or “Organization” based on context, rather than just a list of names.
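Checksums make pattern matching precise for structured identifiers. In the example below, a permissive regex first finds IBAN candidates. The sketch then validates them with the mod-97 check defined for IBANs (ISO 13616, using ISO 7064 MOD 97-10). The candidate regex is a simplified assumption and does not enforce country-specific lengths.

```python
import re

# Permissive candidate pattern (assumption): country code, check digits, then groups of
# up to four alphanumerics, optionally separated by single spaces.
CANDIDATE = re.compile(r"\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]{4}){2,7}(?: ?[A-Z0-9]{1,4})?\b")


def iban_is_valid(candidate: str) -> bool:
    """Mod-97 check: move the first four characters to the end, map A..Z to 10..35,
    and accept only if the resulting integer leaves remainder 1 when divided by 97."""
    iban = re.sub(r"\s+", "", candidate).upper()
    if not re.fullmatch(r"[A-Z]{2}\d{2}[A-Z0-9]{11,30}", iban):
        return False
    rearranged = iban[4:] + iban[:4]
    digits = "".join(str(int(ch, 36)) for ch in rearranged)
    return int(digits) % 97 == 1


def find_ibans(text: str) -> list[str]:
    """Regex proposes candidates; the checksum discards look-alikes."""
    return [m.group(0) for m in CANDIDATE.finditer(text) if iban_is_valid(m.group(0))]


print(find_ibans("Pay GB82 WEST 1234 5698 7654 32, not GB82 WEST 1234 5698 7654 33."))
```

The second string matches the regex but differs from the first by a single digit. The mod-97 check rejects it, because that check always detects single-digit substitutions.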

Does the LLM still understand the anonymized prompt?

Generally, yes. The semantic structure is preserved: the LLM can tell that [USER_1] is a person and [LOCATION_A] is a place. As a result, it can still perform complex reasoning tasks. This matters for analysis workflows in Google Drive and other integrated environments.

What is the impact on latency?

Advanced gateways are optimized to add minimal overhead, typically between 100 and 200 ms in lightweight, optimized configurations. However, complex dual-model NER pipelines can exceed 500 ms under heavy load or when processing high-density text. Serving-side optimizations, such as the request scheduling and batching used by inference servers like vLLM, help maintain high throughput in production environments.
